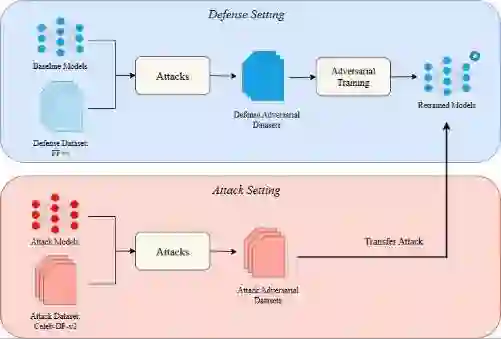

Deepfake detection systems deployed in real-world environments face adversaries capable of crafting imperceptible perturbations that degrade model performance. While adversarial training is a widely adopted defense, its effectiveness under realistic conditions, where attackers operate with limited knowledge and mismatched data distributions, remains underexplored. In this work, we extend the DUMB (Dataset soUrces, Model architecture and Balance) and DUMBer methodologies to deepfake detection. We evaluate detectors' robustness against adversarial attacks under transferability constraints and cross-dataset configurations to extract real-world insights. Our study spans five state-of-the-art detectors (RECCE, SRM, XCeption, UCF, SPSL), three attacks (PGD, FGSM, FPBA), and two datasets (FaceForensics++ and Celeb-DF-V2). We analyze both the attacker and defender perspectives, mapping results to mismatch scenarios. Experiments show that adversarial training strategies reinforce robustness in in-distribution cases but, depending on the strategy adopted, can degrade it under cross-dataset configurations. These findings highlight the need for case-aware defense strategies in real-world applications exposed to adversarial attacks.
