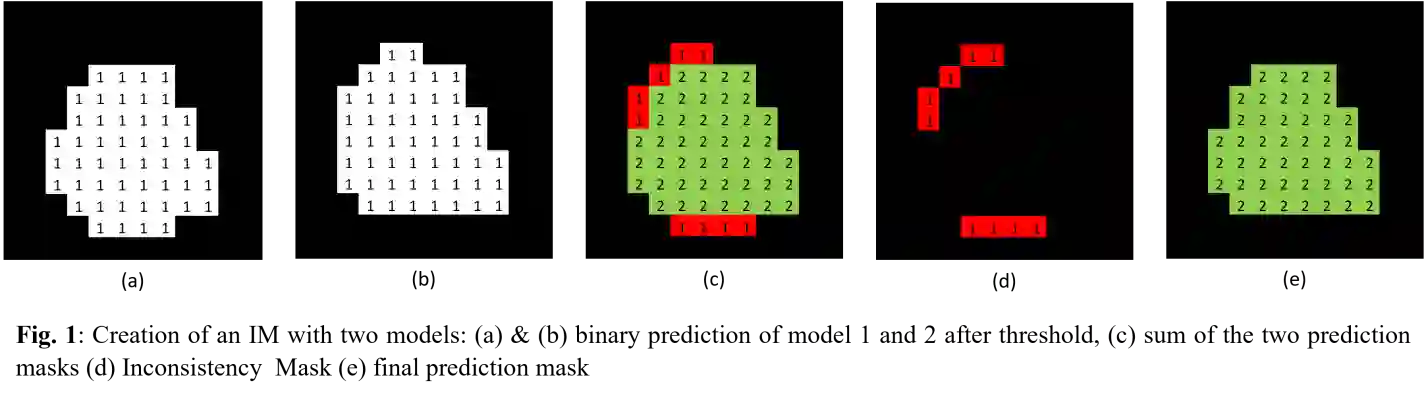

Generating sufficient labeled data is a significant hurdle in the efficient execution of deep learning projects, especially in uncharted territories of image segmentation where labeling demands extensive time, unlike classification tasks. Our study confronts this challenge, operating in an environment constrained by limited hardware resources and the lack of extensive datasets or pre-trained models. We introduce the novel use of Inconsistency Masks (IM) to effectively filter uncertainty in image-pseudo-label pairs, substantially elevating segmentation quality beyond traditional semi-supervised learning techniques. By integrating IM with other methods, we demonstrate remarkable binary segmentation performance on the ISIC 2018 dataset, starting with just 10% labeled data. Notably, three of our hybrid models outperform those trained on the fully labeled dataset. Our approach consistently achieves exceptional results across three additional datasets and shows further improvement when combined with other techniques. For comprehensive and robust evaluation, this paper includes an extensive analysis of prevalent semi-supervised learning strategies, all trained under identical starting conditions. The full code is available at: https://github.com/MichaelVorndran/InconsistencyMasks
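The abstract does not spell out how an Inconsistency Mask is computed, so the following is only a minimal sketch under a stated assumption: that "inconsistency" means pixel-wise disagreement between the binary predictions of several models on the same unlabeled image. The function names `inconsistency_mask` and `filter_pseudo_label`, and the ignore value `-1`, are hypothetical and not taken from the linked repository. Plain Python lists are used to keep the example dependency-free; real code would likely use an array library.

```python
from typing import List

Mask = List[List[int]]  # binary mask: 1 = foreground, 0 = background


def inconsistency_mask(predictions: List[Mask]) -> Mask:
    """Return a mask with 1 where the binary predictions do not all agree.

    Assumption: every prediction has the same height and width.
    """
    n = len(predictions)
    height, width = len(predictions[0]), len(predictions[0][0])
    im = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            votes = sum(p[y][x] for p in predictions)
            # Agreement means every model voted 0 or every model voted 1.
            im[y][x] = 0 if votes in (0, n) else 1
    return im


def filter_pseudo_label(pseudo_label: Mask, im: Mask, ignore: int = -1) -> Mask:
    """Replace inconsistent pixels with `ignore` so a loss can skip them."""
    return [
        [ignore if im[y][x] else value for x, value in enumerate(row)]
        for y, row in enumerate(pseudo_label)
    ]


# Two hypothetical model outputs for the same 2x3 image.
pred_a = [[1, 1, 0],
          [0, 1, 0]]
pred_b = [[1, 0, 0],
          [0, 1, 1]]

im = inconsistency_mask([pred_a, pred_b])
filtered = filter_pseudo_label(pred_a, im)
print(im)        # [[0, 1, 0], [0, 0, 1]]
print(filtered)  # [[1, -1, 0], [0, 1, -1]]
```

In this sketch, only the pixels on which both models agree survive as pseudo-label targets; the uncertain pixels are flagged rather than guessed, which is the filtering idea the abstract describes.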

