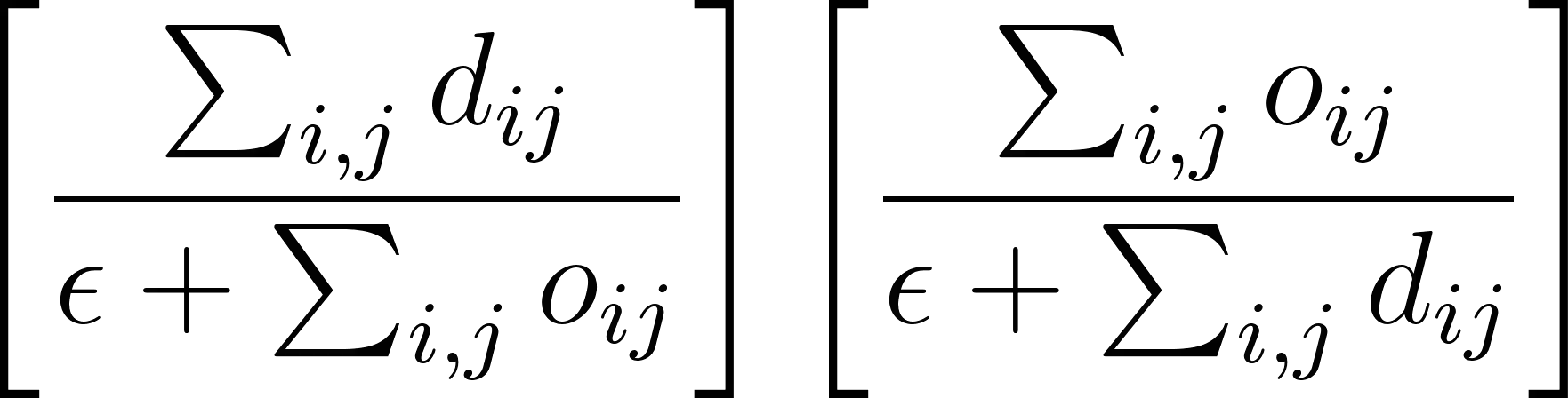

We introduce a novel anchor-free contrastive learning (AFCL) method built on our proposed Similarity-Orthogonality (SimO) loss. The approach minimizes a semi-metric discriminative loss that optimizes two objectives at once: it reduces both the distance and the orthogonality between embeddings of similar inputs, and increases both for dissimilar inputs, which supports more fine-grained contrastive learning. Trained with SimO loss, AFCL creates a fiber bundle topological structure in the embedding space, forming class-specific neighborhoods that are internally cohesive yet mutually orthogonal. We validate the method on the CIFAR-10 dataset and provide visualizations of the effect of SimO loss on the embedding space. The results show the formation of distinct, orthogonal class neighborhoods, indicating that the method produces well-structured embeddings that balance class separation with intra-class variability. This work opens new avenues for understanding and leveraging the geometric properties of learned representations in a range of machine learning tasks.
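To make the two objectives concrete, the following is a minimal illustrative sketch in NumPy. It is not the SimO loss, whose exact form is not given in this abstract. It is a toy anchor-free objective built on assumptions: the function name `toy_sim_orth_loss`, the `margin` parameter, the use of `1 - cos^2` as an orthogonality measure, and the equal weighting of terms are all hypothetical choices made for illustration. Same-class pairs are pulled together and toward collinearity, while different-class pairs are pushed apart and toward orthogonality, with no anchor sample singled out.

```python
import numpy as np


def toy_sim_orth_loss(embeddings, labels, margin=1.0, eps=1e-12):
    """Toy pairwise loss combining distance and orthogonality terms.

    Hypothetical illustration only; NOT the SimO loss from the paper.
    """
    z = np.asarray(embeddings, dtype=float)
    y = np.asarray(labels)
    n = z.shape[0]

    # Pairwise Euclidean distances between all embeddings.
    diff = z[:, None, :] - z[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))

    # Pairwise cosine similarities (0 means orthogonal, +/-1 means collinear).
    norms = np.linalg.norm(z, axis=1, keepdims=True)
    unit = z / np.maximum(norms, eps)
    cos = unit @ unit.T

    # Every pair is treated symmetrically: there is no anchor.
    off_diag = ~np.eye(n, dtype=bool)
    same = (y[:, None] == y[None, :]) & off_diag
    other = y[:, None] != y[None, :]

    # Similar pairs: small distance, low orthogonality (1 - cos^2 near 0).
    pull = dist ** 2 + (1.0 - cos ** 2)
    # Dissimilar pairs: distance at least `margin`, high orthogonality (cos^2 near 0).
    push = np.maximum(0.0, margin - dist) ** 2 + cos ** 2

    loss_same = pull[same].mean() if same.any() else 0.0
    loss_other = push[other].mean() if other.any() else 0.0
    return float(loss_same + loss_other)


if __name__ == "__main__":
    # Two classes placed on orthogonal axes, then the same points mislabeled.
    points = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])
    print(toy_sim_orth_loss(points, [0, 0, 1, 1]))
    print(toy_sim_orth_loss(points, [0, 1, 0, 1]))
```

In this toy setting the loss is lowest when each class collapses onto its own direction and the class directions are mutually orthogonal, which mirrors the "cohesive yet orthogonal neighborhoods" described above. A real training setup would use a differentiable framework and the loss as defined in the paper.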
