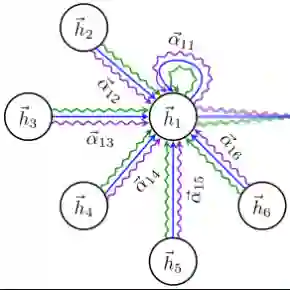

We study the visual semantic embedding problem for image-text matching. Most existing work uses a tailored cross-attention mechanism to perform local alignment between the image and text modalities. Although more powerful than the unimodal dual-encoder approach, cross-attention is computationally expensive. This work introduces a dual-encoder image-text matching model that represents each caption as a scene graph, with nodes for objects and attributes interconnected by relational edges. Representing the caption as a scene graph lets the model exploit the strong relational inductive bias of graph neural networks: a graph attention network encodes object-attribute and object-object semantic relations efficiently, yielding a system that is both robust and fast. To train the model, we propose losses that align the image and caption at both the holistic level (image-caption) and the local level (image-object entity), which we show is key to the model's success. We term our model CORA, the Composition model for Object Relations and Attributes. Experimental results on two prominent image-text retrieval benchmarks, Flickr30K and MSCOCO, demonstrate that CORA outperforms existing state-of-the-art cross-attention methods, which are computationally expensive, in recall while retaining the fast computation speed of a dual encoder.
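To make the pipeline described in the abstract more concrete, the following is a minimal sketch in plain Python, not the authors' implementation. It builds a scene graph for a caption, runs one parameter-free dot-product attention step over neighboring nodes (a simplified stand-in for a learned graph attention network), and scores an image embedding against the caption at the holistic and local levels. The `SceneGraph` class, the `attribute_of` edge label, the toy 3-dimensional features, mean pooling over object nodes, and the hinge-style `hinge_loss` are all assumptions made for illustration; the abstract does not specify any of them.

```python
import math
from dataclasses import dataclass, field


@dataclass
class SceneGraph:
    """Caption as a graph: object/attribute nodes plus labeled edges (hypothetical layout)."""
    nodes: list = field(default_factory=list)  # (name, kind), kind is "object" or "attribute"
    edges: list = field(default_factory=list)  # (src_index, label, dst_index)

    def add_node(self, name, kind):
        self.nodes.append((name, kind))
        return len(self.nodes) - 1

    def add_edge(self, src, label, dst):
        self.edges.append((src, label, dst))

    def neighbors(self, i):
        # Edges are treated as undirected for message passing; a self-loop is included.
        out = {i}
        for s, _, d in self.edges:
            if s == i:
                out.add(d)
            if d == i:
                out.add(s)
        return sorted(out)


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def normalize(v):
    n = math.sqrt(dot(v, v)) or 1.0
    return [a / n for a in v]


def softmax(xs):
    m = max(xs)
    e = [math.exp(x - m) for x in xs]
    s = sum(e)
    return [x / s for x in e]


def attention_layer(graph, feats):
    """Each node becomes a softmax-weighted average of its neighbors (no learned weights)."""
    out = []
    for i in range(len(graph.nodes)):
        nbrs = graph.neighbors(i)
        w = softmax([dot(feats[i], feats[j]) for j in nbrs])
        out.append([sum(wk * feats[j][d] for wk, j in zip(w, nbrs))
                    for d in range(len(feats[i]))])
    return out


def matching_scores(image_vec, graph, feats):
    """Return (holistic image-caption score, per-object image-entity scores) as cosines."""
    h = attention_layer(graph, feats)
    img = normalize(image_vec)
    obj = [i for i, (_, kind) in enumerate(graph.nodes) if kind == "object"]
    local = {graph.nodes[i][0]: dot(img, normalize(h[i])) for i in obj}
    pooled = [sum(h[i][d] for i in obj) / len(obj) for d in range(len(img))]
    return dot(img, normalize(pooled)), local


def hinge_loss(pos_score, neg_score, margin=0.2):
    # Assumed ranking loss: a matching pair should beat a mismatched one by `margin`.
    return max(0.0, margin - pos_score + neg_score)


# Caption: "a black dog chases a red ball"
graph = SceneGraph()
dog = graph.add_node("dog", "object")
black = graph.add_node("black", "attribute")
ball = graph.add_node("ball", "object")
red = graph.add_node("red", "attribute")
graph.add_edge(black, "attribute_of", dog)
graph.add_edge(red, "attribute_of", ball)
graph.add_edge(dog, "chases", ball)

# Toy node features and image embedding (made-up numbers, not model outputs).
feats = [[1.0, 0.0, 0.2], [0.8, 0.1, 0.0], [0.0, 1.0, 0.3], [0.1, 0.9, 0.0]]
image_vec = [0.7, 0.6, 0.3]
holistic, local = matching_scores(image_vec, graph, feats)
```

Because the image embedding and the caption embedding are computed independently and compared with a single dot product, caption embeddings can be precomputed once and reused across every image, which is the source of the dual encoder's speed advantage over cross-attention.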
