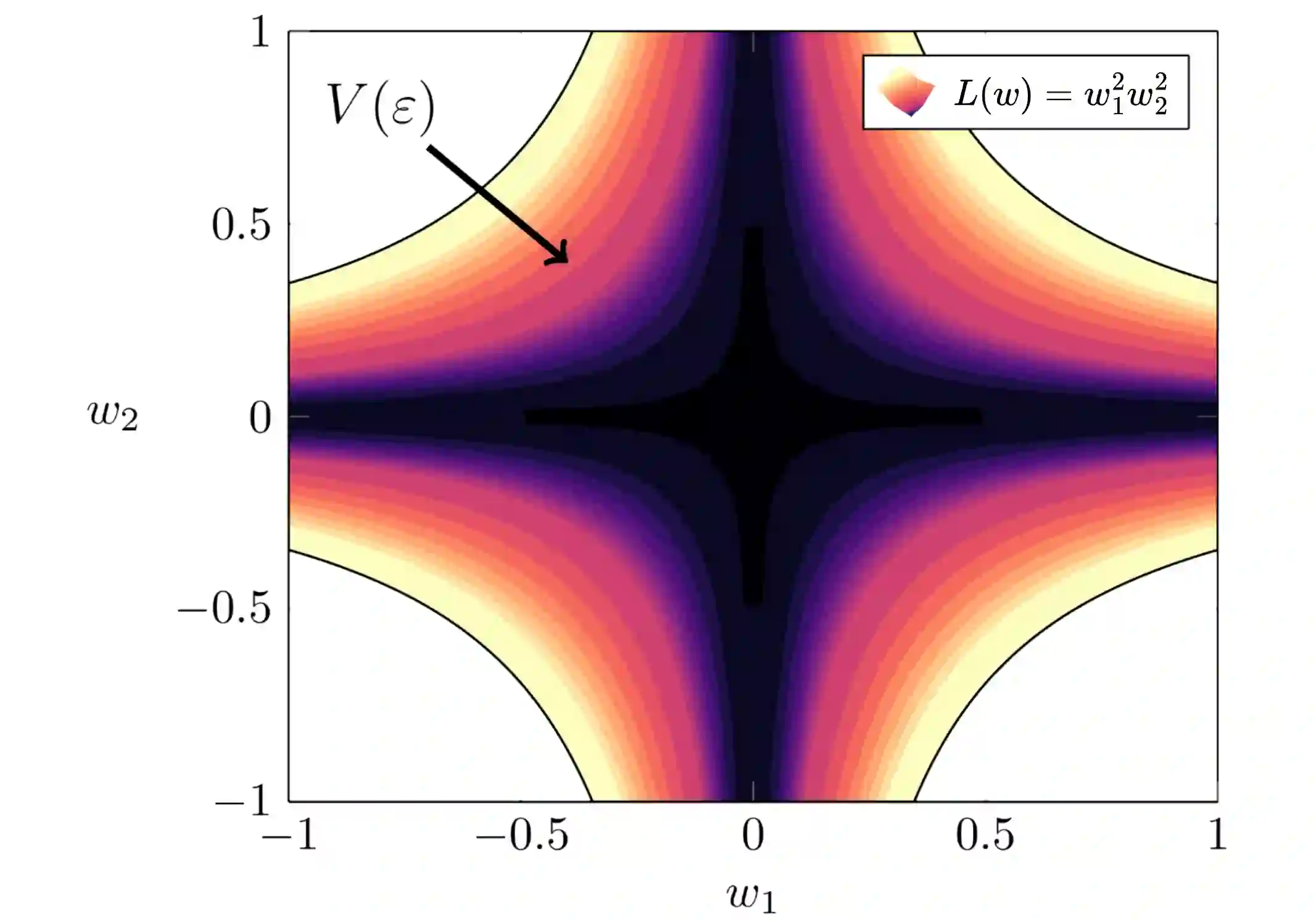

Mechanistic interpretability aims to reverse engineer the algorithms implemented by neural networks by studying their weights and activations. One obstacle to this is that many of the parameters inside a network are not involved in the computation the network implements. These degenerate parameters may obfuscate internal structure. Singular learning theory teaches us that neural network parameterizations are biased towards being more degenerate, and that parameterizations with more degeneracy are likely to generalize further. We identify three ways that network parameters can be degenerate: linear dependence between activations in a layer; linear dependence between gradients passed back to a layer; and ReLUs which fire on the same subset of datapoints. We also present a heuristic argument that modular networks are likely to be more degenerate, and we develop a metric for identifying modules in a network based on this argument. We propose that if a neural network can be represented in a way that is invariant to reparameterizations that exploit these degeneracies, then this representation is likely to be more interpretable, and we provide some evidence that such a representation is likely to have sparser interactions. We introduce the Interaction Basis, a tractable technique for obtaining a representation that is invariant to degeneracies arising from linear dependence of activations or Jacobians.
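To make the first kind of degeneracy concrete, here is a minimal NumPy sketch on a toy linear layer. It is an illustration under stated assumptions, not the Interaction Basis itself: the helper name `free_weight_directions`, the synthetic activations, and the convention that weights act on the right (`acts @ W`) are all hypothetical choices made for this example. When the activations in a layer are linearly dependent over the dataset, the outgoing weights can be moved along the corresponding null directions without changing the layer's output on any datapoint.

```python
import numpy as np

def free_weight_directions(acts, tol=1e-8):
    """Orthonormal basis of directions v with acts @ v ~ 0 on the dataset.

    acts: array of shape (n_datapoints, n_features).
    Hypothetical helper for illustration only.
    """
    _, s, vt = np.linalg.svd(acts, full_matrices=True)
    rank = int(np.sum(s > tol * s.max()))
    # Rows of vt beyond the numerical rank span the null space of acts.
    return vt[rank:].T  # shape (n_features, n_features - rank)

rng = np.random.default_rng(0)
n_data, n_out = 100, 4

# Assumed toy activations: the third feature is a linear combination
# of the first two, so the three features are linearly dependent.
a = rng.normal(size=(n_data, 2))
acts = np.column_stack([a[:, 0], a[:, 1], 2.0 * a[:, 0] - a[:, 1]])

W = rng.normal(size=(3, n_out))  # outgoing weights of the layer

null_basis = free_weight_directions(acts)

# Any weight perturbation built from null directions is invisible
# to the layer's outputs on this dataset.
delta = null_basis @ rng.normal(size=(null_basis.shape[1], n_out))
out_before = acts @ W
out_after = acts @ (W + delta)
max_change = float(np.max(np.abs(out_before - out_after)))
```

In this toy case the null space is one-dimensional, so each output unit has one free weight direction, for four degenerate parameter directions in `W` in total. A real network would need the same check on activations collected over the training distribution.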
