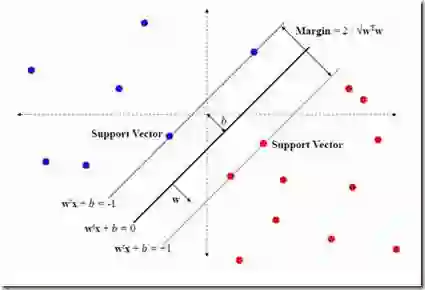

Quantitatively controlling emotional expressiveness in speech generation remains a significant challenge. In this work, we present a novel approach for manipulating how emotions are rendered in generated speech. We propose a hierarchical emotion distribution extractor, Hierarchical ED, that quantifies the intensity of emotions at different levels of granularity. Support vector machines (SVMs) are employed to rank emotion intensity, resulting in a hierarchical emotional embedding. Hierarchical ED is then integrated into the FastSpeech2 framework, guiding the model to learn emotion intensity at the phoneme, word, and utterance levels. During synthesis, users can manually edit the emotional intensity of the generated voices. Both objective and subjective evaluations demonstrate the effectiveness of the proposed network for fine-grained quantitative emotion editing.

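To make the idea of editing intensity at three levels more concrete, here is a minimal Python sketch of a container for per-level emotion intensities. It is not the authors' implementation: the class name `HierarchicalED`, the emotion label set, the `[0, 1]` intensity range, the `word_of_phoneme` alignment, and the per-phoneme concatenation in `per_phoneme_rows` are all assumptions made for illustration. The intensity values themselves are taken as given; the SVM-based ranking that would produce them is not shown.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical label set; the abstract does not list the emotions used.
EMOTIONS = ("angry", "happy", "sad", "surprise")


def neutral() -> Dict[str, float]:
    """Return an intensity dict with every emotion at 0.0."""
    return {e: 0.0 for e in EMOTIONS}


def clamp01(x: float) -> float:
    """Clamp to [0, 1] (the intensity range is an assumption)."""
    return max(0.0, min(1.0, x))


@dataclass
class HierarchicalED:
    """Emotion intensities at utterance, word, and phoneme level."""
    utterance: Dict[str, float]
    words: List[Dict[str, float]]
    phonemes: List[Dict[str, float]]
    word_of_phoneme: List[int]  # index of the word each phoneme belongs to

    def edit(self, level: str, emotion: str, value: float,
             index: Optional[int] = None) -> None:
        """Set one emotion's intensity at a level; index=None edits all segments."""
        if emotion not in EMOTIONS:
            raise ValueError(f"unknown emotion: {emotion}")
        value = clamp01(value)
        if level == "utterance":
            self.utterance[emotion] = value
            return
        if level not in ("word", "phoneme"):
            raise ValueError(f"unknown level: {level}")
        segments = self.words if level == "word" else self.phonemes
        targets = segments if index is None else [segments[index]]
        for seg in targets:
            seg[emotion] = value

    def per_phoneme_rows(self) -> List[List[float]]:
        """One row per phoneme: phoneme, word, then utterance intensities.

        Concatenation is an illustrative choice, not the paper's stated design.
        """
        rows = []
        for i, ph in enumerate(self.phonemes):
            word = self.words[self.word_of_phoneme[i]]
            rows.append(
                [ph[e] for e in EMOTIONS]
                + [word[e] for e in EMOTIONS]
                + [self.utterance[e] for e in EMOTIONS]
            )
        return rows


if __name__ == "__main__":
    ed = HierarchicalED(
        utterance=neutral(),
        words=[neutral(), neutral()],
        phonemes=[neutral() for _ in range(3)],
        word_of_phoneme=[0, 0, 1],
    )
    ed.edit("word", "happy", 0.8, index=1)  # raise "happy" on the second word only
    print(ed.per_phoneme_rows())
```

Under these assumptions, an edit at the word level changes only the rows of the phonemes aligned to that word, while an utterance-level edit affects every row. This mirrors the coarse-to-fine control the abstract describes.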