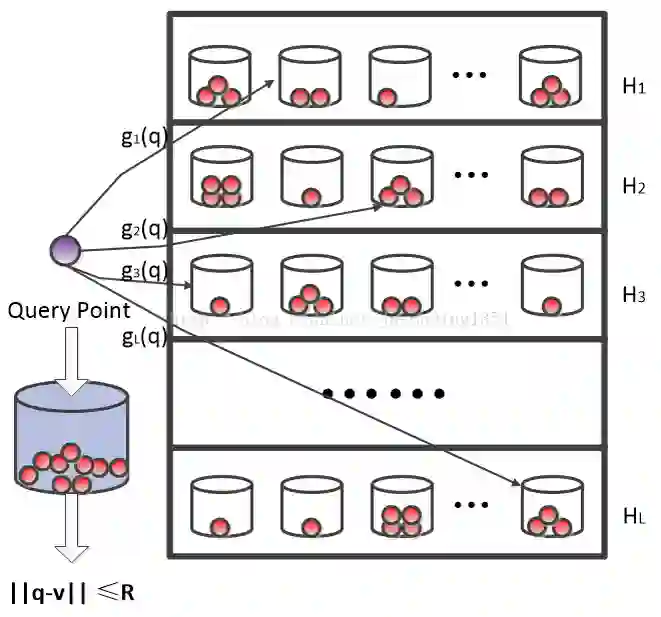

Locality sensitive hashing (LSH) is a fundamental algorithmic toolkit for approximate nearest neighbour search and has been used extensively in large-scale data processing applications such as near-duplicate detection, nearest neighbour search, and clustering. In this work, we propose faster and more space-efficient locality sensitive hash functions for Euclidean distance and cosine similarity on tensor data. The naive approach to LSH for tensor data first reshapes the tensor into a vector and then applies an existing LSH method for vector data: E2LSH for Euclidean distance or SRP for cosine similarity. However, this approach becomes impractical for higher-order tensors because the size of the reshaped vector grows exponentially with the order of the tensor, and the size of the LSH parameters grows exponentially with it. To address this problem, we propose two methods for each measure, building on CP and tensor train (TT) decomposition techniques: CP-E2LSH and TT-E2LSH for Euclidean distance, and CP-SRP and TT-SRP for cosine similarity. Our approaches are space-efficient and can be applied efficiently to low-rank CP or TT tensors. We provide a rigorous theoretical analysis of the correctness and efficacy of our proposals.
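To make the contrast concrete, the following is a minimal NumPy sketch, not the paper's construction. It hashes a small order-3 tensor the naive way (flatten, then apply the standard E2LSH and SRP hash forms), and then shows that a projection stored in CP format can be applied to a CP-format input using only the factor matrices. The helper names (`e2lsh_hash`, `srp_hash`, `cp_to_dense`, `cp_inner`), the dimensions, the ranks, the bucket width `w`, and the Gaussian factors are all illustrative assumptions; the abstract does not specify the distribution or scaling of the projection factors.

```python
import numpy as np

def e2lsh_hash(x, a, b, w):
    """Standard E2LSH form: bucket index floor((<a, x> + b) / w)."""
    return int(np.floor((a @ x + b) / w))

def srp_hash(x, a):
    """Sign random projection: 1 if <a, x> >= 0, else 0."""
    return int(a @ x >= 0)

def cp_to_dense(factors):
    """Materialise a CP tensor from factor matrices of shape (d_n, R)."""
    rank = factors[0].shape[1]
    dense = np.zeros([f.shape[0] for f in factors])
    for r in range(rank):
        term = factors[0][:, r]
        for f in factors[1:]:
            term = np.multiply.outer(term, f[:, r])
        dense += term
    return dense

def cp_inner(factors_p, factors_x):
    """<P, X> for two CP tensors, computed from the factors only."""
    gram = np.ones((factors_p[0].shape[1], factors_x[0].shape[1]))
    for fp, fx in zip(factors_p, factors_x):
        gram *= fp.T @ fx  # per-mode Gram matrix, combined elementwise
    return float(gram.sum())

rng = np.random.default_rng(0)
dims, rank_x, rank_p = (4, 5, 6), 2, 3  # hypothetical sizes

# Input tensor given in CP format (assumed low rank).
x_factors = [rng.standard_normal((d, rank_x)) for d in dims]
x_dense = cp_to_dense(x_factors)

# Naive route: flatten, then hash with a dense random vector.
a = rng.standard_normal(x_dense.size)
w = 4.0                      # assumed bucket width
b = rng.uniform(0, w)
naive_bucket = e2lsh_hash(x_dense.ravel(), a, b, w)
naive_bit = srp_hash(x_dense.ravel(), a)
dense_params = x_dense.size  # product of the mode sizes

# Structured route: projection tensor kept as CP factors (placeholder
# Gaussian entries, not the paper's distribution or scaling).
p_factors = [rng.standard_normal((d, rank_p)) for d in dims]
cp_params = rank_p * sum(dims)  # linear in the order of the tensor
structured = cp_inner(p_factors, x_factors)
dense_check = float(cp_to_dense(p_factors).ravel() @ x_dense.ravel())
```

Here the dense projection needs one parameter per tensor entry, while the CP-format projection needs only `rank_p * sum(dims)`, and `structured` agrees with `dense_check` up to floating-point error without ever forming the full projection tensor. Whether a projection of this kind preserves the LSH property is exactly what the paper's analysis addresses; this sketch does not demonstrate it.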
