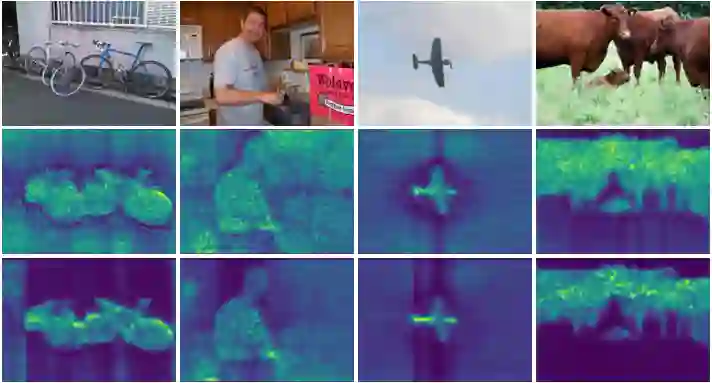

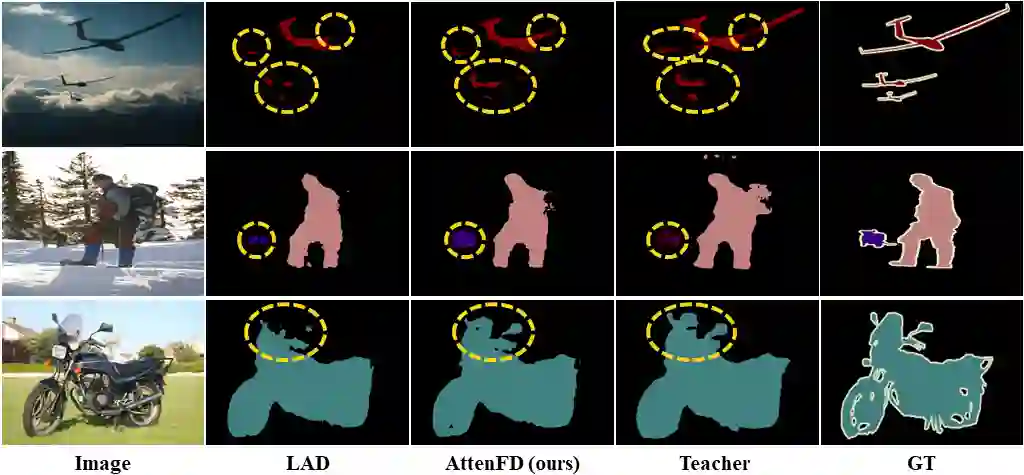

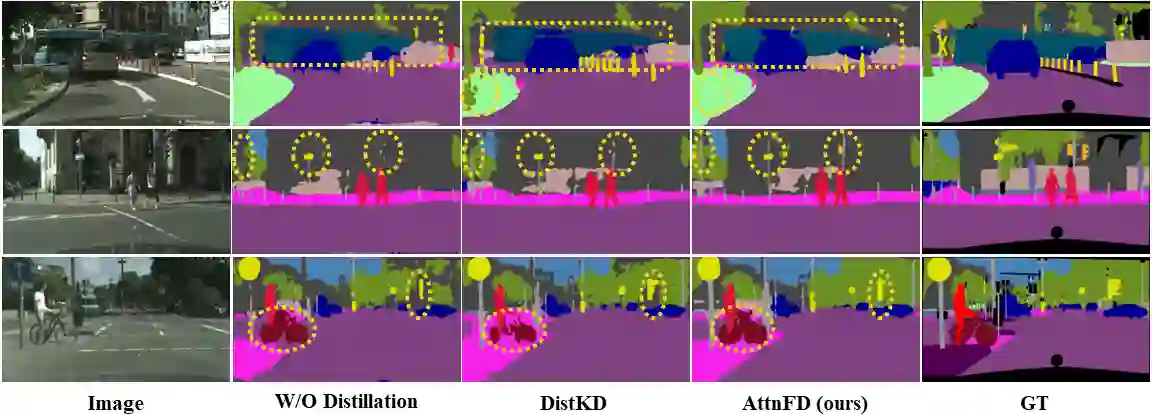

In contrast to the complex methodologies commonly employed for distilling knowledge from a teacher to a student, the proposed method demonstrates the efficacy of a simple yet powerful approach: using refined feature maps to transfer attention. It proves effective in distilling rich information and outperforms existing methods in semantic segmentation, a dense prediction task. The proposed Attention-guided Feature Distillation (AttnFD) method employs the Convolutional Block Attention Module (CBAM), which refines feature maps by taking into account both channel-specific and spatial information content. Using only the Mean Squared Error (MSE) loss between the refined feature maps of the teacher and the student, AttnFD demonstrates outstanding performance in semantic segmentation, achieving state-of-the-art results in terms of mean Intersection over Union (mIoU) on the Pascal VOC 2012 and Cityscapes datasets. The code is available at https://github.com/AmirMansurian/AttnFD.
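The core idea, a CBAM-style refinement followed by an MSE loss between teacher and student feature maps, can be illustrated with a minimal NumPy sketch. This is not the authors' implementation (see the repository above for that). The function names, the random weights, the reduction ratio, the 7x7 spatial kernel, and the assumption that teacher and student features already share the same shape are all illustrative choices made here.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def init_params(rng, channels, reduction=4, kernel_size=7):
    """Hypothetical helper: random weights for one CBAM-style module."""
    hidden = max(channels // reduction, 1)
    w1 = rng.normal(scale=0.1, size=(hidden, channels))   # shared MLP, layer 1
    w2 = rng.normal(scale=0.1, size=(channels, hidden))   # shared MLP, layer 2
    kernel = rng.normal(scale=0.1, size=(2, kernel_size, kernel_size))
    return w1, w2, kernel


def channel_attention(feat, w1, w2):
    """feat: (C, H, W). Returns one weight in (0, 1) per channel."""
    avg = feat.mean(axis=(1, 2))
    mx = feat.max(axis=(1, 2))

    def mlp(v):
        return w2 @ np.maximum(w1 @ v, 0.0)  # linear -> ReLU -> linear

    return sigmoid(mlp(avg) + mlp(mx))


def spatial_attention(feat, kernel):
    """feat: (C, H, W). Returns one weight in (0, 1) per spatial location."""
    pooled = np.stack([feat.mean(axis=0), feat.max(axis=0)])  # (2, H, W)
    k = kernel.shape[-1]
    pad = k // 2
    padded = np.pad(pooled, ((0, 0), (pad, pad), (pad, pad)))  # zero padding
    h, w = feat.shape[1:]
    out = np.zeros((h, w))
    for i in range(h):
        for j in range(w):
            # Cross-correlation of the 2-channel pooled map with the kernel.
            out[i, j] = np.sum(padded[:, i:i + k, j:j + k] * kernel)
    return sigmoid(out)


def cbam_refine(feat, w1, w2, kernel):
    """Apply channel attention, then spatial attention, to a feature map."""
    feat = feat * channel_attention(feat, w1, w2)[:, None, None]
    return feat * spatial_attention(feat, kernel)[None, :, :]


def attention_mse(teacher_feat, student_feat, teacher_params, student_params):
    """MSE between the refined teacher and refined student feature maps."""
    t = cbam_refine(teacher_feat, *teacher_params)
    s = cbam_refine(student_feat, *student_params)
    return float(np.mean((t - s) ** 2))


# Usage with random stand-in features (assumed shape: 8 channels, 6x6 map).
rng = np.random.default_rng(0)
teacher_feat = rng.normal(size=(8, 6, 6))
student_feat = rng.normal(size=(8, 6, 6))
teacher_params = init_params(rng, channels=8)
student_params = init_params(rng, channels=8)
loss = attention_mse(teacher_feat, student_feat, teacher_params, student_params)
print("distillation loss:", loss)
```

In a real training setup the attention weights would be learned, the student features would typically need to be projected to the teacher's channel count, and this loss would be added to the usual segmentation loss; none of those details are specified in the text above.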
