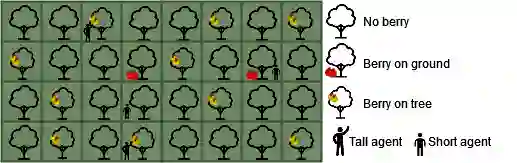

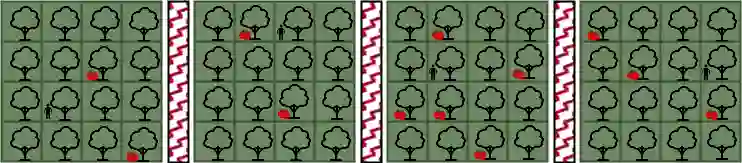

Social norms are standards of behaviour common in a society. However, when agents make decisions without considering how others are impacted, norms can emerge that lead to the subjugation of certain agents. We present RAWL-E, a method to create ethical norm-learning agents. RAWL-E agents operationalise maximin, a fairness principle from Rawlsian ethics, in their decision-making processes to promote ethical norms by balancing societal well-being with individual goals. We evaluate RAWL-E agents in simulated harvesting scenarios. We find that norms emerging in RAWL-E agent societies enhance social welfare, fairness, and robustness, and yield a higher minimum experience than norms that emerge in agent societies that do not implement Rawlsian ethics.
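To make the maximin principle concrete, the following is a minimal sketch of the general idea, not the RAWL-E implementation: among candidate actions, prefer the one whose worst-off agent fares best. The function names `maximin_choice` and `utilitarian_choice`, the action labels, and the well-being values are all hypothetical and chosen only for illustration.

```python
from typing import Dict, List


def maximin_choice(outcomes: Dict[str, List[float]]) -> str:
    """Return the action that maximises the minimum well-being across agents."""
    return max(outcomes, key=lambda action: min(outcomes[action]))


def utilitarian_choice(outcomes: Dict[str, List[float]]) -> str:
    """Return the action that maximises total well-being (for comparison)."""
    return max(outcomes, key=lambda action: sum(outcomes[action]))


# Hypothetical well-being of three agents after each candidate action.
outcomes = {
    "hoard": [9.0, 1.0, 1.0],  # highest total, but two agents are left badly off
    "share": [4.0, 3.0, 3.0],  # lower total, but a higher minimum
}

fair_action = maximin_choice(outcomes)       # compares minima: 1.0 vs 3.0
total_action = utilitarian_choice(outcomes)  # compares sums: 11.0 vs 10.0
```

In this toy example the two criteria disagree: maximising the total favours `"hoard"`, while maximin favours `"share"` because it raises the minimum experience, which is the quantity the abstract reports as higher in RAWL-E societies.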
