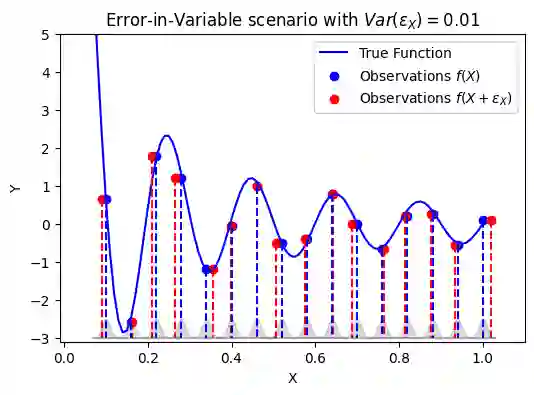

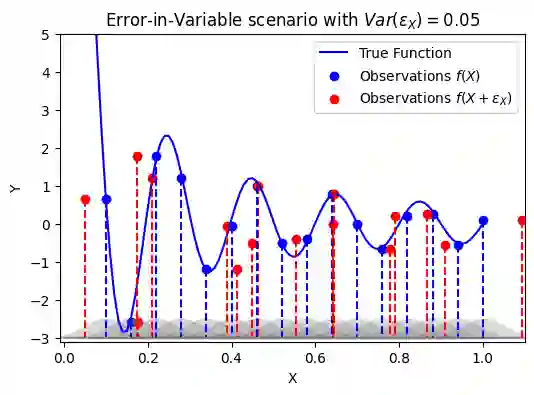

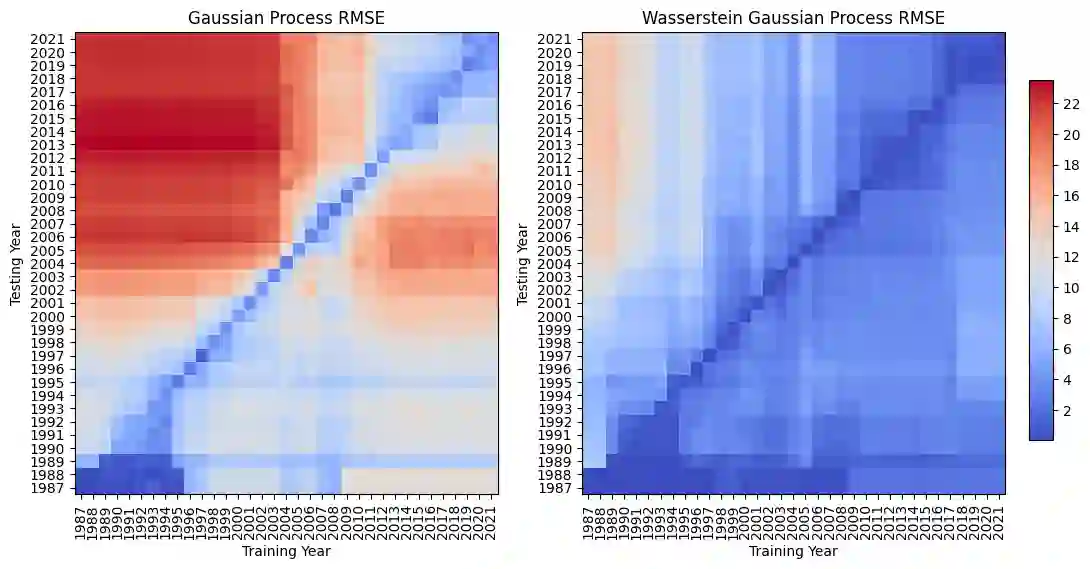

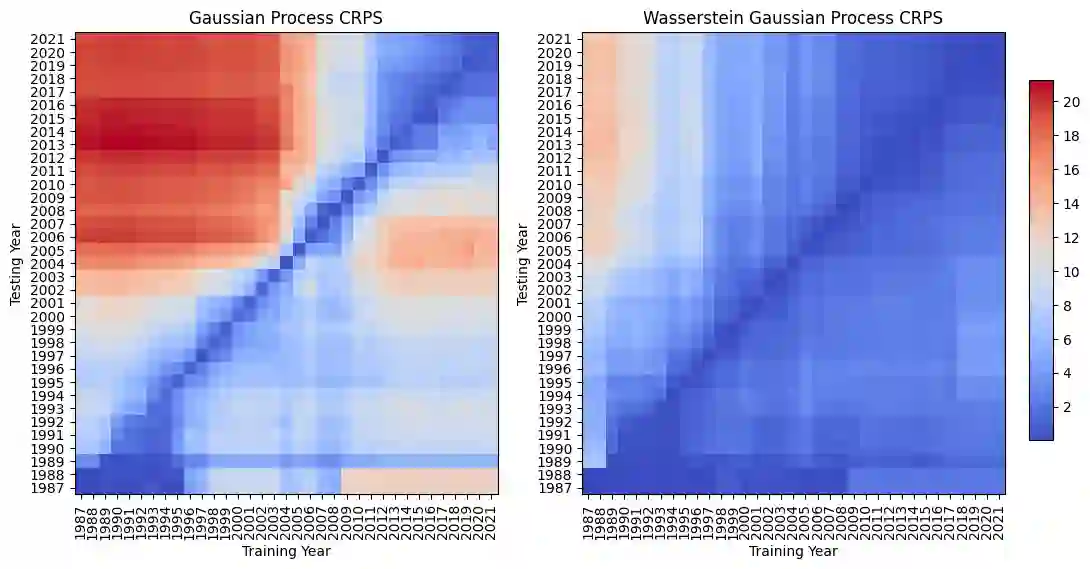

Gaussian process (GP) regression is widely used for uncertainty quantification, yet the standard formulation assumes noise-free covariates. When inputs are measured with error, the errors-in-variables (EIV) setting, ignoring that error can lead to overly narrow posterior intervals and biased decisions. We study GP regression under input measurement uncertainty by representing each noisy input as a probability measure and defining covariance through Wasserstein distances between these measures. Building on this perspective, we instantiate a deterministic projected Wasserstein ARD (PWA) kernel whose one-dimensional components admit closed-form expressions and whose product structure yields a scalable, positive-definite kernel on distributions. Unlike latent-input GP models, PWA-based GPs (PWA-GPs) handle input noise without introducing unobserved covariates or Monte Carlo projections, making uncertainty quantification more transparent and robust.
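To make the idea of a product kernel over one-dimensional Wasserstein distances concrete, here is a minimal sketch, not the paper's exact construction. It assumes each noisy input is modeled as a Gaussian measure with independent coordinates, so that the squared 2-Wasserstein distance along coordinate d has the well-known closed form (m_d - m'_d)^2 + (s_d - s'_d)^2. The function names, the squared-exponential form, and the `lengthscales` and `variance` parameters are illustrative assumptions.

```python
import numpy as np


def w2_squared_gaussian_1d(m1, s1, m2, s2):
    """Squared 2-Wasserstein distance between N(m1, s1^2) and N(m2, s2^2)."""
    return (m1 - m2) ** 2 + (s1 - s2) ** 2


def pwa_like_kernel(means_a, stds_a, means_b, stds_b, lengthscales, variance=1.0):
    """Hypothetical ARD product kernel on Gaussian input measures.

    means_a, stds_a: arrays of shape (n, d) describing n input measures.
    means_b, stds_b: arrays of shape (m, d) describing m input measures.
    lengthscales:    array of shape (d,), one length scale per coordinate.
    Returns an (n, m) covariance matrix.
    """
    means_a, stds_a = np.asarray(means_a, float), np.asarray(stds_a, float)
    means_b, stds_b = np.asarray(means_b, float), np.asarray(stds_b, float)
    lengthscales = np.asarray(lengthscales, float)

    # Per-coordinate squared W2 distances, shape (n, m, d), via broadcasting.
    w2 = w2_squared_gaussian_1d(
        means_a[:, None, :], stds_a[:, None, :],
        means_b[None, :, :], stds_b[None, :, :],
    )

    # A product of one-dimensional squared-exponential factors equals the
    # exponential of the summed, length-scale-weighted distances.
    return variance * np.exp(-(w2 / (2.0 * lengthscales ** 2)).sum(axis=-1))


# Usage: five 2-D inputs, each with its own measurement standard deviation.
rng = np.random.default_rng(0)
means = rng.normal(size=(5, 2))
stds = rng.uniform(0.1, 1.0, size=(5, 2))
K = pwa_like_kernel(means, stds, means, stds, lengthscales=[1.0, 2.0])
```

Under these assumptions, setting every standard deviation to zero recovers the ordinary squared-exponential ARD kernel on the means, so the sketch reduces to the noise-free case when inputs are measured exactly.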
