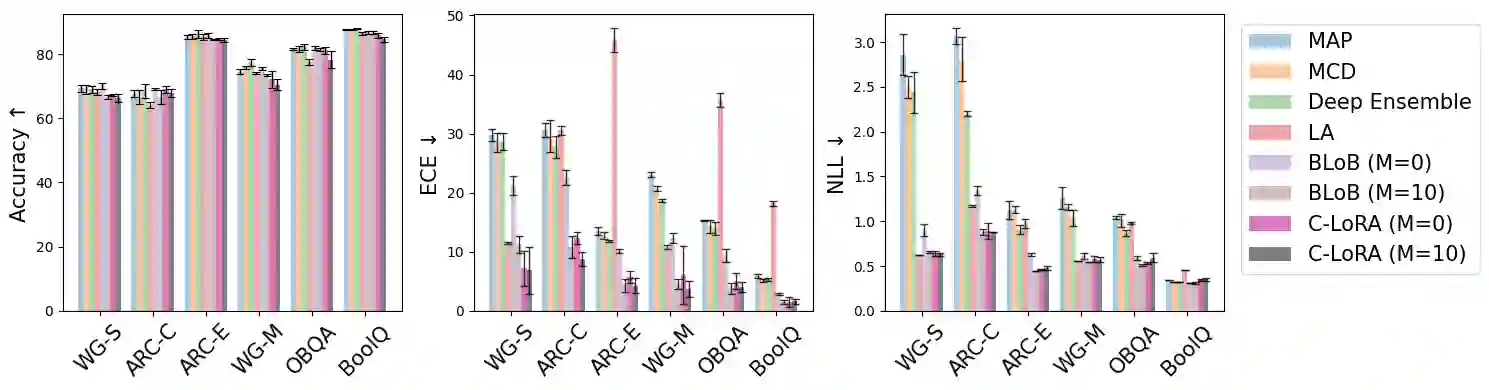

Low-Rank Adaptation (LoRA) offers a cost-effective solution for fine-tuning large language models (LLMs), but it often produces overconfident predictions in data-scarce few-shot settings. To address this issue, several classical statistical learning approaches have been repurposed for scalable uncertainty-aware LoRA fine-tuning. However, these approaches neglect how input characteristics affect the predictive uncertainty estimates. To address this limitation, we propose Contextual Low-Rank Adaptation (C-LoRA), a novel uncertainty-aware and parameter-efficient fine-tuning approach that introduces new lightweight LoRA modules contextualized to each input data sample, so that uncertainty estimates adapt dynamically to the input. By incorporating data-driven contexts into the parameter posteriors, C-LoRA mitigates overfitting, achieves well-calibrated uncertainties, and yields robust predictions. Extensive experiments on LLaMA2-7B models demonstrate that C-LoRA consistently outperforms state-of-the-art uncertainty-aware LoRA methods in both uncertainty quantification and model generalization. Ablation studies further confirm the critical role of our contextual modules in capturing sample-specific uncertainties. C-LoRA sets a new standard for robust, uncertainty-aware LLM fine-tuning in few-shot regimes. Although our experiments are limited to 7B models, our method is architecture-agnostic and, in principle, applies beyond this scale; studying its scaling to larger models remains an open problem. Our code is available at https://github.com/ahra99/c_lora.
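To make the idea of an input-contextualized LoRA module concrete, the following is a minimal sketch and not the authors' implementation (see the linked repository for that). It assumes one possible parameterization: a standard LoRA update `B A x` with a small `rank x rank` stochastic matrix `E(x)` inserted between the two factors, whose Gaussian mean and log-variance are predicted from the input by two linear heads. The class name `ContextualLoRALinear`, the heads `W_mu` and `W_logvar`, the helper `predictive_mean_and_variance`, and all initialization scales are hypothetical and chosen only for illustration.

```python
import math
import random


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def rand_matrix(rows, cols, scale, rng):
    """Matrix with i.i.d. Gaussian entries of standard deviation `scale`."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


class ContextualLoRALinear:
    """Illustrative layer: h = W0 x + B E(x) A x, with E(x) sampled per input.

    Assumption: E(x) is Gaussian with input-dependent mean and variance.
    This parameterization is a guess for illustration, not the paper's.
    """

    def __init__(self, d_in, d_out, rank, seed=0):
        rng = random.Random(seed)
        self.rank = rank
        self.W0 = rand_matrix(d_out, d_in, 0.1, rng)  # frozen pretrained weight
        self.A = rand_matrix(rank, d_in, 0.1, rng)    # LoRA down-projection
        # Standard LoRA initializes B to zero; random here so the effect is visible.
        self.B = rand_matrix(d_out, rank, 0.1, rng)   # LoRA up-projection
        # Hypothetical context heads: map x to the rank*rank entries of E(x).
        self.W_mu = rand_matrix(rank * rank, d_in, 0.1, rng)
        self.W_logvar = rand_matrix(rank * rank, d_in, 0.1, rng)

    def forward(self, x, rng=None):
        """Forward pass; pass an rng to sample E(x), or None to use its mean."""
        base = matvec(self.W0, x)
        z = matvec(self.A, x)
        mu = matvec(self.W_mu, x)
        logvar = matvec(self.W_logvar, x)
        r = self.rank
        E = []
        for i in range(r):
            row = []
            for j in range(r):
                k = i * r + j
                # Mean is the identity plus an input-dependent offset.
                mean = (1.0 if i == j else 0.0) + mu[k]
                noise = rng.gauss(0.0, 1.0) if rng is not None else 0.0
                row.append(mean + math.exp(0.5 * logvar[k]) * noise)
            E.append(row)
        delta = matvec(self.B, matvec(E, z))
        return [b + d for b, d in zip(base, delta)]


def predictive_mean_and_variance(layer, x, n_samples=50, seed=1):
    """Monte Carlo estimate of the per-output mean and variance for input x."""
    rng = random.Random(seed)
    samples = [layer.forward(x, rng) for _ in range(n_samples)]
    dim = len(samples[0])
    mean = [sum(s[i] for s in samples) / n_samples for i in range(dim)]
    var = [sum((s[i] - mean[i]) ** 2 for s in samples) / n_samples
           for i in range(dim)]
    return mean, var


layer = ContextualLoRALinear(d_in=4, d_out=3, rank=2, seed=0)
mean, var = predictive_mean_and_variance(layer, [1.0, -0.5, 0.25, 2.0])
print("mean:", mean)
print("variance:", var)
```

Because the noise scale depends on `x`, different inputs yield different predictive variances, which is the sample-specific behavior the abstract describes. Only the small `rank x rank` block is stochastic in this sketch, which keeps sampling cheap relative to treating the full LoRA factors as random.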
