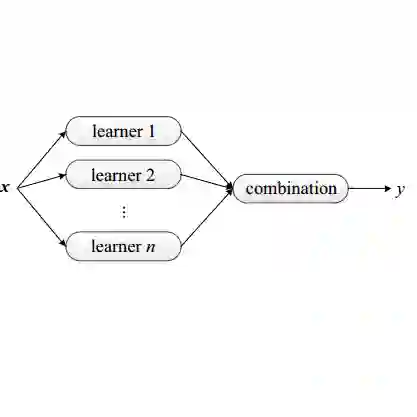

Missing data imputation is a critical challenge in domains such as healthcare and finance, where data completeness is vital for accurate analysis. Large language models (LLMs), trained on vast corpora, have shown strong potential for data generation, which makes them a promising tool for data imputation. However, two challenges persist: designing effective prompts for a fine-tuning-free process, and mitigating the risk of LLM hallucinations. To address these issues, we propose LLM-Forest, a novel framework inspired by ensemble learning (Random Forest) that builds a "forest" of few-shot learning LLM "trees" and combines their outputs through confidence-based weighted voting. The framework is built on a new concept, bipartite information graphs, which identify high-quality, relevant neighboring entries at both feature and value granularity. Extensive experiments on 9 real-world datasets demonstrate the effectiveness and efficiency of LLM-Forest.
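
The abstract does not spell out how the trees' answers are aggregated, so the following is only a minimal sketch of one plausible reading of confidence-based weighted voting. It assumes each LLM tree returns a candidate value together with a numeric confidence in [0, 1]. The function names `weighted_vote` and `weighted_mean`, the [0, 1] confidence scale, and the split into summed-confidence voting for categorical features versus a confidence-weighted mean for numerical features are illustrative assumptions, not LLM-Forest's published procedure. The LLM calls and the bipartite-graph neighbor retrieval are omitted entirely.

```python
from collections import defaultdict
from typing import Hashable, Sequence, Tuple


def _clamp(confidence: float) -> float:
    # Assumption: each tree reports a confidence in [0, 1]; clamp anything outside it.
    return max(0.0, min(1.0, confidence))


def weighted_vote(predictions: Sequence[Tuple[Hashable, float]]) -> Hashable:
    """Categorical feature: the value with the largest summed confidence wins."""
    if not predictions:
        raise ValueError("need at least one (value, confidence) pair")
    totals: dict = defaultdict(float)
    for value, confidence in predictions:
        totals[value] += _clamp(confidence)
    # Ties go to the value proposed first, since dicts preserve insertion order.
    return max(totals, key=totals.get)


def weighted_mean(predictions: Sequence[Tuple[float, float]]) -> float:
    """Numerical feature: confidence-weighted average of the trees' estimates."""
    if not predictions:
        raise ValueError("need at least one (value, confidence) pair")
    weights = [_clamp(c) for _, c in predictions]
    total = sum(weights)
    if total == 0.0:
        # Every tree reported zero confidence: fall back to an unweighted mean.
        return sum(v for v, _ in predictions) / len(predictions)
    return sum(v * w for (v, _), w in zip(predictions, weights)) / total


if __name__ == "__main__":
    # Three hypothetical trees imputing a categorical "smoker" field.
    print(weighted_vote([("yes", 0.9), ("no", 0.6), ("no", 0.5)]))  # → no
    # Three hypothetical trees imputing a numeric "age" field; the
    # zero-confidence outlier (100.0) contributes nothing.
    print(weighted_mean([(40.0, 0.75), (48.0, 0.25), (100.0, 0.0)]))  # → 42.0
```

In the categorical example, two moderately confident "no" votes (0.6 + 0.5 = 1.1) outweigh a single confident "yes" (0.9). This illustrates why a weighted ensemble can dampen one tree's hallucinated answer, though the paper's actual weighting may differ.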
