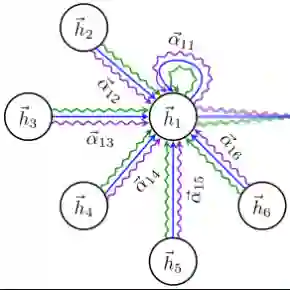

In medical image fusion, integrating information from multiple modalities is crucial for improving diagnosis and treatment planning, especially in retinal health, where important features appear differently across imaging modalities. Existing deep learning-based approaches pay insufficient attention to retinal image fusion and therefore fail to preserve enough anatomical structure and fine vessel detail. To address this, we propose the Topology-Aware Graph Attention Network (TaGAT) for multi-modal retinal image fusion, which leverages a novel Topology-Aware Encoder (TAE) with Graph Attention Networks (GAT) to enhance spatial features with the graph topology of the retinal vasculature across modalities. The TAE encodes the base and detail features, extracted from the retinal images by a Long-short Range (LSR) encoder, into a graph extracted from the retinal vessels. Within the TAE, the GAT-based Graph Information Update (GIU) block dynamically refines and aggregates the node features to generate topology-aware graph features. The updated graph features are then combined with the base and detail features and decoded into a fused image. Our model outperforms state-of-the-art methods on two retinal image fusion tasks: Fluorescein Fundus Angiography (FFA) with Color Fundus (CF), and Optical Coherence Tomography (OCT) with confocal microscopy. The source code is available at https://github.com/xintian-99/TaGAT.
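To make the idea of attention-based node feature aggregation concrete, the following is a minimal sketch of one single-head graph attention update in the generic GAT formulation. It is not the GIU block from the TaGAT repository: the function name `gat_layer`, the toy vessel graph, and the weights `W` and `a` are illustrative assumptions, and a real model would learn `W` and `a` and operate on tensors rather than Python lists.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def matvec(W, h):
    # Multiply matrix W (list of rows) by vector h.
    return [sum(w * x for w, x in zip(row, h)) for row in W]


def gat_layer(features, edges, W, a):
    """One single-head graph attention update (generic GAT sketch).

    features: list of per-node feature vectors, each of length F_in
    edges:    list of undirected (i, j) node index pairs
    W:        F_out x F_in projection matrix (hypothetical weights)
    a:        attention vector of length 2 * F_out (hypothetical weights)
    """
    n = len(features)
    # Each node attends to its neighbours and to itself (self-loop).
    neighbours = [{i} for i in range(n)]
    for i, j in edges:
        neighbours[i].add(j)
        neighbours[j].add(i)

    z = [matvec(W, h) for h in features]  # projected node features
    updated = []
    for i in range(n):
        js = sorted(neighbours[i])
        # Unnormalised score e_ij = LeakyReLU(a^T [z_i || z_j]).
        scores = [
            leaky_relu(sum(ak * v for ak, v in zip(a, z[i] + z[j])))
            for j in js
        ]
        # Numerically stable softmax over the neighbourhood of node i.
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        alphas = [e / total for e in exps]
        # Attention-weighted sum of the neighbours' projected features.
        updated.append([
            sum(alpha * z[j][k] for alpha, j in zip(alphas, js))
            for k in range(len(z[i]))
        ])
    return updated


# Toy example: three nodes along one vessel segment, 0 - 1 - 2.
node_features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
vessel_edges = [(0, 1), (1, 2)]
identity = [[1.0, 0.0], [0.0, 1.0]]
refined = gat_layer(node_features, vessel_edges, identity, [0.0, 0.0, 0.0, 0.0])
```

With an all-zero attention vector, as in the toy call, every neighbour receives equal weight, so the update reduces to averaging over each node's neighbourhood; non-zero values of `a` let a node weight some neighbours more heavily than others.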
