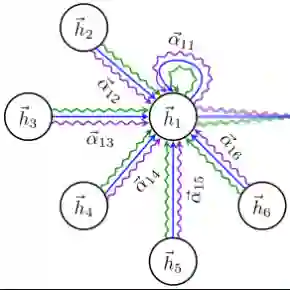

The rapid development of auditory attention decoding (AAD) based on electroencephalography (EEG) signals offers the possibility of EEG-driven target speaker extraction. However, how to effectively exploit the target-speaker information shared between EEG and speech remains an open problem. In this paper, we propose a model for brain-controlled speaker extraction, which uses the EEG recorded from the listener to extract the target speech. To extract informative representations from the EEG signals, we derive multi-scale time-frequency features and further incorporate the cortical topological structures that are selectively engaged during the task. Moreover, to exploit the non-Euclidean structure of EEG signals and capture their global features, the EEG encoder employs graph convolutional networks and a self-attention mechanism. In addition, to make full use of the fused EEG and speech features while preserving global context and capturing speech rhythm and prosody, we adopt MossFormer2, which combines MossFormer with an RNN-free recurrent module, as the separator. Experimental results on the public Cocktail Party and KUL datasets show that our TFGA-Net model significantly outperforms state-of-the-art methods on several objective evaluation metrics. The source code is available at: https://github.com/LaoDa-X/TFGA-NET.
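The abstract names three EEG-side ingredients: multi-scale time-frequency features, graph convolution over electrodes, and self-attention. The NumPy sketch below chains them into a toy EEG encoder under loudly stated assumptions. The frequency bands, window lengths, layer sizes and random weights are hypothetical, and the correlation-based adjacency is only a stand-in for the cortical topology the paper incorporates. This is not the TFGA-Net implementation (see the linked repository for that); it only illustrates how band-power node features, a normalized-adjacency GCN layer and scaled dot-product attention fit together.

```python
import numpy as np

# Hypothetical EEG frequency bands in Hz; the abstract does not say which bands TFGA-Net uses.
BANDS = [(1, 4), (4, 8), (8, 13), (13, 30)]


def multiscale_band_power(eeg, fs, win_sizes=(128, 256)):
    """Log band power per channel, averaged over non-overlapping windows of several
    lengths (the assumed 'scales') and concatenated: (n_ch, len(win_sizes) * len(BANDS))."""
    n_ch, n_t = eeg.shape
    feats = []
    for win in win_sizes:
        n_win = n_t // win
        if n_win == 0:
            raise ValueError(f"signal shorter than window {win}")
        segs = eeg[:, : n_win * win].reshape(n_ch, n_win, win)
        power = np.abs(np.fft.rfft(segs * np.hanning(win), axis=-1)) ** 2
        freqs = np.fft.rfftfreq(win, d=1.0 / fs)
        for lo, hi in BANDS:
            mask = (freqs >= lo) & (freqs < hi)
            if not mask.any():
                raise ValueError(f"window {win} too short to resolve {lo}-{hi} Hz")
            # Mean power over the band's bins and over all windows, per channel.
            feats.append(np.log(power[:, :, mask].mean(axis=(1, 2)) + 1e-8))
    return np.stack(feats, axis=1)


def normalized_adjacency(adj):
    """Symmetric GCN normalization D^{-1/2} (A + I) D^{-1/2} (Kipf & Welling style)."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def gcn_layer(h, a_norm, w):
    """One graph convolution: aggregate neighbouring electrodes, project, apply ReLU."""
    return np.maximum(a_norm @ h @ w, 0.0)


def self_attention(h, wq, wk, wv):
    """Single-head scaled dot-product attention across electrodes (global context)."""
    q, k, v = h @ wq, h @ wk, h @ wv
    scores = q @ k.T / np.sqrt(q.shape[1])
    scores -= scores.max(axis=1, keepdims=True)  # numerical stability
    attn = np.exp(scores)
    attn /= attn.sum(axis=1, keepdims=True)
    return attn @ v


def eeg_encoder(eeg, fs, rng, hidden=16):
    """Toy encoder: band-power node features -> GCN -> self-attention -> mean pooling."""
    x = multiscale_band_power(eeg, fs)
    # ASSUMPTION: adjacency = absolute inter-channel correlation, not the paper's cortical topology.
    adj = np.abs(np.corrcoef(eeg))
    np.fill_diagonal(adj, 0.0)
    a_norm = normalized_adjacency(adj)
    # Random weights stand in for learned parameters.
    w_g = rng.standard_normal((x.shape[1], hidden)) / np.sqrt(x.shape[1])
    wq, wk, wv = (rng.standard_normal((hidden, hidden)) / np.sqrt(hidden) for _ in range(3))
    h = gcn_layer(x, a_norm, w_g)
    h = h + self_attention(h, wq, wk, wv)  # residual connection
    return h.mean(axis=0)  # pooled EEG embedding, shape (hidden,)


# Usage on synthetic data: 64 channels, 4 s at 128 Hz.
rng = np.random.default_rng(0)
eeg = rng.standard_normal((64, 128 * 4))
emb = eeg_encoder(eeg, fs=128, rng=rng)
print(emb.shape)  # (16,)
```

Mean pooling is used here only to keep the output a single vector. In the full model the EEG representation is fused with speech features ahead of the MossFormer2 separator, and this sketch stops before that fusion step.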
