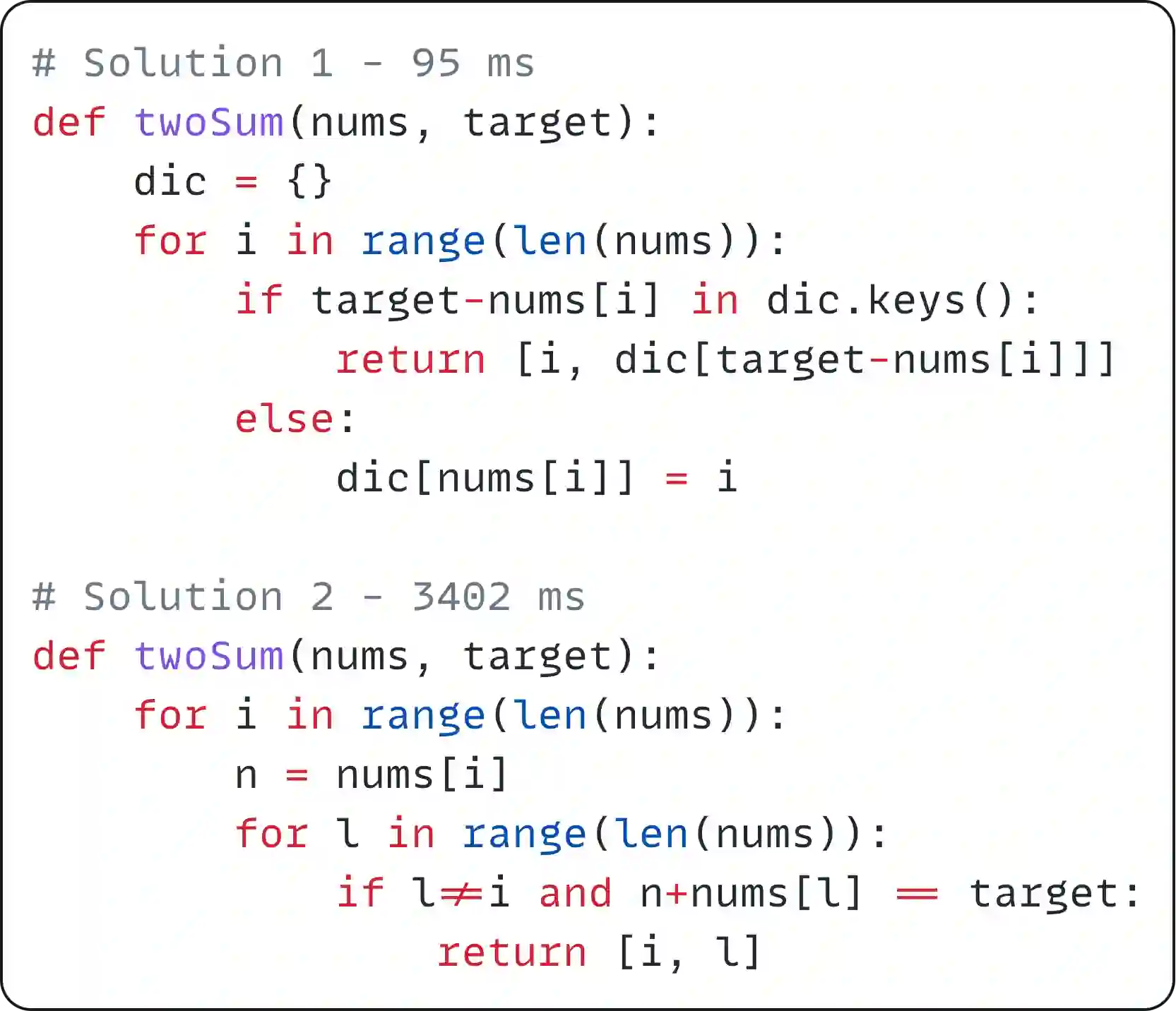

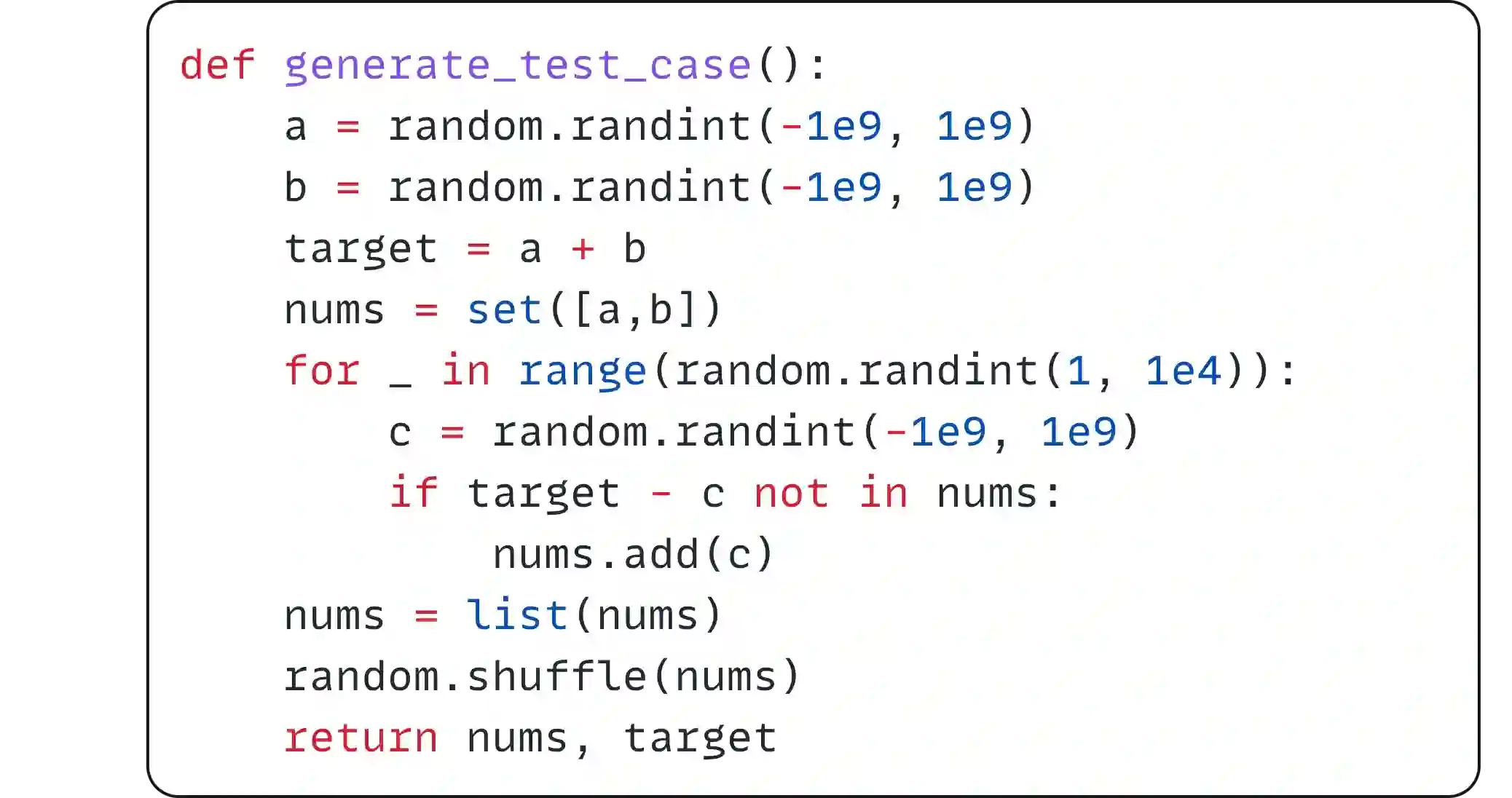

Despite advancements in evaluating Large Language Models (LLMs) for code synthesis, benchmarks have predominantly focused on functional correctness, overlooking the importance of code efficiency. We present Mercury, the first benchmark designated for assessing the code efficiency of LLM code synthesis tasks. Mercury consists of 1,889 programming tasks covering diverse difficulty levels alongside test case generators generating unlimited cases for comprehensive evaluation. Unlike existing benchmarks, Mercury integrates a novel metric Beyond@K to measure normalized code efficiency based on historical submissions, leading to a new evaluation indicator for code synthesis, which encourages generating functionally correct and computationally efficient code, mirroring the real-world software development standard. Our findings reveal that while LLMs demonstrate the remarkable capability to generate functionally correct code, there still exists a substantial gap in their efficiency output, underscoring a new frontier for LLM research and development.

翻译:尽管大语言模型在代码合成评估方面取得了进展,现有基准测试主要聚焦于功能正确性,却忽视了代码效率的重要性。我们提出水星——首个专门用于评估大语言模型代码合成任务效率的基准测试。该基准包含1,889个覆盖不同难度等级的编程任务,并配备测试用例生成器以产生无限量测试用例进行全面评估。与现有基准不同,水星创新性地引入基于历史提交记录的Beyond@K指标来量化标准化代码效率,从而构建代码合成的新型评估指标,旨在激励生成功能正确且计算高效的代码,这与现实软件开发标准高度契合。研究结果表明,尽管大语言模型展现出生成功能正确代码的卓越能力,但其在输出效率方面仍存在显著差距,这为大语言模型研究开辟了新的前沿方向。