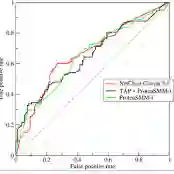

Recent vision-language models (VLMs) achieve strong zero-shot performance via large-scale image-text pretraining and have been widely adopted in medical image analysis. However, existing VLMs remain notably weak at understanding negated clinical statements, largely because contrastive alignment objectives treat negation as a minor linguistic variation rather than a meaning-inverting operator. In multi-label settings, prompt-based InfoNCE fine-tuning further reinforces easy-positive image-prompt alignments, which limits effective learning of disease absence. To overcome these limitations, we reformulate vision-language alignment as a conditional semantic comparison problem, instantiated through a bi-directional multiple-choice question learning framework (Bi-MCQ). By jointly training Image-to-Text and Text-to-Image MCQ tasks with affirmative, negative, and mixed prompts, our method casts fine-tuning as conditional semantic comparison instead of global similarity maximization. We further introduce direction-specific cross-attention fusion modules to handle the asymmetric cues required by bi-directional reasoning and to reduce alignment interference. Experiments on ChestXray14, Open-I, CheXpert, and PadChest show that Bi-MCQ improves negation understanding by up to 0.47 AUC over the zero-shot performance of the state-of-the-art CARZero model, while achieving up to a 0.08 absolute gain on positive-negative combined (PNC) evaluation. Additionally, Bi-MCQ reduces the affirmative-negative AUC gap by an average of 0.12 compared to InfoNCE-based fine-tuning, demonstrating that objective reformulation can substantially enhance negation understanding in medical VLMs.
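To make the idea of conditional semantic comparison concrete, the following is a minimal sketch of a bi-directional multiple-choice objective. It is not the authors' implementation. The function names, the toy embeddings, the equal weighting of the two directions, and the use of a plain dot product as the scoring function are all assumptions made for illustration; the abstract states that scoring is done with direction-specific cross-attention fusion modules, which are not reproduced here.

```python
import math

def softmax_cross_entropy(scores, correct_index):
    """Cross-entropy of a softmax over candidate scores, given the correct option."""
    max_score = max(scores)  # subtract the max for numerical stability
    log_sum = max_score + math.log(sum(math.exp(s - max_score) for s in scores))
    return log_sum - scores[correct_index]

def dot(u, v):
    """Assumed stand-in for the fusion-based matching score."""
    return sum(a * b for a, b in zip(u, v))

def image_to_text_mcq_loss(image_emb, option_embs, correct_index):
    """Image-to-Text: one image is the question, candidate prompts are the options."""
    scores = [dot(image_emb, t) for t in option_embs]
    return softmax_cross_entropy(scores, correct_index)

def text_to_image_mcq_loss(text_emb, image_embs, correct_index):
    """Text-to-Image: one prompt is the question, candidate images are the options."""
    scores = [dot(text_emb, v) for v in image_embs]
    return softmax_cross_entropy(scores, correct_index)

def bi_mcq_loss(i2t_items, t2i_items):
    """Sum of the mean losses of both directions (equal weighting is an assumption)."""
    i2t = sum(image_to_text_mcq_loss(*item) for item in i2t_items) / len(i2t_items)
    t2i = sum(text_to_image_mcq_loss(*item) for item in t2i_items) / len(t2i_items)
    return i2t + t2i

# Toy 2-D embeddings (hypothetical values, not real model outputs).
image_with_finding = [1.0, 0.0]
image_without_finding = [-1.0, 0.0]
prompt_affirmative = [0.9, 0.1]   # e.g. "There is pneumothorax."
prompt_negated = [-0.8, 0.2]      # e.g. "There is no pneumothorax."

# Image-to-Text item: the image shows the finding, so option 0 is correct.
i2t_item = (image_with_finding, [prompt_affirmative, prompt_negated], 0)
# Text-to-Image item: the negated prompt should select the image without the finding.
t2i_item = (prompt_negated, [image_with_finding, image_without_finding], 1)

total_loss = bi_mcq_loss([i2t_item], [t2i_item])
print(f"bi-directional MCQ loss: {total_loss:.4f}")
```

The point of the sketch is that each loss term compares an affirmative prompt against its negated counterpart for the same image (or two images for the same prompt), so the negated statement acts as a competing answer rather than as a near-duplicate of the positive prompt, as it would under a global similarity objective.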
