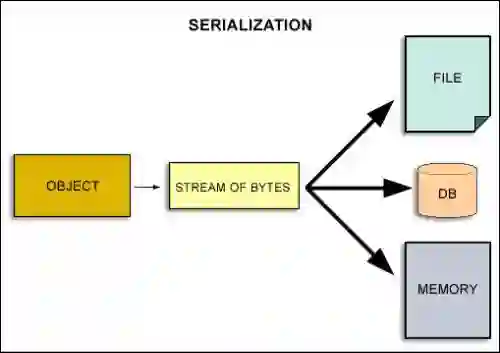

Vision-Language Models (VLMs) have demonstrated remarkable performance across a variety of real-world tasks. However, existing VLMs typically process visual information by serializing images, a method that diverges significantly from the parallel nature of human vision. Moreover, their opaque internal mechanisms hinder both deeper understanding and architectural innovation. Inspired by the dual-stream hypothesis of human vision, which distinguishes the "what" and "where" pathways, we deconstruct the visual processing in VLMs into object recognition and spatial perception and study each separately. For object recognition, we convert images into text token maps and find that the model's perception of image content unfolds as a two-stage process from shallow to deep layers, beginning with attribute recognition and culminating in semantic disambiguation. For spatial perception, we theoretically derive and empirically verify the geometric structure underlying the positional representation in VLMs. Building on these findings, we introduce two techniques: an instruction-agnostic token compression algorithm, built on a plug-and-play visual decoder, that improves decoding efficiency, and a RoPE scaling technique that enhances spatial reasoning. Through rigorous experiments, our work validates these analyses, offering a deeper understanding of VLM internals and providing clear principles for designing more capable future architectures.
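The abstract does not spell out how its RoPE scaling technique works, so the following is only a generic illustration of the background idea, not the paper's method. It is a minimal pure-Python sketch of standard rotary position embedding (RoPE), in which each pair of feature dimensions is rotated by an angle proportional to the token position. The `scale` parameter, the function names, and the toy vectors are all assumptions introduced here for illustration. The sketch shows two properties: the attention score depends only on the relative offset between positions, and multiplying positions by `scale` makes a given offset look `scale` times larger to the model.

```python
import cmath


def rope_frequencies(dim, base=10000.0):
    """Per-pair rotation frequencies theta_k = base^(-2k/dim), as in standard RoPE."""
    return [base ** (-2.0 * k / dim) for k in range(dim // 2)]


def apply_rope(vec, position, scale=1.0, base=10000.0):
    """Rotate consecutive pairs of `vec` by the angle (scale * position) * theta_k.

    `scale` is a hypothetical knob for illustration only; scale=1.0 is plain RoPE.
    """
    dim = len(vec)
    if dim % 2 != 0:
        raise ValueError("RoPE needs an even number of dimensions")
    out = []
    for k, theta in enumerate(rope_frequencies(dim, base)):
        # Treat each pair (x, y) as the complex number x + iy and rotate it.
        z = complex(vec[2 * k], vec[2 * k + 1])
        z *= cmath.exp(1j * scale * position * theta)
        out.extend([z.real, z.imag])
    return out


def dot(a, b):
    """Plain dot product, standing in for an unnormalized attention score."""
    return sum(x * y for x, y in zip(a, b))


# Toy query and key vectors (made-up values).
q = [0.3, -1.2, 0.8, 0.5]
k = [1.0, 0.4, -0.7, 0.9]

# Same relative offset (3) at different absolute positions.
score_a = dot(apply_rope(q, 5), apply_rope(k, 2))
score_b = dot(apply_rope(q, 13), apply_rope(k, 10))

# With scale=2.0, an offset of 3 behaves like an unscaled offset of 6.
score_scaled = dot(apply_rope(q, 5, scale=2.0), apply_rope(k, 2, scale=2.0))
score_offset6 = dot(apply_rope(q, 6), apply_rope(k, 0))
```

Here `score_a` and `score_b` agree up to floating-point error, as do `score_scaled` and `score_offset6`. How the paper chooses and applies its scaling for image tokens is not stated in the abstract and is not assumed here.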
