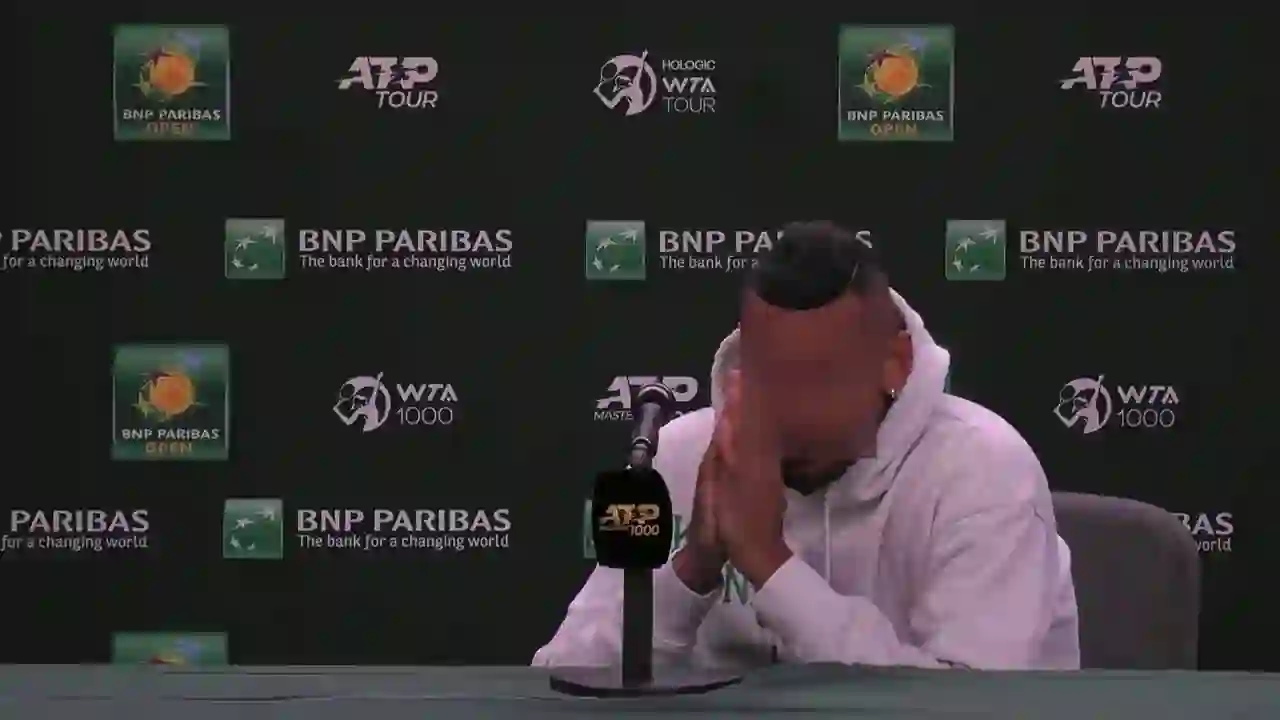

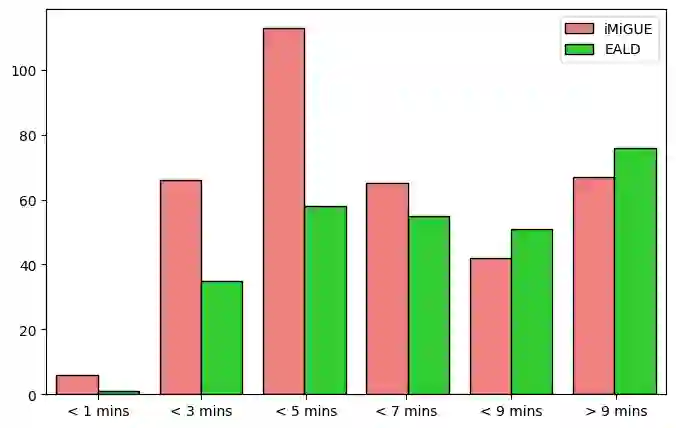

Emotion AI is the ability of computers to understand human emotional states. Existing work has made promising progress, but two limitations remain. 1) Previous studies have focused on emotion analysis in short sequential videos while overlooking long sequential videos. Emotions in short videos reflect only instantaneous states, which may be deliberately guided or hidden, whereas long sequential videos can reveal authentic emotions. 2) Previous studies commonly rely on signals such as facial expressions, speech, and even sensitive biological signals (e.g., electrocardiograms). With the growing demand for privacy, developing Emotion AI that does not rely on sensitive signals is becoming important. To address these limitations, we construct EALD, a dataset for Emotion Analysis in Long-sequential and De-identity videos, by collecting and processing athletes' post-match interview videos. In addition to annotating the overall emotional state of each video, we provide Non-Facial Body Language (NFBL) annotations for each player. NFBL is an inner-driven form of emotional expression and can serve as an identity-free cue for understanding emotional state. We also provide a simple but effective baseline for further research: we evaluate Multimodal Large Language Models (MLLMs) on emotion analysis using de-identified signals (e.g., visual, speech, and NFBLs). Our experimental results show that 1) MLLMs can match or even outperform supervised single-modal models, even in a zero-shot setting; and 2) NFBL is an important cue in long sequential emotion analysis. EALD will be made available on an open-source platform.
