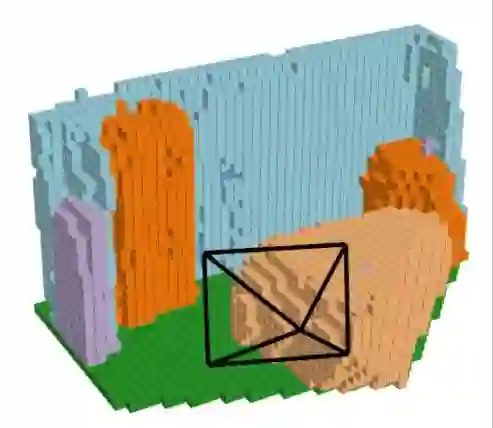

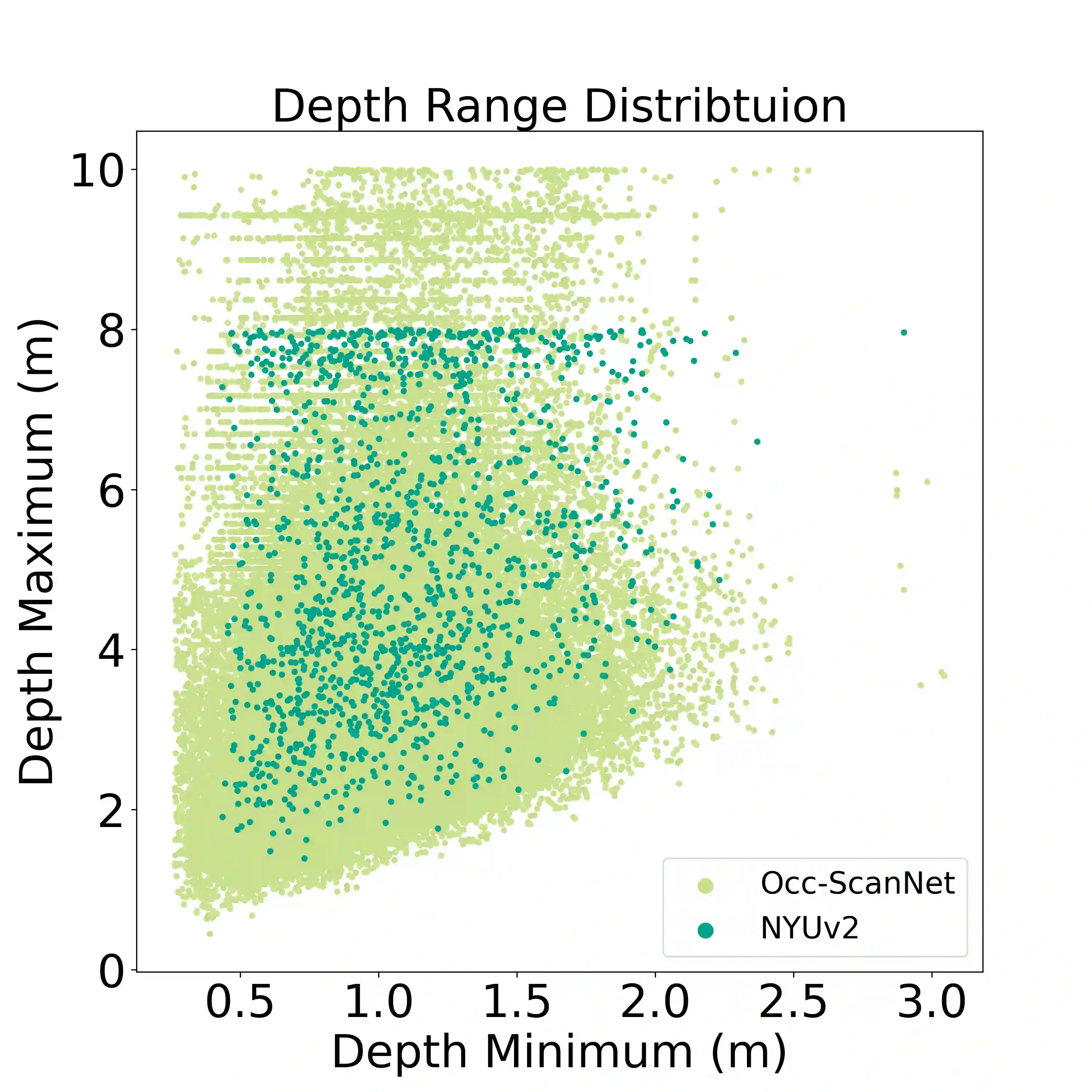

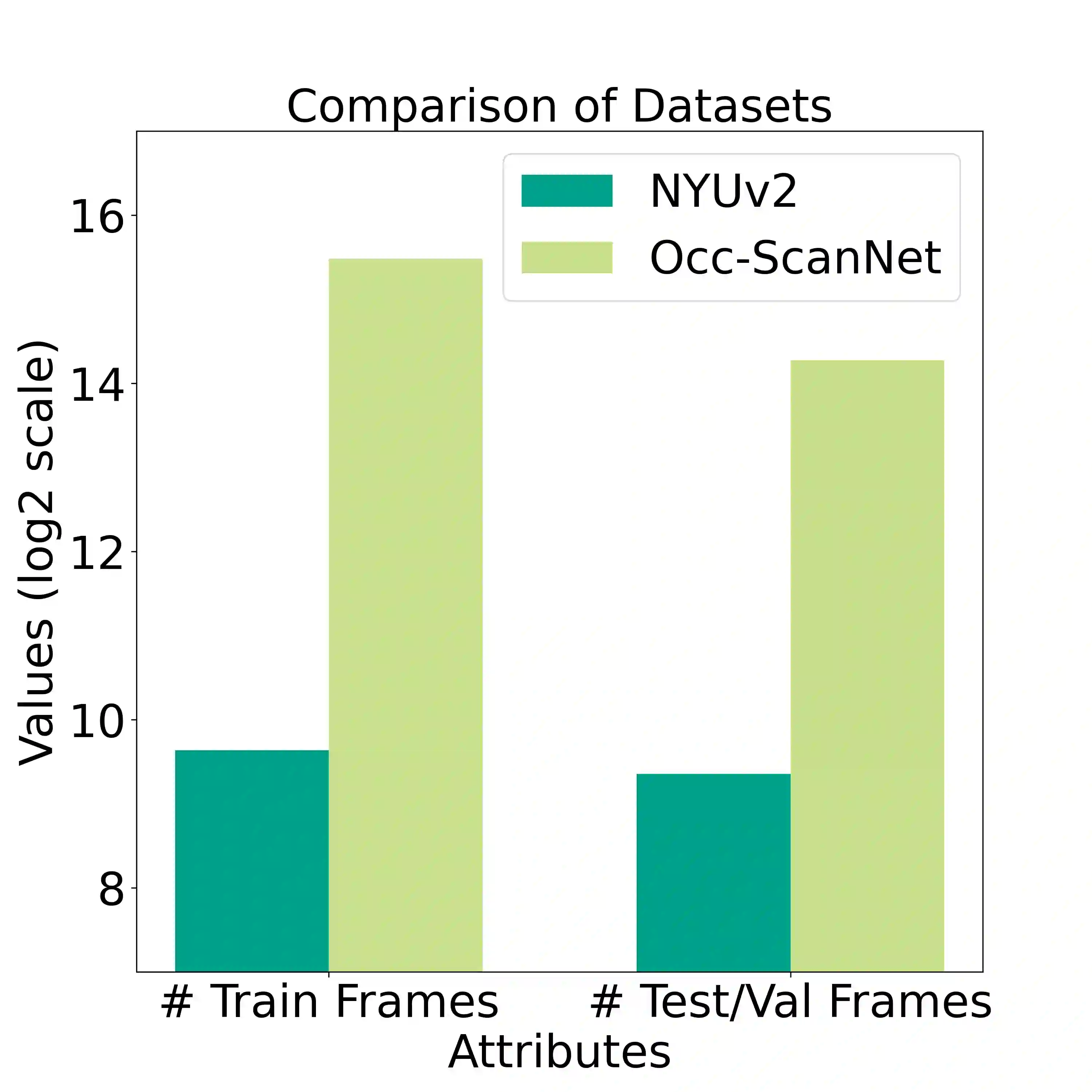

Camera-based 3D occupancy prediction has recently attracted increasing attention in outdoor driving scenes, but indoor scenes remain relatively unexplored. Indoor scenes differ chiefly in the complexity of scene scale and the variance in object size. In this paper, we propose a novel method, named ISO, for predicting indoor scene occupancy from monocular images. ISO leverages a pretrained depth model to obtain accurate depth predictions. Furthermore, we introduce the Dual Feature Line of Sight Projection (D-FLoSP) module within ISO, which enhances the learning of 3D voxel features. To foster further research in this domain, we introduce Occ-ScanNet, a large-scale occupancy benchmark for indoor scenes. With a dataset size 40 times larger than the NYUv2 dataset, it facilitates future scalable research in indoor scene analysis. Experimental results on both NYUv2 and Occ-ScanNet demonstrate that our method achieves state-of-the-art performance. The dataset and code are publicly available at https://github.com/hongxiaoy/ISO.git.
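
To make the idea of line-of-sight projection concrete, the following is a minimal sketch, not the ISO implementation. It assumes a pinhole camera, voxel centers already expressed in camera coordinates, and scalar per-pixel features. The "dual" part is an assumption made for illustration: each voxel samples both an image-feature map and a predicted depth map, and the image feature is down-weighted when the voxel lies far from the predicted surface. The names `project_voxel`, `sample_nearest`, `lift_features`, and the parameter `depth_tol` are hypothetical.

```python
def project_voxel(center, fx, fy, cx, cy):
    """Project a 3D point (x, y, z) in camera coordinates to pixel (u, v).

    Returns None for points at or behind the camera plane.
    """
    x, y, z = center
    if z <= 0:
        return None
    return fx * x / z + cx, fy * y / z + cy


def sample_nearest(grid, u, v):
    """Nearest-neighbour lookup in an H x W grid; None if (u, v) is outside."""
    height, width = len(grid), len(grid[0])
    col, row = int(round(u)), int(round(v))
    if 0 <= row < height and 0 <= col < width:
        return grid[row][col]
    return None


def lift_features(voxel_centers, image_feat, depth_map, intrinsics, depth_tol=0.25):
    """Assign each voxel a depth-weighted image feature (illustrative only).

    The weight is 1 when the voxel depth equals the predicted depth along
    its line of sight and falls linearly to 0 at a distance of depth_tol.
    This weighting scheme is an assumption, not taken from the paper.
    """
    fx, fy, cx, cy = intrinsics
    lifted = []
    for center in voxel_centers:
        uv = project_voxel(center, fx, fy, cx, cy)
        feat = sample_nearest(image_feat, *uv) if uv is not None else None
        if feat is None:
            lifted.append(0.0)  # voxel is not visible in the image
            continue
        depth = sample_nearest(depth_map, *uv)
        weight = max(0.0, 1.0 - abs(center[2] - depth) / depth_tol)
        lifted.append(feat * weight)
    return lifted
```

In this sketch, a voxel that projects outside the image or sits behind the camera receives a zero feature, and a voxel on the predicted surface keeps its sampled image feature unchanged. A real model would use learned multi-channel features, bilinear sampling, and a learned depth distribution rather than a fixed linear falloff.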
