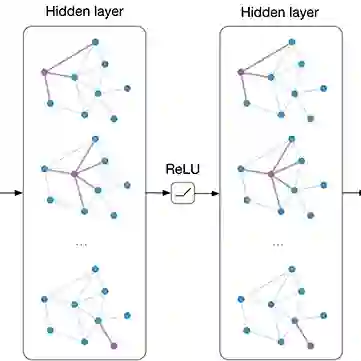

Graph Convolutional Networks (GCNs) have become a pivotal method in machine learning for modeling functions over graphs. Despite their widespread success across applications, their statistical properties (e.g., consistency, convergence rates) remain poorly characterized. To begin addressing this gap, we provide a formal analysis of the impact of convolution operators on regression tasks over homophilic networks. Focusing on estimators based solely on neighborhood aggregation, we examine how two common convolutions, the original GCN convolution and the GraphSage convolution, affect the learning error as a function of the neighborhood topology and the number of convolutional layers. We explicitly characterize the bias-variance trade-off incurred by GCNs as a function of the neighborhood size and identify specific graph topologies where convolution operators are less effective. Our theoretical findings are corroborated by synthetic experiments and provide a first step toward a deeper quantitative understanding of convolutional effects in GCNs, with the aim of offering rigorous guidelines for practitioners.
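To make the setting concrete, the following is a minimal sketch of a neighborhood-aggregation estimator, not the construction analyzed in the paper. It assumes the commonly used symmetric normalization with self-loops for the GCN operator and a row-normalized mean over each node and its neighbors as a GraphSage-style operator; the exact definitions in the paper may differ. The ring graph, the sine signal, and all function names are hypothetical choices made for illustration only.

```python
import numpy as np


def gcn_operator(A):
    """Assumed GCN operator: D^{-1/2} (A + I) D^{-1/2}, with D the degrees of A + I."""
    A_tilde = A + np.eye(A.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(A_tilde.sum(axis=1))
    return d_inv_sqrt[:, None] * A_tilde * d_inv_sqrt[None, :]


def mean_operator(A):
    """Assumed GraphSage-style operator: mean over each node and its neighbors."""
    A_tilde = A + np.eye(A.shape[0])
    return A_tilde / A_tilde.sum(axis=1, keepdims=True)


def ring_graph(n, k):
    """Adjacency matrix of a ring where each node links to k neighbors on each side."""
    A = np.zeros((n, n))
    idx = np.arange(n)
    for shift in range(1, k + 1):
        A[idx, (idx + shift) % n] = 1.0
        A[(idx + shift) % n, idx] = 1.0
    return A


def mse_by_depth(S, signal, noise_std, max_layers, n_trials, seed=0):
    """Average squared error of S^L y against the true signal, for L = 0..max_layers."""
    rng = np.random.default_rng(seed)
    errors = np.zeros(max_layers + 1)
    for _ in range(n_trials):
        # Noisy node observations: smooth signal plus i.i.d. Gaussian noise.
        est = signal + noise_std * rng.standard_normal(signal.shape[0])
        for layer in range(max_layers + 1):
            errors[layer] += np.mean((est - signal) ** 2)
            est = S @ est  # one more round of neighborhood aggregation
    return errors / n_trials


if __name__ == "__main__":
    n = 200
    A = ring_graph(n, k=2)
    # A smooth signal over the ring stands in for a homophilic regression target.
    signal = np.sin(2 * np.pi * np.arange(n) / n)
    for name, S in [("gcn", gcn_operator(A)), ("mean", mean_operator(A))]:
        errs = mse_by_depth(S, signal, noise_std=1.0, max_layers=30, n_trials=50)
        print(name, "best depth:", int(np.argmin(errs)))
```

In this toy setup, each aggregation step averages away noise (lower variance) while pulling the estimate toward a constant (higher bias), so the error as a function of depth illustrates the kind of trade-off the abstract refers to. On a regular graph such as this ring the two operators coincide, so differences between them only show up on graphs with heterogeneous degrees.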
