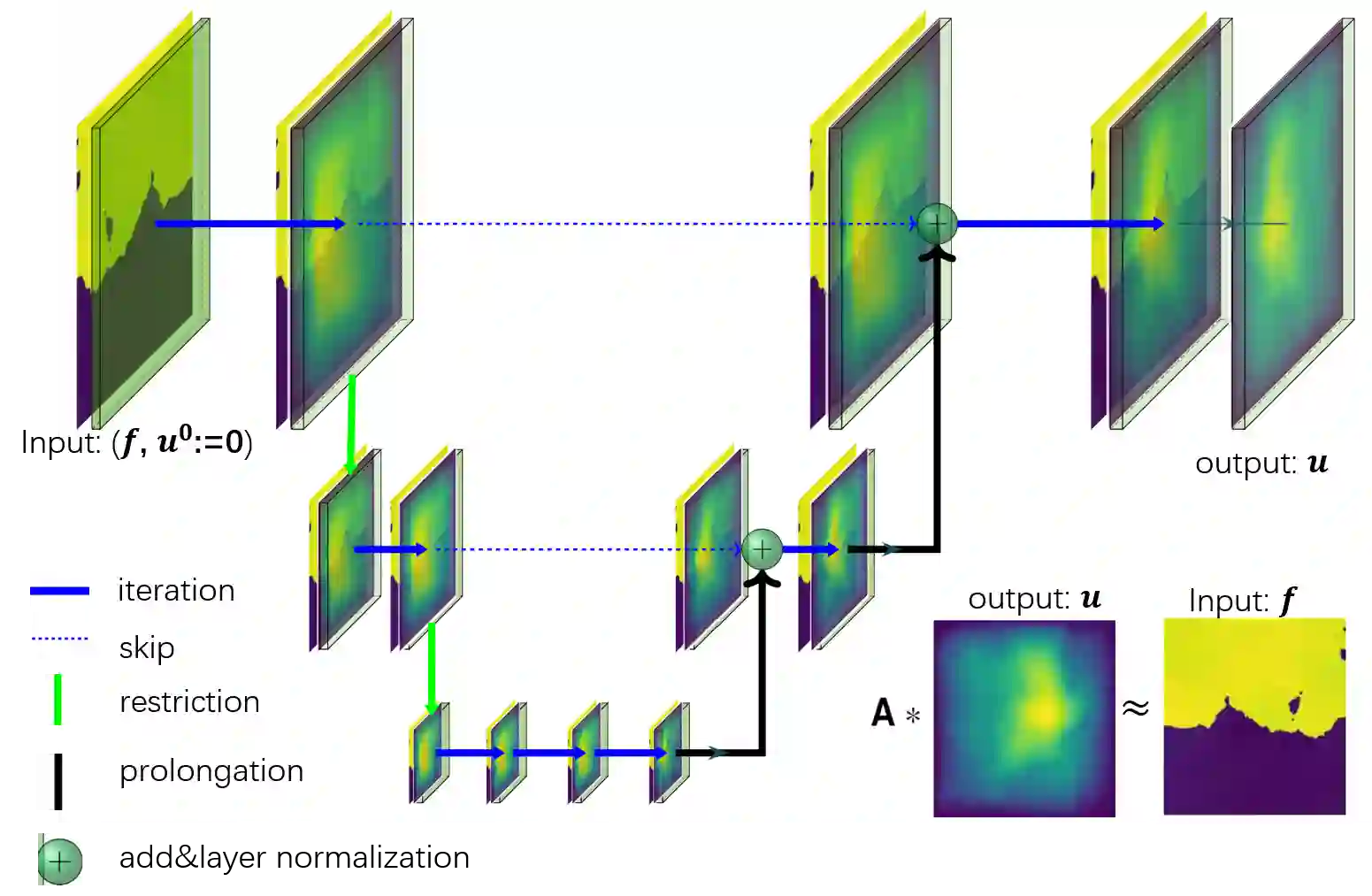

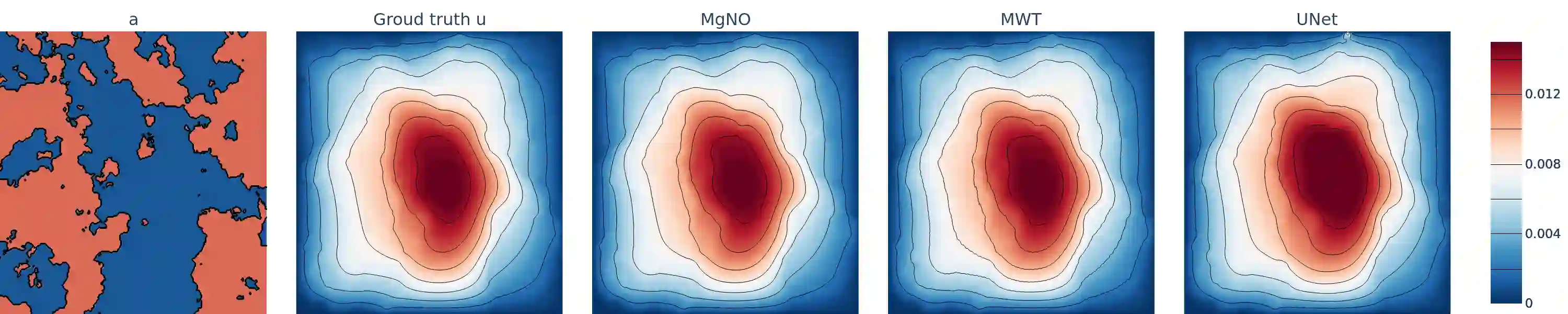

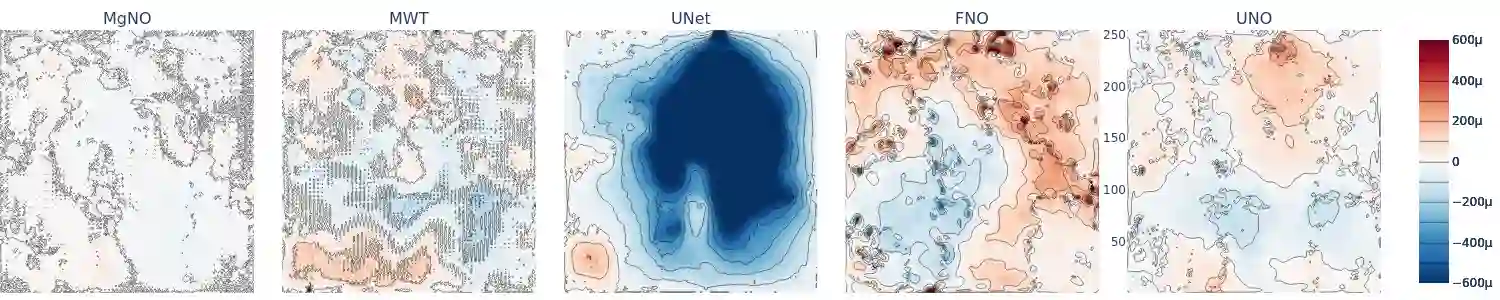

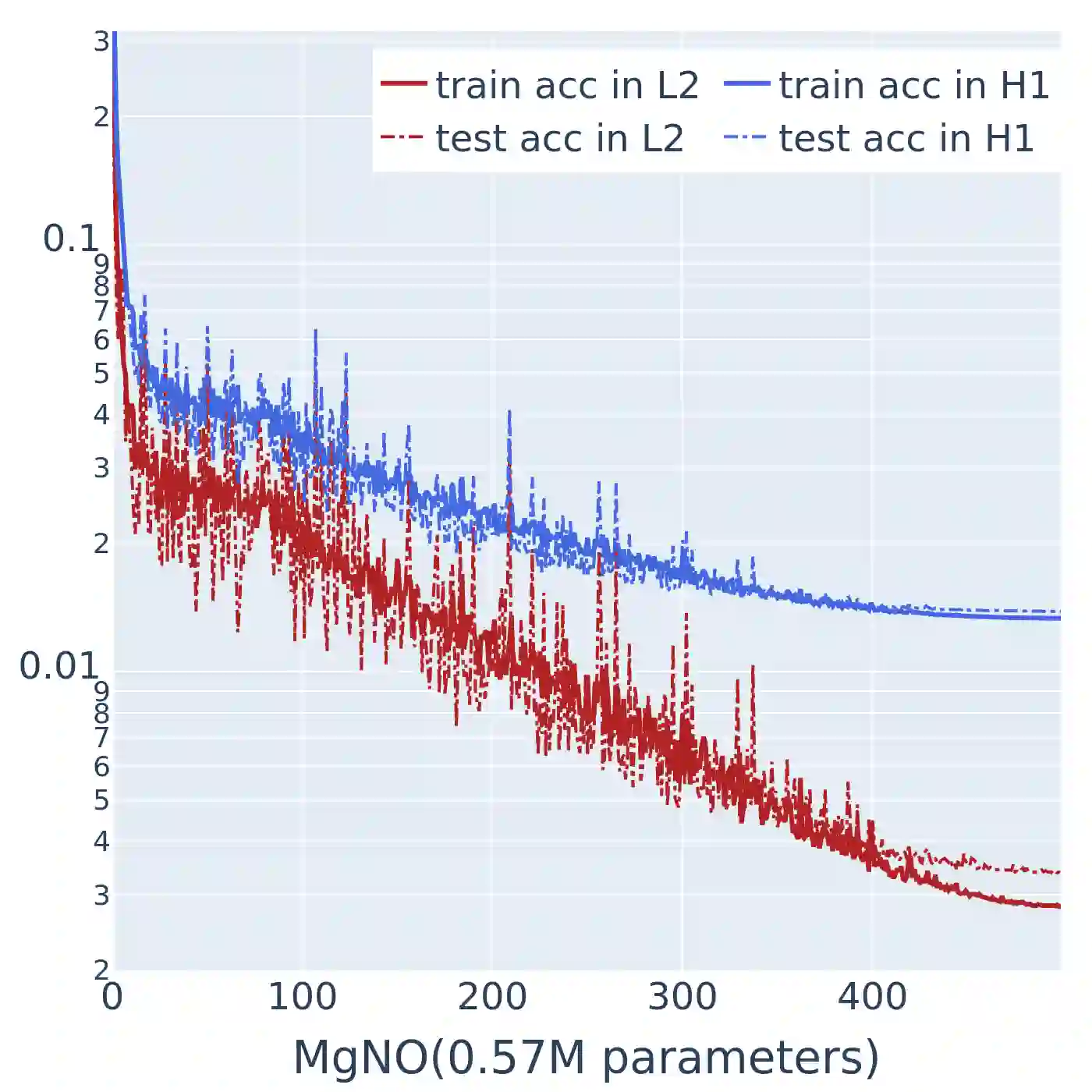

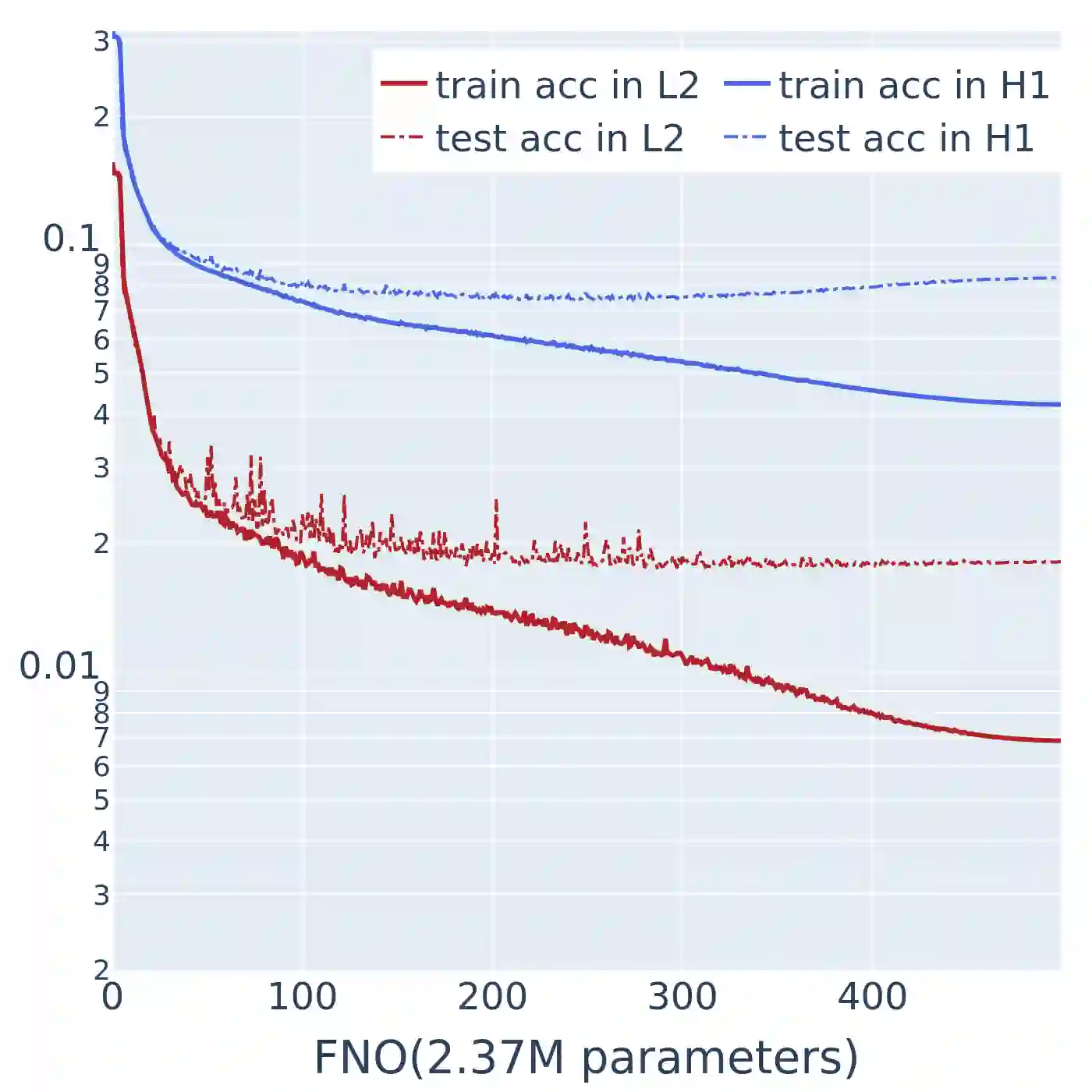

In this work, we propose a concise neural operator architecture for operator learning. Drawing an analogy with a conventional fully connected neural network, we define the neural operator as follows: the output of the $i$-th neuron in a nonlinear operator layer is defined by $O_i(u) = \sigma\left( \sum_j W_{ij} u + B_{ij}\right)$. Here, $W_{ij}$ denotes the bounded linear operator connecting the $j$-th input neuron to the $i$-th output neuron, and the bias $B_{ij}$ takes the form of a function rather than a scalar. Given the new universal approximation property of this architecture, the efficient parameterization of the bounded linear operators between two neurons (Banach spaces) plays a critical role. We therefore introduce MgNO, which uses multigrid structures to parameterize these linear operators between neurons. This approach offers both mathematical rigor and practical expressivity. Additionally, MgNO obviates the need for the lifting and projecting operators typically required in previous neural operators, and it seamlessly accommodates diverse boundary conditions. Our empirical observations reveal that MgNO is easier to train than other CNN-based models and less susceptible to overfitting than spectral-type neural operators. We demonstrate the efficiency and accuracy of our method with consistently state-of-the-art performance on different types of partial differential equations (PDEs).
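To make the layer definition $O_i(u) = \sigma\left( \sum_j W_{ij} u + B_{ij}\right)$ concrete, here is a minimal NumPy sketch of one discretized operator layer. It rests on several assumptions that are not in the abstract: each function is sampled on a uniform grid of `n_grid` points, the input is read as one function `u[j]` per input neuron, each $W_{ij}$ is stood in for by a dense `n_grid × n_grid` matrix, and $\sigma$ is `tanh`. The dense matrices are only a placeholder for a generic bounded linear operator; this sketch does not reproduce MgNO's multigrid parameterization. The name `operator_layer` and all shapes are hypothetical.

```python
import numpy as np


def operator_layer(u, W, B, sigma=np.tanh):
    """One discretized nonlinear operator layer (illustrative sketch only).

    u: (n_in, n_grid)                 one sampled function per input neuron
    W: (n_out, n_in, n_grid, n_grid)  dense stand-in for each linear operator W_ij
    B: (n_out, n_in, n_grid)          bias *functions* B_ij, not scalars
    returns: (n_out, n_grid)          one sampled function per output neuron
    """
    # Apply every W_ij to its input function: (W_ij u_j)(x_k) = sum_l W[i,j,k,l] * u[j,l]
    Wu = np.einsum("ijkl,jl->ijk", W, u)
    # Add the bias functions, sum over input neurons j, then apply sigma pointwise.
    return sigma((Wu + B).sum(axis=1))


# Small random example (all sizes are arbitrary choices for illustration).
rng = np.random.default_rng(0)
n_in, n_out, n_grid = 3, 2, 16
u = rng.standard_normal((n_in, n_grid))
W = rng.standard_normal((n_out, n_in, n_grid, n_grid)) / n_grid
B = rng.standard_normal((n_out, n_in, n_grid))
out = operator_layer(u, W, B)
print(out.shape)
```

A dense matrix per neuron pair costs `n_grid**2` parameters each, which grows quickly with resolution. That cost is the reason the abstract calls the efficient parameterization of $W_{ij}$ critical.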
