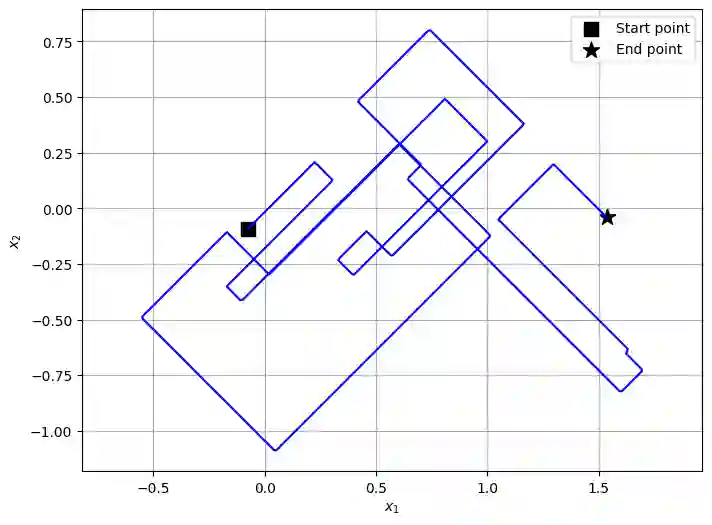

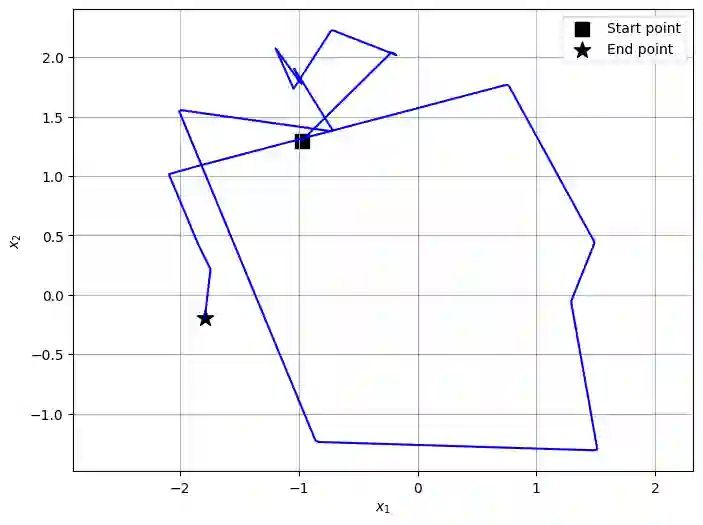

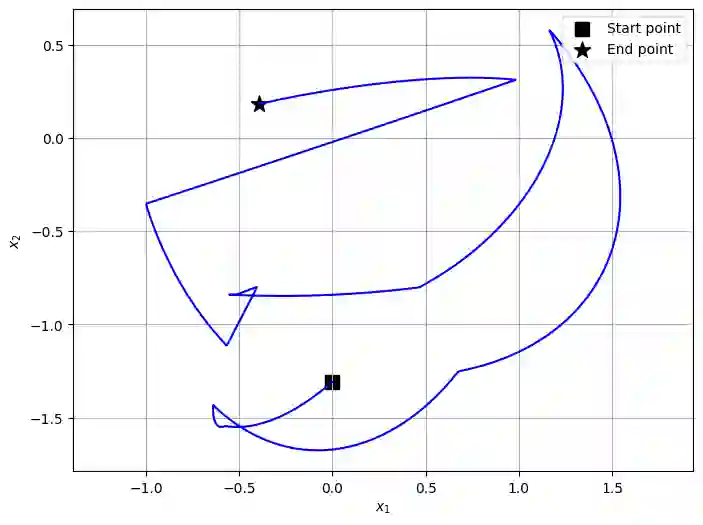

We introduce a novel class of generative models based on piecewise deterministic Markov processes (PDMPs), a family of non-diffusive stochastic processes that combine deterministic motion with random jumps at random times. As with diffusions, these Markov processes admit time reversals, and the time reversals are themselves PDMPs. We apply this observation to three PDMPs studied in the literature: the Zig-Zag process, the Bouncy Particle Sampler, and Randomised Hamiltonian Monte Carlo. For these three instances, we show that the jump rates and jump kernels of the corresponding time reversals admit explicit expressions in terms of conditional densities of the PDMP under consideration before and after a jump. Building on these results, we propose efficient training procedures to learn these quantities and consider methods to approximately simulate the reverse process. Finally, when the base distribution is the standard $d$-dimensional Gaussian, we provide bounds on the total variation distance between the data distribution and the distribution generated by our model. Promising numerical simulations support further investigation into this class of models.
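
To make "deterministic motion and random jumps at random times" concrete, here is a minimal sketch of a forward Zig-Zag process in $d$ dimensions. It assumes the standard Gaussian target $\mathcal{N}(0, I_d)$, i.e. $U(x) = \|x\|^2/2$, and the canonical Zig-Zag switching rates $\lambda_i(x, v) = \max(0, v_i \partial_i U(x)) = \max(0, v_i x_i)$ with velocities $v \in \{-1, +1\}^d$. It illustrates only the forward (noising) process; the learned reverse-time rates and kernels are not implemented, and the function names `zigzag_event_time` and `zigzag_forward` are hypothetical.

```python
import math
import random


def zigzag_event_time(x: float, v: int, rng: random.Random) -> float:
    """Exact next switching time for a 1D Zig-Zag coordinate targeting N(0, 1).

    Assumption: U(x) = x**2 / 2, so along the flow x(s) = x + v*s the rate is
    max(0, v * x(s)) = max(0, a + s) with a = v*x (because v*v = 1).
    Setting the integrated rate equal to an Exp(1) draw e and solving gives
    tau = -a + sqrt(max(a, 0)**2 + 2e).
    """
    a = v * x
    e = rng.expovariate(1.0)
    return -a + math.sqrt(max(a, 0.0) ** 2 + 2.0 * e)


def zigzag_forward(x0, v0, horizon, rng):
    """Run a Zig-Zag process targeting N(0, I) from (x0, v0) up to `horizon`.

    Assumption: for a standard Gaussian target each coordinate i flips its
    velocity at rate max(0, v_i * x_i), which depends on coordinate i only,
    so the coordinates can be simulated independently.
    Returns (final positions, final velocities, total number of jumps).
    """
    xs, vs, n_jumps = [], [], 0
    for x, v in zip(x0, v0):
        t = 0.0
        while True:
            tau = zigzag_event_time(x, v, rng)
            if t + tau >= horizon:
                # No jump before the horizon: move deterministically to it.
                x += v * (horizon - t)
                break
            x += v * tau  # deterministic linear motion until the jump
            t += tau
            v = -v        # random jump: flip this coordinate's velocity
            n_jumps += 1
        xs.append(x)
        vs.append(v)
    return xs, vs, n_jumps
```

Because the target is Gaussian, event times can be drawn exactly by inverting the integrated rate; for general targets one would typically fall back on Poisson thinning. As a sanity check, many independent runs of `zigzag_forward([3.0], [1], 50.0, rng)` should yield final positions whose empirical mean and variance are close to 0 and 1, reflecting convergence towards the Gaussian base distribution.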
