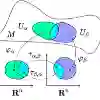

Bayesian Federated Learning (BFL) combines uncertainty modeling with decentralized training, enabling the development of personalized and reliable models under data heterogeneity and privacy constraints. Existing approaches typically rely on Markov Chain Monte Carlo (MCMC) sampling or variational inference, often incorporating personalization mechanisms to better adapt to local data distributions. In this work, we propose an information-geometric projection framework for personalization in parametric BFL. By projecting the global model onto a neighborhood of the user's local model, our method enables a tunable trade-off between global generalization and local specialization. Under mild assumptions, we show that this projection step is equivalent to computing a barycenter on the statistical manifold, allowing us to derive closed-form solutions and achieve cost-free personalization. We apply the proposed approach to a variational learning setup using the Improved Variational Online Newton (IVON) optimizer and extend its application to general aggregation schemes in BFL. Empirical evaluations under heterogeneous data distributions confirm that our method effectively balances global and local performance with minimal computational overhead.
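To make the barycenter idea concrete, the following is a minimal sketch rather than the paper's actual algorithm. It assumes diagonal Gaussian posteriors and a weighted reverse-KL objective, neither of which is specified in the abstract; the function name `reverse_kl_barycenter` and the trade-off weight `lam` are hypothetical. Under these assumptions, the minimizer of `lam * KL(q || global) + (1 - lam) * KL(q || local)` is the normalized geometric mixture of the two Gaussians, so precisions and precision-weighted means interpolate linearly and no optimization loop is needed.

```python
from typing import List, Tuple

# A diagonal Gaussian as (per-coordinate means, per-coordinate variances).
Gaussian = Tuple[List[float], List[float]]


def reverse_kl_barycenter(global_q: Gaussian, local_q: Gaussian, lam: float) -> Gaussian:
    """Closed-form minimizer of lam*KL(q||global) + (1-lam)*KL(q||local).

    Assumption (not from the abstract): both posteriors are diagonal
    Gaussians and the divergence is the reverse KL. `lam` is a hypothetical
    weight: lam=1 returns the global model, lam=0 the local model.
    """
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie in [0, 1]")
    means, variances = [], []
    for m_g, v_g, m_l, v_l in zip(global_q[0], global_q[1], local_q[0], local_q[1]):
        # Natural parameters of a Gaussian are (mean/variance, -1/(2*variance)),
        # so the geometric mixture interpolates precisions linearly.
        precision = lam / v_g + (1.0 - lam) / v_l
        # The mean is the precision-weighted average of the two means.
        mean = (lam * m_g / v_g + (1.0 - lam) * m_l / v_l) / precision
        means.append(mean)
        variances.append(1.0 / precision)
    return means, variances


# Illustrative values only: a broad global posterior and a sharper local one.
global_q = ([0.0], [1.0])
local_q = ([2.0], [0.25])
personalized = reverse_kl_barycenter(global_q, local_q, lam=0.5)
```

With these illustrative inputs the personalized mean lands closer to the local mean, because the local posterior is more confident. Choosing the opposite KL direction would instead give a moment-matching solution, so the interpolation rule above depends on the stated assumption.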
