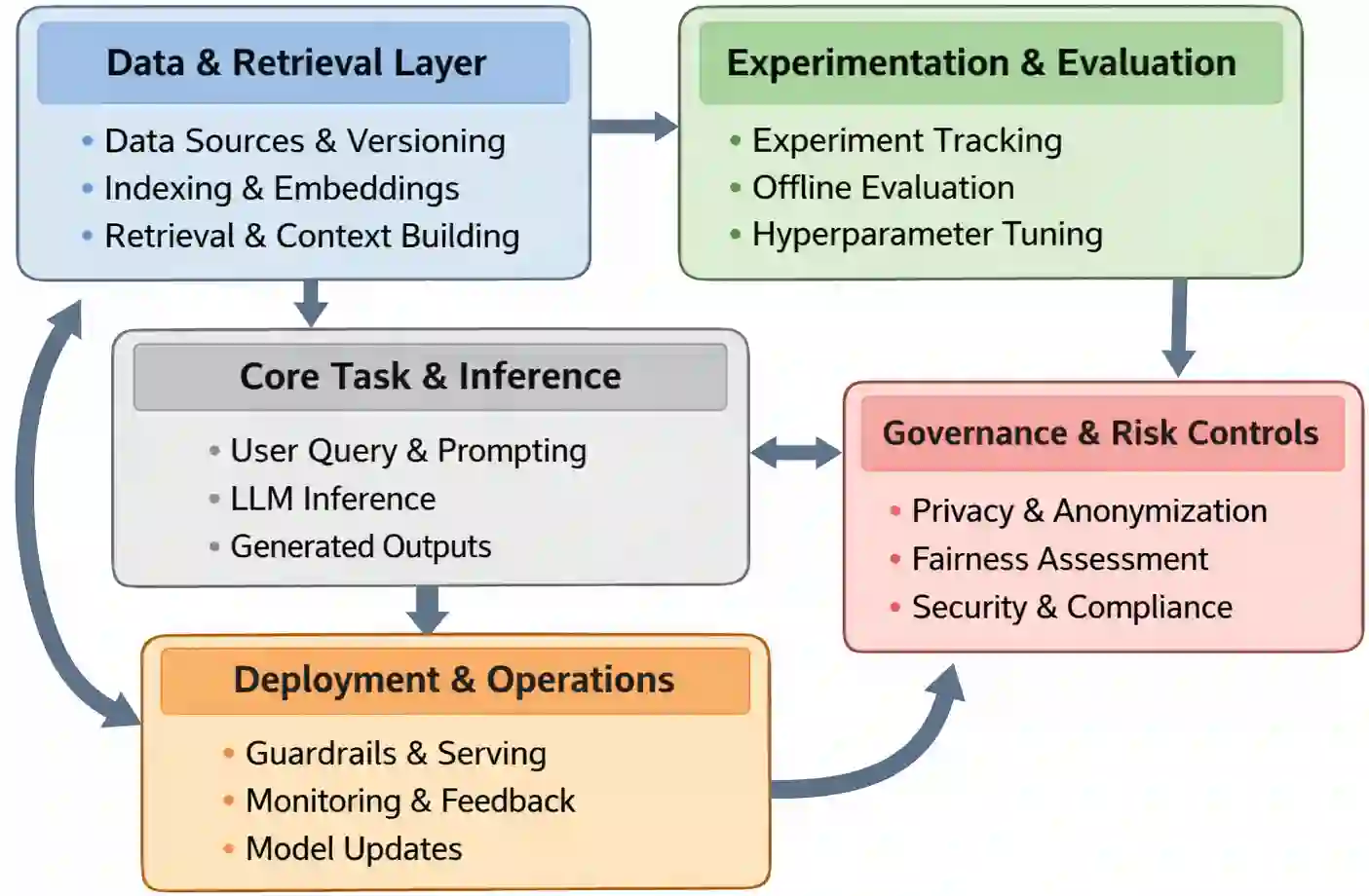

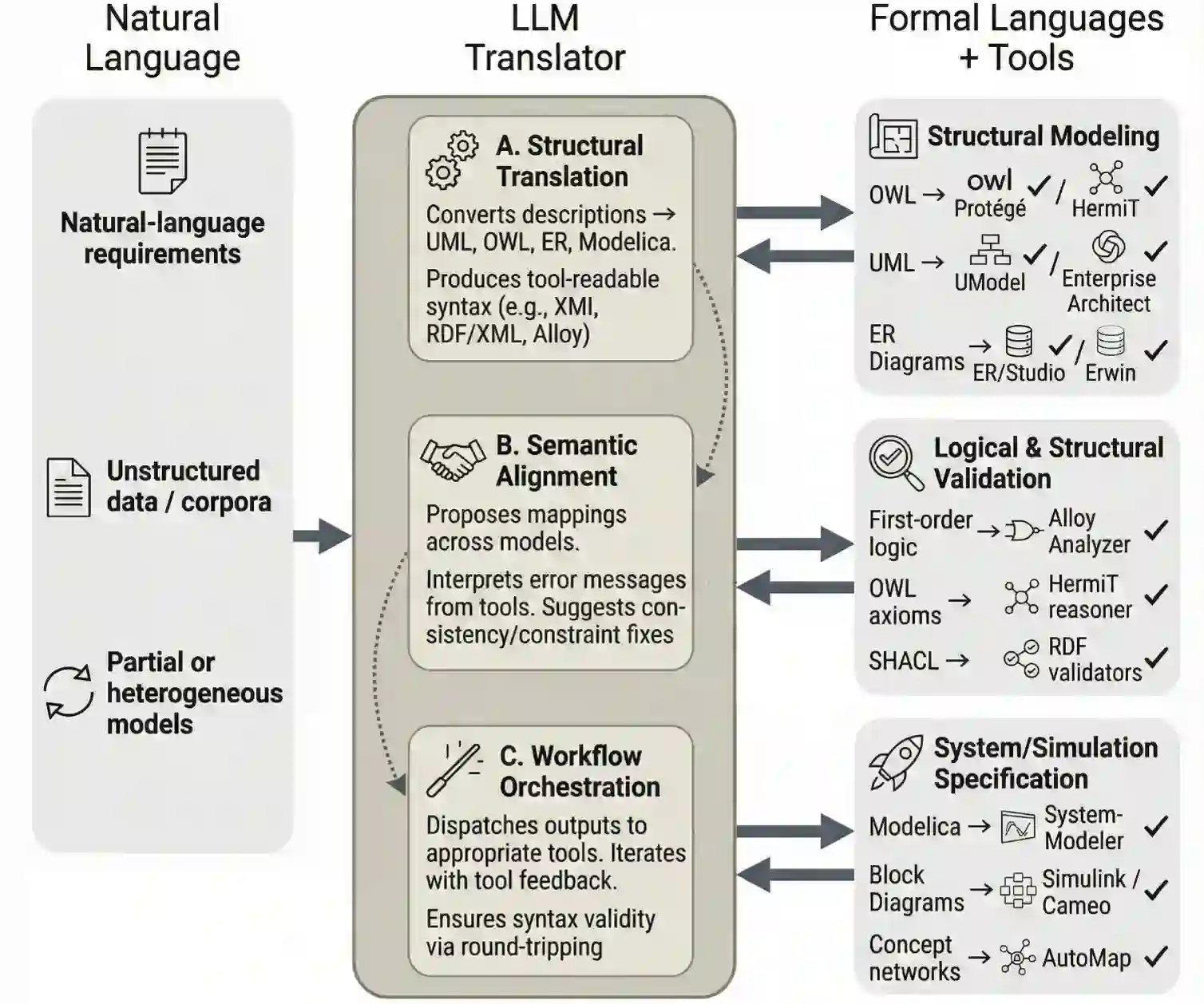

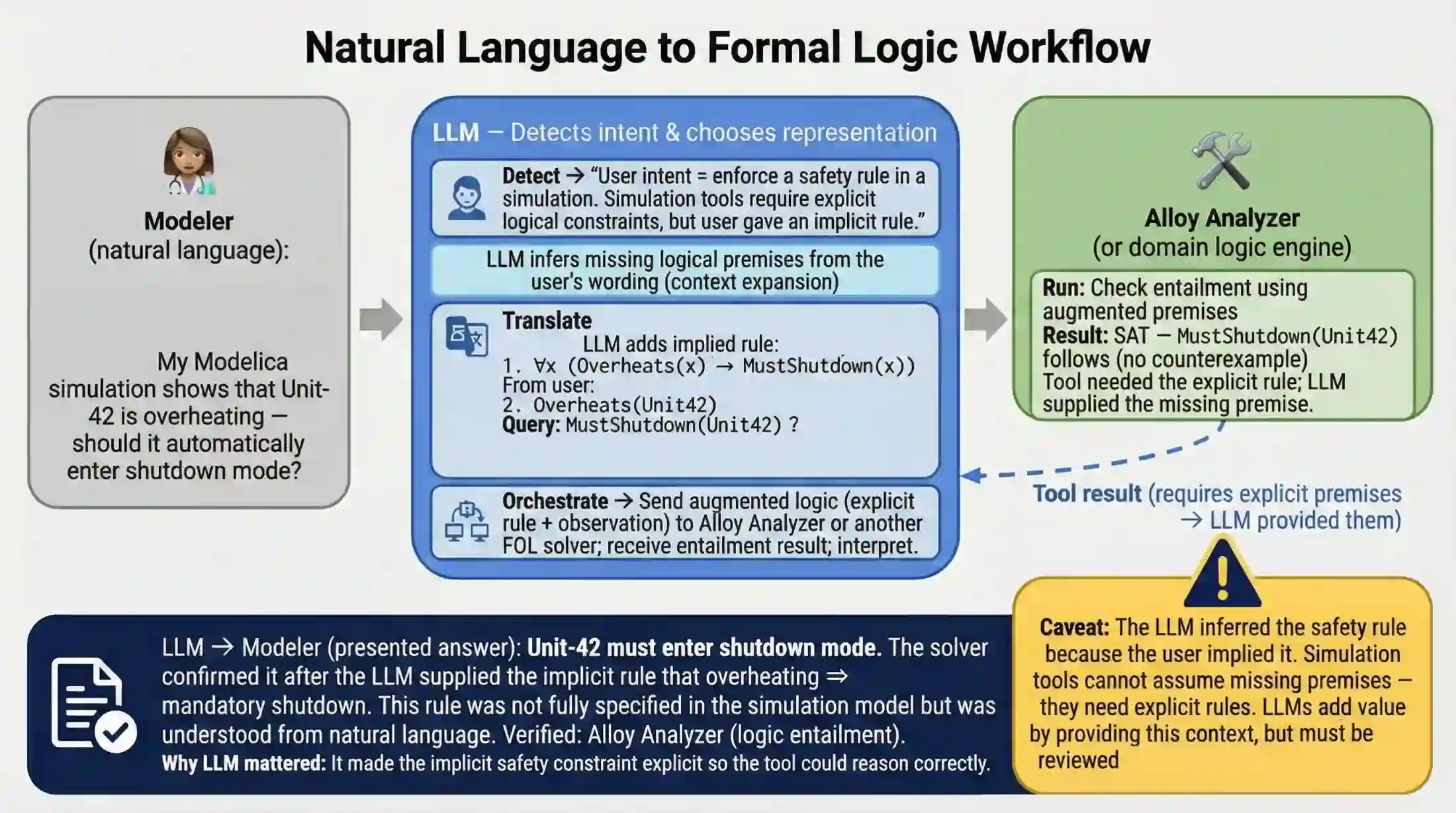

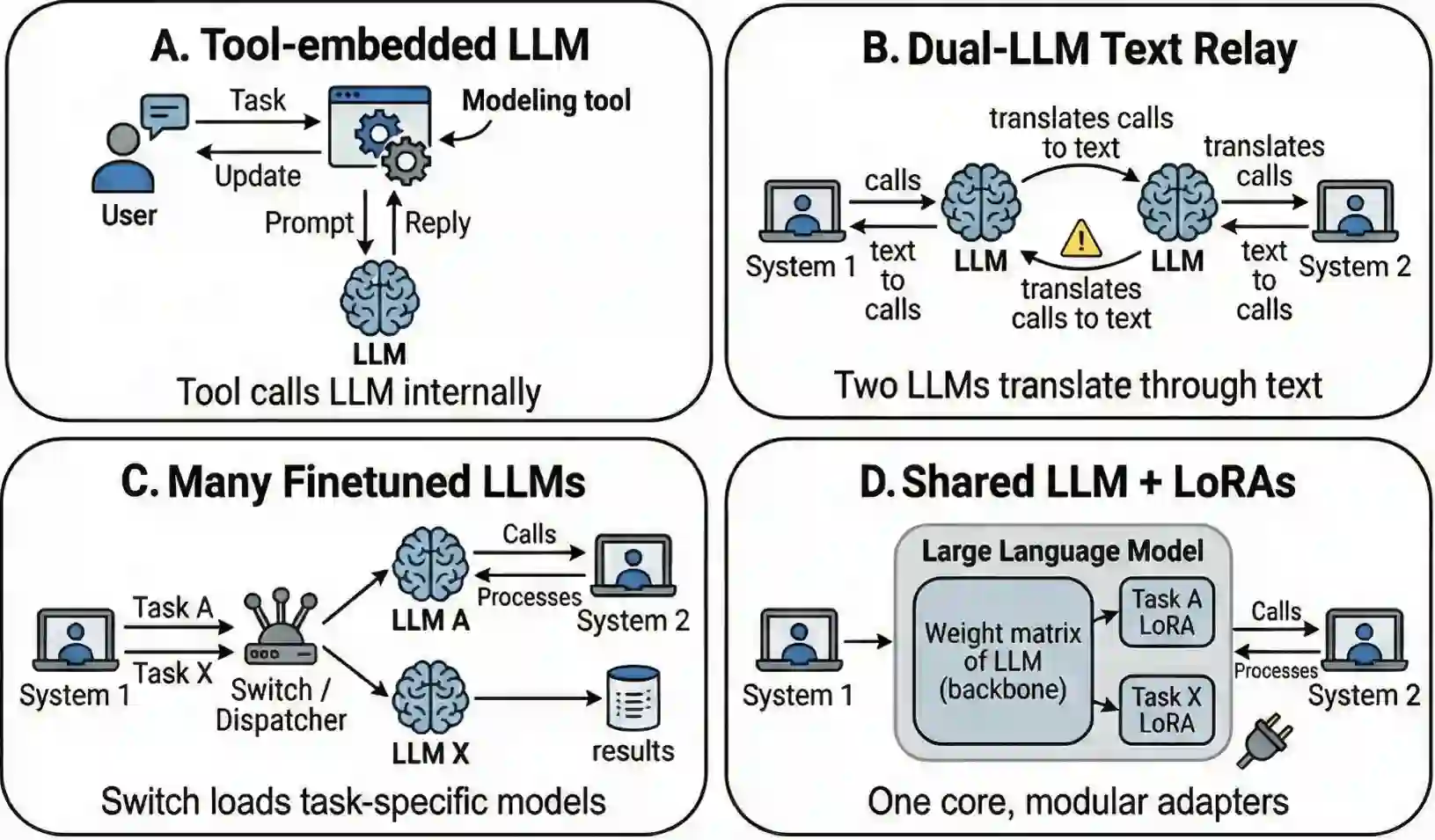

Large language models (LLMs) have rapidly become familiar tools to researchers and practitioners. Concepts such as prompting, temperature, or few-shot examples are now widely recognized, and LLMs are increasingly used in Modeling & Simulation (M&S) workflows. However, practices that appear straightforward may introduce subtle issues, unnecessary complexity, or may even lead to inferior results. Adding more data can backfire (e.g., deteriorating performance through model collapse or inadvertently wiping out existing guardrails), spending time on fine-tuning a model can be unnecessary without a prior assessment of what it already knows, setting the temperature to 0 is not sufficient to make LLMs deterministic, providing a large volume of M&S data as input can be excessive (LLMs cannot attend to everything) but naive simplifications can lose information. We aim to provide comprehensive and practical guidance on how to use LLMs, with an emphasis on M&S applications. We discuss common sources of confusion, including non-determinism, knowledge augmentation (including RAG and LoRA), decomposition of M&S data, and hyper-parameter settings. We emphasize principled design choices, diagnostic strategies, and empirical evaluation, with the goal of helping modelers make informed decisions about when, how, and whether to rely on LLMs.

翻译:大型语言模型(LLMs)已迅速成为研究人员和实践者熟悉的工具。诸如提示、温度或小样本示例等概念现已广为人知,LLMs在建模与仿真(M&S)工作流中的应用也日益增多。然而,看似直接的操作可能会引入微妙的问题、不必要的复杂性,甚至导致较差的结果。增加更多数据可能适得其反(例如,通过模型崩溃或无意中消除现有防护机制导致性能下降),在没有预先评估模型已有知识的情况下花费时间微调模型可能是不必要的,将温度设置为0不足以使LLMs具有确定性,提供大量M&S数据作为输入可能过度(LLMs无法关注所有信息)但简单的简化可能会丢失信息。本文旨在提供关于如何使用LLMs的全面且实用的指导,重点关注M&S应用。我们讨论了常见的混淆来源,包括非确定性、知识增强(包括RAG和LoRA)、M&S数据的分解以及超参数设置。我们强调原则性的设计选择、诊断策略和实证评估,旨在帮助建模者就何时、如何以及是否依赖LLMs做出明智决策。