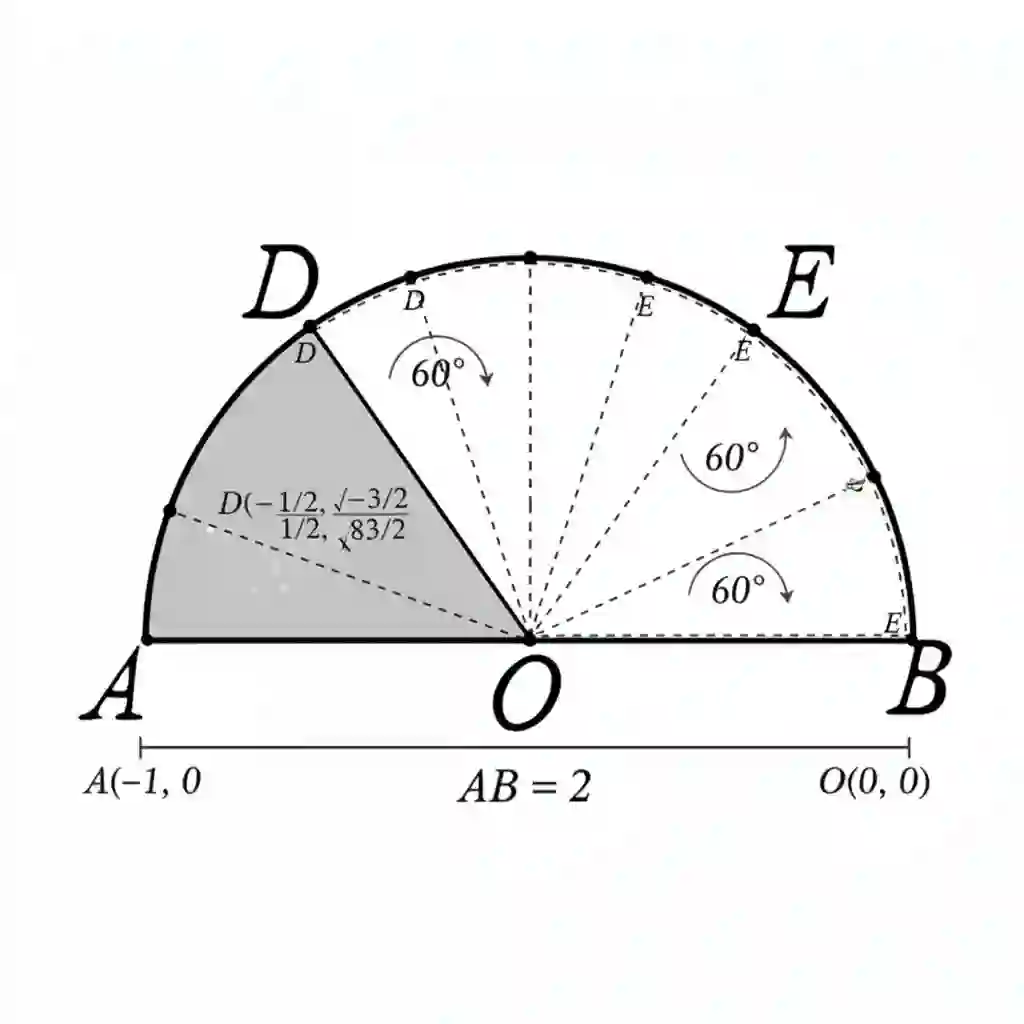

While Large Language Models (LLMs) excel at textual reasoning, they struggle in mathematical domains such as geometry that intrinsically rely on visual aids. Existing approaches to Visual Chain-of-Thought (VCoT) are often limited by rigid external tools, or fail to generate the high-fidelity, strategically timed diagrams that complex problem-solving requires. To bridge this gap, we introduce MathCanvas, a comprehensive framework designed to endow unified Large Multimodal Models (LMMs) with intrinsic VCoT capabilities for mathematics. Our approach consists of two phases. First, a Visual Manipulation stage pre-trains the model on a novel 15.2M-pair corpus, comprising 10M caption-to-diagram pairs (MathCanvas-Imagen) and 5.2M step-by-step editing trajectories (MathCanvas-Edit), so that it masters diagram generation and editing. Second, a Strategic Visual-Aided Reasoning stage fine-tunes the model on MathCanvas-Instruct, a new 219K-example dataset of interleaved visual-textual reasoning paths, teaching it when and how to leverage visual aids. To facilitate rigorous evaluation, we introduce MathCanvas-Bench, a challenging benchmark of 3K problems that require models to produce interleaved visual-textual solutions. Our model, BAGEL-Canvas, trained under this framework, achieves an 86% relative improvement over strong LMM baselines on MathCanvas-Bench and also generalizes well to other public math benchmarks. Our work provides a complete toolkit (framework, datasets, and benchmark) to unlock complex, human-like visual-aided reasoning in LMMs. Project Page: https://mathcanvas.github.io/
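As a reading aid, the two training stages and the meaning of a "relative improvement" can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Stage` class, the `stage_size` and `relative_improvement` helpers, and the example scores in the usage note are hypothetical and do not come from the MathCanvas codebase. The dataset sizes are the rounded figures quoted in the abstract.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Stage:
    """Hypothetical container for one training stage (not the authors' API)."""
    name: str
    datasets: Dict[str, int]  # dataset name -> approximate number of examples


# Rounded sizes as quoted in the abstract (10M, 5.2M, 219K).
STAGES: List[Stage] = [
    Stage(
        "Visual Manipulation",
        {"MathCanvas-Imagen": 10_000_000, "MathCanvas-Edit": 5_200_000},
    ),
    Stage(
        "Strategic Visual-Aided Reasoning",
        {"MathCanvas-Instruct": 219_000},
    ),
]


def stage_size(stage: Stage) -> int:
    """Total number of examples used in a stage."""
    return sum(stage.datasets.values())


def relative_improvement(baseline: float, new: float) -> float:
    """Relative gain of `new` over `baseline`, i.e. (new - baseline) / baseline."""
    if baseline <= 0:
        raise ValueError("baseline score must be positive")
    return (new - baseline) / baseline
```

With these definitions, `stage_size(STAGES[0])` sums the two pre-training corpora to the 15.2M pairs mentioned above. An 86% relative improvement means the new score is 1.86 times the baseline score: for a purely hypothetical baseline of 20.0 points, `relative_improvement(20.0, 37.2)` is approximately 0.86. It does not mean a gain of 86 percentage points.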
