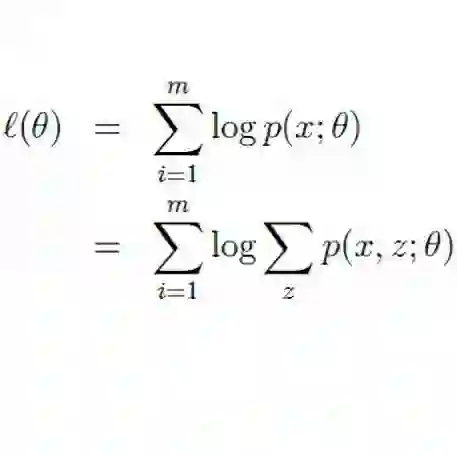

The EM (Expectation-Maximization) algorithm can be regarded as an MM (Majorization-Minimization) algorithm for maximum likelihood estimation of statistical models. Extending this view, this paper shows that, by choosing an appropriate probability distribution, even nonstatistical optimization problems can be cast as negative log-likelihood-like minimization problems, which can then be approached by an EM (or MM) algorithm. When a polynomial objective is optimized over a simple polyhedral feasible set and an exponential family distribution is employed, the EM algorithm reduces to natural gradient descent with respect to the employed distribution, with a constant step size. This is illustrated with three examples. We also establish, in a general form, global convergence for specific cases involving certain exponential family distributions. When the feasible set is not sufficiently simple, the use of MM algorithms can still be adequately described. When the objective is to minimize a convex quadratic function subject to polyhedral constraints, global convergence can also be established from existing results for an entropy-like proximal point algorithm.
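
As a minimal sketch of the EM-as-natural-gradient claim (our own toy instance, not the paper's construction), take the negative log-likelihood-like objective $f(x) = -\sum_{n=1}^{N} \log(a_n^\top x)$, i.e. minus the log of a product of linear forms, over the probability simplex, with a categorical distribution on the indices as the exponential family. Writing $L = -f$, the EM update for the weights and a natural-gradient descent step on $f$ under the categorical Fisher metric (inverse $\operatorname{diag}(x) - x x^\top$) coincide when the step size is the constant $1/N$:

$$
x_i^{+} = \frac{1}{N}\sum_{n=1}^{N}\frac{a_{ni}\,x_i}{a_n^\top x} = \frac{x_i\,\partial_i L(x)}{N}
= x_i + \frac{1}{N}\,x_i\Big(\partial_i L(x) - \sum_j x_j\,\partial_j L(x)\Big),
$$

since $\sum_j x_j\,\partial_j L(x) = N$. The data matrix `A`, the helper names `em_step` and `natural_gradient_step`, and the random toy data are hypothetical.

```python
import math
import random


def neg_log_lik(A, x):
    """Objective to minimize: -sum_n log(a_n . x) for weights x on the simplex."""
    return -sum(math.log(sum(a * xi for a, xi in zip(row, x))) for row in A)


def em_step(A, x):
    """One EM update for the mixture weights x (hypothetical toy problem).

    E-step: responsibilities r[n][i] = A[n][i] * x[i] / (a_n . x).
    M-step: x[i] <- (1/N) * sum_n r[n][i], which stays on the simplex.
    """
    N = len(A)
    new_x = [0.0] * len(x)
    for row in A:
        denom = sum(a * xi for a, xi in zip(row, x))
        for i, (a, xi) in enumerate(zip(row, x)):
            new_x[i] += a * xi / denom / N
    return new_x


def natural_gradient_step(A, x):
    """Step of size 1/N along the natural gradient of L(x) = sum_n log(a_n . x),
    using the Fisher metric of a categorical distribution with probabilities x."""
    N = len(A)
    grad = [0.0] * len(x)
    for row in A:
        denom = sum(a * xi for a, xi in zip(row, x))
        for i, a in enumerate(row):
            grad[i] += a / denom
    s = sum(xi * g for xi, g in zip(x, grad))  # equals N up to rounding
    # Natural gradient on the simplex: (diag(x) - x x^T) grad, i.e. x_i * (g_i - s).
    return [xi + xi * (g - s) / N for xi, g in zip(x, grad)]


# Hypothetical toy data: N = 20 rows, 3 weights, entries bounded away from zero.
random.seed(0)
A = [[random.uniform(0.1, 1.0) for _ in range(3)] for _ in range(20)]
x = [1 / 3, 1 / 3, 1 / 3]
history = [neg_log_lik(A, x)]
max_gap = 0.0  # largest difference seen between the EM and natural-gradient iterates
for _ in range(50):
    x_em = em_step(A, x)
    x_ng = natural_gradient_step(A, x)
    max_gap = max(max_gap, max(abs(p - q) for p, q in zip(x_em, x_ng)))
    x = x_em
    history.append(neg_log_lik(A, x))

print("weights:", [round(xi, 4) for xi in x])
print("objective:", history[0], "->", history[-1])
print("max |EM - natural gradient| per step:", max_gap)
```

Running it should show weights that still sum to one, an objective that never increases (the usual EM/MM monotonicity), and an EM versus natural-gradient gap at rounding level. This only checks the simplest case we could verify directly; for general polynomial objectives, the choice of exponential family and the constant step size would come from the paper's construction, which this sketch does not reproduce.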
