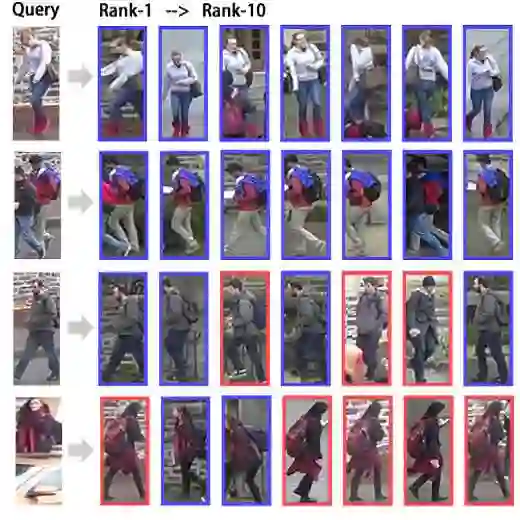

Personalized retrieval and segmentation aim to locate specific instances within a dataset based on an input image and a short description of the reference instance. While supervised methods are effective, they require extensive labeled data for training. Recently, self-supervised foundation models have been introduced to these tasks, showing results comparable to those of supervised methods. However, these models have a significant flaw: they struggle to locate the desired instance when other instances of the same class are present. In this paper, we explore text-to-image diffusion models for these tasks. Specifically, we propose a novel approach called PDM (Personalized Features Diffusion Matching), which leverages intermediate features of pre-trained text-to-image models for personalization tasks without any additional training. PDM demonstrates superior performance on popular retrieval and segmentation benchmarks, outperforming even supervised methods. We also highlight notable shortcomings in current instance and segmentation datasets and propose new benchmarks for these tasks.
