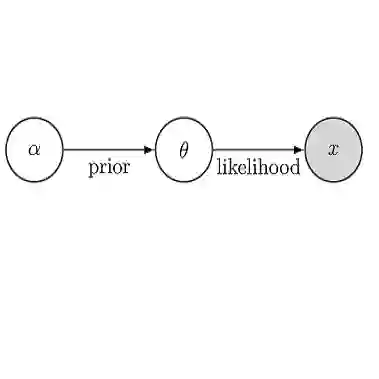

In applied Bayesian inference scenarios, users may have access to a large number of pre-existing model evaluations, for example from maximum-a-posteriori (MAP) optimization runs. However, traditional approximate inference techniques make little to no use of this available information. We propose the framework of post-process Bayesian inference as a means to obtain a quick posterior approximation from existing target density evaluations, with no further model calls. Within this framework, we introduce Variational Sparse Bayesian Quadrature (VSBQ), a method for post-process approximate inference for models with black-box and potentially noisy likelihoods. VSBQ reuses existing target density evaluations to build a sparse Gaussian process (GP) surrogate model of the log posterior density function. Subsequently, we leverage sparse-GP Bayesian quadrature combined with variational inference to achieve fast approximate posterior inference over the surrogate. We validate our method on challenging synthetic scenarios and real-world applications from computational neuroscience. The experiments show that VSBQ builds high-quality posterior approximations by post-processing existing optimization traces, with no further model evaluations.
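To make the pipeline concrete, here is a minimal 1-D sketch of the post-process idea. It is not the authors' implementation, and it swaps in simpler pieces throughout: an exact GP instead of a sparse one, fixed kernel hyperparameters, a single Gaussian variational family, and a grid search instead of gradient-based optimization. All names (`fit_surrogate`, `surrogate_elbo`, `trace_x`, and so on) are hypothetical, and the toy target is an unnormalized Gaussian log density chosen so the result can be checked against the exact answer. The surrogate is a quadratic mean function plus a GP on the residuals. The Bayesian quadrature step uses the closed-form integral of a squared-exponential kernel against a Gaussian.

```python
import numpy as np

def rbf_kernel(a, b, ell, sf2):
    """Squared-exponential kernel matrix between 1-D input arrays a and b."""
    diff = a[:, None] - b[None, :]
    return sf2 * np.exp(-0.5 * diff**2 / ell**2)

def fit_surrogate(x, y, ell, sf2, noise_var):
    """Fit a quadratic mean by least squares, then a GP to the residuals."""
    basis = np.stack([x**2, x, np.ones_like(x)], axis=1)
    coef, *_ = np.linalg.lstsq(basis, y, rcond=None)
    resid = y - basis @ coef
    K = rbf_kernel(x, x, ell, sf2) + noise_var * np.eye(len(x))
    alpha = np.linalg.solve(K, resid)  # GP weights for the posterior mean
    return coef, alpha

def surrogate_elbo(m, s, x, coef, alpha, ell, sf2):
    """ELBO of q = N(m, s^2) against the surrogate mean, in closed form."""
    a, b, c = coef
    # Expectation of the quadratic mean function under q.
    expected_mean_fn = a * (m**2 + s**2) + b * m + c
    # Bayesian quadrature: E_q[k(x, x_i)] for an RBF kernel and Gaussian q.
    v = ell**2 + s**2
    kernel_means = sf2 * np.sqrt(ell**2 / v) * np.exp(-0.5 * (m - x) ** 2 / v)
    expected_log_density = expected_mean_fn + kernel_means @ alpha
    entropy = 0.5 * np.log(2.0 * np.pi * np.e * s**2)
    return expected_log_density + entropy

# Stand-in for pre-existing evaluations (e.g. from MAP optimization runs):
# unnormalized log density of N(1, 0.5^2). No further target calls are made.
trace_x = np.linspace(-1.5, 3.5, 25)
trace_y = -0.5 * ((trace_x - 1.0) / 0.5) ** 2

# Assumed, fixed hyperparameters (a real method would tune these).
ELL, SF2, NOISE_VAR = 1.0, 1.0, 1e-4
coef, alpha = fit_surrogate(trace_x, trace_y, ELL, SF2, NOISE_VAR)

# Maximize the surrogate ELBO over a coarse grid of (mean, std) values.
m_grid = np.linspace(-1.0, 3.0, 81)
s_grid = np.linspace(0.1, 2.0, 39)
scores = np.array([
    [surrogate_elbo(m, s, trace_x, coef, alpha, ELL, SF2) for s in s_grid]
    for m in m_grid
])
i, j = np.unravel_index(np.argmax(scores), scores.shape)
m_opt, s_opt = m_grid[i], s_grid[j]
print(f"q(x) = N({m_opt:.2f}, {s_opt:.2f}^2)")
```

On this toy problem the fitted approximation should land close to the true mean 1 and standard deviation 0.5, using only the stored evaluations. The quadratic mean function keeps the surrogate from reverting to a constant away from the data, which would otherwise let the ELBO grow without bound as the variational distribution widens.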
