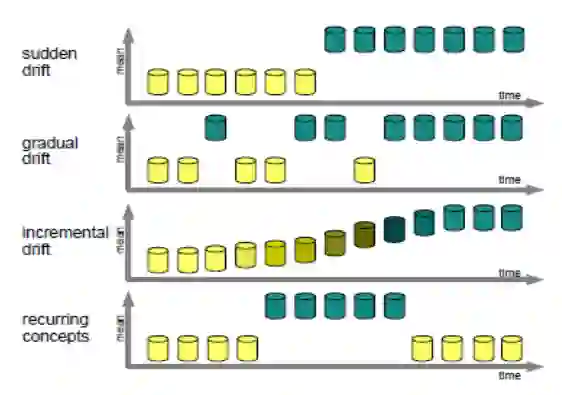

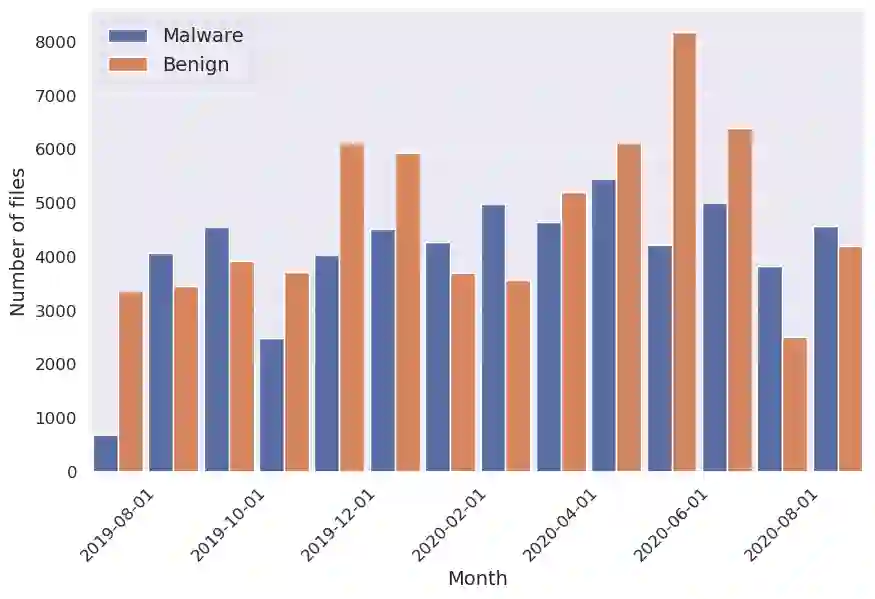

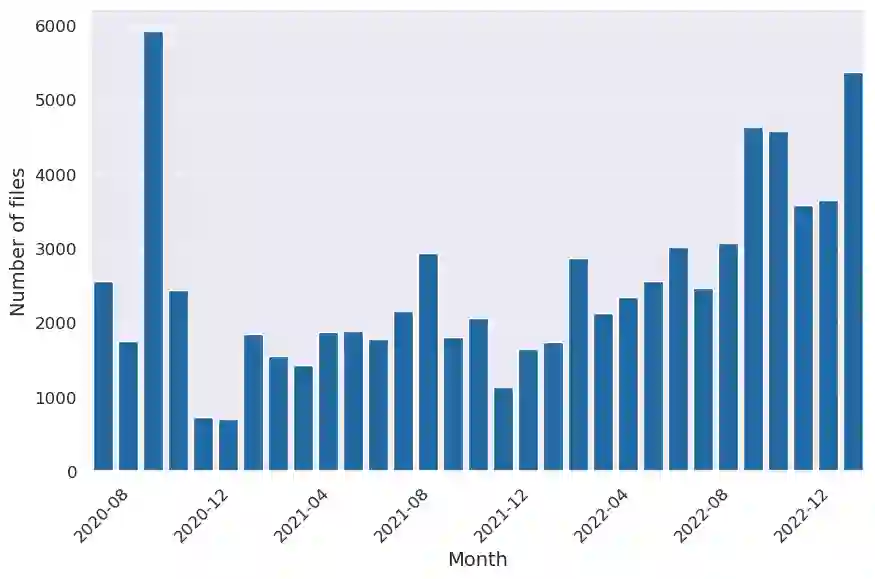

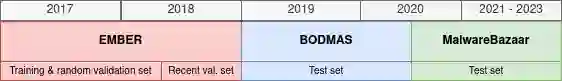

Despite the promising results of machine learning models in malicious file detection, they face the problem of concept drift caused by the constant evolution of malware. This leads to declining performance over time, as the data distribution of new files differs from that of the training set, requiring frequent model updates. In this work, we propose a model-agnostic protocol to improve a baseline neural network against drift. We show the importance of feature reduction and of training with the most recent validation set possible, and we propose a loss function named Drift-Resilient Binary Cross-Entropy, an improvement to the classical Binary Cross-Entropy that is more effective against drift. We train our model on the EMBER dataset, published in 2018, and evaluate it on a dataset of recent malicious files collected between 2020 and 2023. Our improved model shows promising results, detecting 15.2% more malware than the baseline model.
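
For reference, the classical Binary Cross-Entropy that the proposed loss builds on can be sketched as below. This is only the standard baseline loss; the abstract does not give the form of Drift-Resilient Binary Cross-Entropy, so that loss is not reproduced here. The function name, the label encoding (1 = malware, 0 = benign) and the clipping constant `eps` are illustrative assumptions.

```python
import math
from typing import Sequence


def binary_cross_entropy(labels: Sequence[int], probs: Sequence[float],
                         eps: float = 1e-12) -> float:
    """Mean classical Binary Cross-Entropy over a batch (baseline loss only).

    labels: ground truth, assumed encoded as 1 = malware, 0 = benign.
    probs:  predicted probability that each file is malicious.
    eps:    illustrative clipping constant that avoids log(0).
    """
    if len(labels) != len(probs) or len(labels) == 0:
        raise ValueError("labels and probs must be non-empty and of equal length")
    total = 0.0
    for y, p in zip(labels, probs):
        p = min(max(p, eps), 1.0 - eps)  # keep p strictly inside (0, 1)
        # Per-sample loss: -[y * log(p) + (1 - y) * log(1 - p)]
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(labels)
```

For example, `binary_cross_entropy([1, 0], [0.9, 0.1])` returns about 0.105, since both samples are predicted correctly with probability 0.9 and each contributes `-log(0.9)`. Confident wrong predictions are penalized much more heavily, which is the behavior a drift-oriented variant would modify.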

翻译:尽管机器学习模型在恶意文件检测方面取得了显著成果,但由于恶意软件的持续演化,这些模型面临着概念漂移的问题。随着时间的推移,新文件的数据分布与训练数据产生差异,导致模型性能下降,需要频繁更新模型。本研究提出一种与模型无关的协议,用于改进基线神经网络以应对概念漂移。我们论证了特征约简和使用最新验证集进行训练的重要性,并提出一种名为“抗漂移二元交叉熵”的损失函数,该函数是对经典二元交叉熵的改进,能更有效地应对概念漂移。我们在2018年发布的EMBER数据集上训练模型,并在2020年至2023年间收集的近期恶意文件数据集上进行评估。改进后的模型显示出良好效果,比基线模型多检测出15.2%的恶意软件。