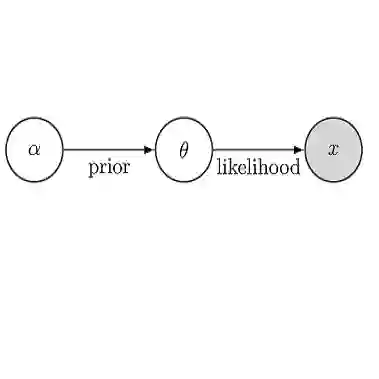

We develop an iterative framework for Bayesian inference problems in which the posterior distribution may involve computationally intensive models, intractable gradients, significant posterior concentration, and pronounced non-Gaussianity. Our approach integrates: (i) a generalized annealing scheme that combines geometric tempering with multi-fidelity modeling; (ii) expressive measure transport surrogates for the intermediate annealed distributions and the final target distribution, learned variationally without evaluating gradients of the target density; and (iii) an importance-weighting scheme that combines multiple quadrature rules, recycling and reweighting expensive model evaluations as successive posterior approximations are built. The scheme produces both a quadrature rule for computing posterior expectations and a transport-based approximation of the posterior from which independent Monte Carlo samples can be generated easily. We demonstrate the efficiency and accuracy of our approach on low-dimensional but strongly non-Gaussian Bayesian inverse problems involving partial differential equations.
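To make the roles of geometric tempering and importance reweighting concrete, the following is a minimal sketch on a one-dimensional toy problem. It is not the authors' algorithm: it uses no transport maps and no multi-fidelity models, and the Gaussian prior and likelihood, the fixed ladder `betas`, and all function names are assumptions introduced only for illustration. It shows how a single batch of likelihood evaluations can be reused, through self-normalized importance weights, for every tempered target proportional to prior times likelihood raised to the power beta.

```python
import math
import random


def log_likelihood(x, y=1.0, sigma=1.0):
    """Hypothetical stand-in for an expensive forward model:
    Gaussian log-likelihood of datum y given parameter x (up to a constant)."""
    return -0.5 * ((y - x) / sigma) ** 2


def tempered_weights(loglik, beta):
    """Self-normalized importance weights for prior samples, targeting the
    tempered density proportional to prior(x) * likelihood(x)**beta."""
    logw = [beta * ll for ll in loglik]
    m = max(logw)  # subtract the maximum for numerical stability
    w = [math.exp(lw - m) for lw in logw]
    total = sum(w)
    return [wi / total for wi in w]


def effective_sample_size(weights):
    """Effective sample size of normalized weights: 1 / sum(w_i^2)."""
    return 1.0 / sum(w * w for w in weights)


rng = random.Random(0)
# Draw once from the standard normal prior (assumed for this toy problem).
samples = [rng.gauss(0.0, 1.0) for _ in range(20000)]
# Evaluate the "expensive" model once; the values are recycled for every beta.
loglik = [log_likelihood(x) for x in samples]

betas = [0.0, 0.25, 0.5, 1.0]  # assumed fixed tempering ladder, prior to posterior
means, ess = {}, {}
for beta in betas:
    w = tempered_weights(loglik, beta)
    means[beta] = sum(wi * xi for wi, xi in zip(w, samples))  # weighted quadrature
    ess[beta] = effective_sample_size(w)
    print(f"beta={beta:.2f}  mean={means[beta]:.3f}  ESS={ess[beta]:.0f}")
```

For this conjugate toy problem the tempered posterior mean is beta / (1 + beta), so the estimate at beta = 1 should be close to 0.5. The effective sample size shrinks as beta grows, which is the degeneracy that replacing the prior with better intermediate approximations is meant to counter.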
