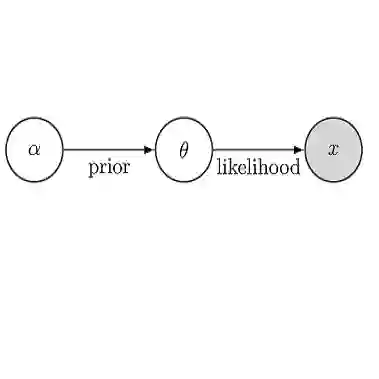

Bayesian inference gets its name from *Bayes's theorem*, which expresses posterior probabilities for hypotheses about a data generating process as the (normalized) product of prior probabilities and a likelihood function. But Bayesian inference uses all of probability theory, not just Bayes's theorem. Many hypotheses of scientific interest are *composite hypotheses*, with the strength of evidence for the hypothesis dependent on knowledge about auxiliary factors, such as the values of nuisance parameters (e.g., uncertain background rates or calibration factors). Many important capabilities of Bayesian methods arise from the use of the law of total probability, which instructs analysts to compute probabilities for composite hypotheses by *marginalization* over auxiliary factors. This tutorial targets relative newcomers to Bayesian inference, aiming to complement tutorials that focus on Bayes's theorem and how priors modulate likelihoods. The emphasis here is on marginalization over parameter spaces: both how it is the foundation for important capabilities, and how it may motivate caution when parameter spaces are large. Topics covered include the difference between likelihood and probability, the impact of priors beyond merely shifting the maximum likelihood estimate, and the role of marginalization in accounting for uncertainty in nuisance parameters, systematic error, and model misspecification.
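As a concrete illustration of marginalization over a nuisance parameter, the following minimal sketch computes a posterior for a signal rate while summing over an uncertain background rate on a grid. The setup is an assumption made only for illustration and is not taken from the tutorial: Poisson counts in an "on" measurement (signal plus background) and an "off" measurement (background only) with equal exposure, flat priors on both rates, and hypothetical names such as `marginal_posterior_signal`.

```python
import numpy as np
from math import lgamma

def poisson_logpmf(n, mu):
    """Log Poisson probability of n counts given expected counts mu (mu > 0)."""
    return n * np.log(mu) - mu - lgamma(n + 1)

def marginal_posterior_signal(n_on, n_off, s_grid, b_grid):
    """Posterior density for the signal s on s_grid, marginalized over background b.

    Assumes flat priors on s and b over the grids (an illustrative choice),
    so the joint posterior is proportional to the joint likelihood.
    """
    S, B = np.meshgrid(s_grid, b_grid, indexing="ij")
    # Joint log-likelihood: the on measurement sees s + b, the off measurement sees b.
    log_joint = poisson_logpmf(n_on, S + B) + poisson_logpmf(n_off, B)
    joint = np.exp(log_joint - log_joint.max())  # rescale to avoid underflow
    # Law of total probability: sum the joint over the nuisance parameter b.
    marginal = joint.sum(axis=1)
    ds = s_grid[1] - s_grid[0]
    return marginal / (marginal.sum() * ds)  # normalize to unit area on the grid

# Hypothetical data: 16 counts on-source, 9 counts off-source.
s_grid = np.linspace(0.0, 30.0, 601)
b_grid = np.linspace(0.01, 30.0, 600)  # start above zero so log(b) is finite
post = marginal_posterior_signal(16, 9, s_grid, b_grid)
print("posterior mode for s:", s_grid[np.argmax(post)])
```

The grid sum stands in for the integral over the background rate; in larger parameter spaces such brute-force sums become impractical, which is one reason marginalization calls for care.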
