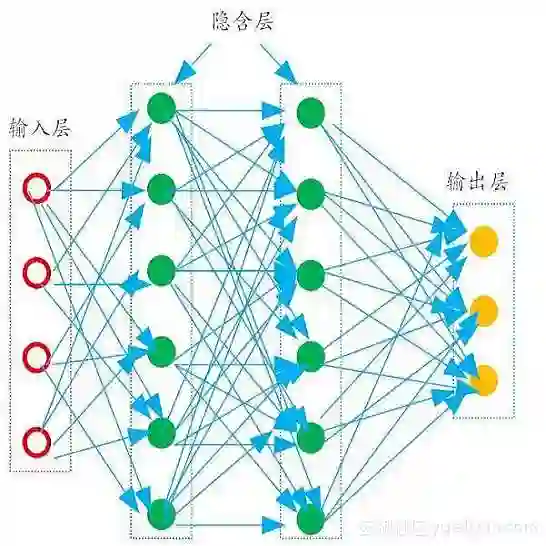

We investigate the learning of implicit neural representations (INRs) using an overparameterized multilayer perceptron (MLP) from a novel nonparametric teaching perspective. Nonparametric teaching offers an efficient example-selection framework for teaching nonparametrically defined (viz. non-closed-form) target functions, such as image functions defined by 2D grids of pixels. To address the costly training of INRs, we propose a paradigm called Implicit Neural Teaching (INT) that treats INR learning as a nonparametric teaching problem, where the given signal being fitted serves as the target function. The teacher then selects signal fragments for iterative training of the MLP to achieve fast convergence. By establishing a connection between the evolution of the MLP under parameter-based gradient descent and the evolution of the function under functional gradient descent in nonparametric teaching, we show for the first time that teaching an overparameterized MLP is consistent with teaching a nonparametric learner. This finding allows nonparametric teaching algorithms to be dropped in conveniently to broadly improve INR training efficiency, yielding 30%+ training-time savings across various input modalities.
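The teacher's role, selecting signal fragments for each training iteration, can be illustrated with a small greedy selection loop. The following is a minimal, hypothetical NumPy sketch, not the authors' implementation. It assumes the teacher picks the `k` coordinates with the largest current residual |f(x) − y| (one common greedy rule in nonparametric teaching), uses a toy 1D sinusoid as the "signal", and trains a small tanh MLP with hand-written backpropagation. The names `init_mlp`, `forward`, `sgd_step`, `greedy_select`, and `train`, and all hyperparameters, are illustrative assumptions.

```python
import numpy as np

def init_mlp(hidden=64, seed=0):
    # One-hidden-layer tanh MLP mapping a scalar coordinate to a scalar value.
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.normal(0.0, 1.0, size=(1, hidden)),
        "b1": np.zeros(hidden),
        "W2": rng.normal(0.0, 1.0 / np.sqrt(hidden), size=(hidden, 1)),
        "b2": np.zeros(1),
    }

def forward(p, x):
    # x has shape (n, 1); returns hidden activations (n, hidden) and outputs (n,).
    h = np.tanh(x @ p["W1"] + p["b1"])
    return h, (h @ p["W2"] + p["b2"]).ravel()

def sgd_step(p, x, y, lr):
    # One gradient-descent step on L = (1/2n) * sum((f - y)^2) over the given examples.
    h, f = forward(p, x)
    r = (f - y)[:, None] / len(y)            # dL/df, shape (n, 1)
    dh = (r @ p["W2"].T) * (1.0 - h ** 2)    # backpropagate through tanh
    grads = {
        "W2": h.T @ r,
        "b2": r.sum(axis=0),
        "W1": x.T @ dh,
        "b1": dh.sum(axis=0),
    }
    for name, g in grads.items():
        p[name] -= lr * g

def greedy_select(p, x, y, k):
    # Hypothetical teacher rule: pick the k coordinates with the largest |f(x) - y|.
    _, f = forward(p, x)
    return np.argsort(np.abs(f - y))[-k:]

def train(x, y, steps=1000, k=32, lr=0.02, select=True, seed=0):
    # Train on k examples per step, chosen greedily or uniformly at random;
    # returns the mean squared error over the full signal afterwards.
    p = init_mlp(seed=seed)
    rng = np.random.default_rng(seed + 1)
    for _ in range(steps):
        if select:
            idx = greedy_select(p, x, y, k)
        else:
            idx = rng.choice(len(y), size=k, replace=False)
        sgd_step(p, x[idx], y[idx], lr)
    _, f = forward(p, x)
    return float(np.mean((f - y) ** 2))

# Toy 1D "signal": 256 samples of a sinusoid on [-1, 1].
x = np.linspace(-1.0, 1.0, 256)[:, None]
y = np.sin(np.pi * x).ravel()
print("greedy MSE:", train(x, y, select=True))
print("random MSE:", train(x, y, select=False))
```

Running it prints the full-signal MSE after greedy and after uniformly random selection under the same per-step budget. The numbers depend on the seed, signal, and hyperparameters, so treat the comparison as illustrative only; it does not reproduce the reported savings. A small learning rate is used because, without NTK-style output scaling, the effective step in function space grows with the hidden width.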

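One standard way to relate parameter-based gradient descent to functional gradient descent is through a first-order Taylor expansion and the neural tangent kernel (NTK). The sketch below assumes a per-example loss $\ell$, training pairs $(x_i, y_i)_{i=1}^{N}$, plain gradient descent with step size $\eta$, and a learner $f_\theta$. It is an illustrative derivation of this kind of connection, not a reproduction of the paper's proof. The parameter update is

$$
\theta_{t+1} = \theta_t - \frac{\eta}{N}\sum_{i=1}^{N} \frac{\partial \ell\big(f_{\theta_t}(x_i), y_i\big)}{\partial f}\,\nabla_\theta f_{\theta_t}(x_i),
$$

so, to first order in $\theta_{t+1}-\theta_t$,

$$
f_{\theta_{t+1}}(x) \approx f_{\theta_t}(x) + \nabla_\theta f_{\theta_t}(x)^{\top}(\theta_{t+1}-\theta_t)
= f_{\theta_t}(x) - \frac{\eta}{N}\sum_{i=1}^{N} K_{\theta_t}(x, x_i)\,\frac{\partial \ell\big(f_{\theta_t}(x_i), y_i\big)}{\partial f},
\qquad K_\theta(x, x') = \nabla_\theta f_\theta(x)^{\top}\nabla_\theta f_\theta(x').
$$

Functional gradient descent on $L(f) = \frac{1}{N}\sum_{i=1}^{N} \ell\big(f(x_i), y_i\big)$ in a reproducing kernel Hilbert space with kernel $K$ gives

$$
f_{t+1}(x) = f_t(x) - \frac{\eta}{N}\sum_{i=1}^{N} K(x, x_i)\,\frac{\partial \ell\big(f_t(x_i), y_i\big)}{\partial f}.
$$

The two updates coincide when $K_{\theta_t} \approx K$, which the NTK literature shows holds approximately for sufficiently wide (overparameterized) networks. Choosing which pairs $(x_i, y_i)$ enter each sum is where a nonparametric teacher intervenes.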