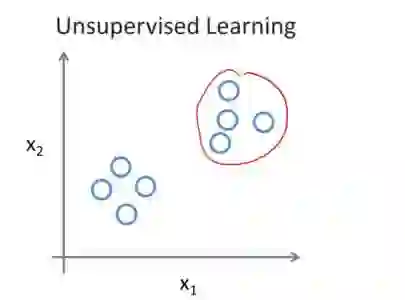

Federated learning is effective at modeling decentralized data. In practice, client data are often not well labeled, which motivates federated unsupervised learning (FUSL) on non-IID data. However, the performance of existing FUSL methods suffers from insufficient representations, namely (1) representation collapse entanglement among local and global models, and (2) inconsistent representation spaces among local models. The former means that representation collapse in one local model subsequently affects the global model and the other local models. The latter means that clients model data representations with inconsistent parameters because supervision signals are lacking. In this work, we propose FedU2, which enhances the generation of uniform and unified representations in FUSL with non-IID data. Specifically, FedU2 consists of a flexible uniform regularizer (FUR) and an efficient unified aggregator (EUA). FUR, on each client, avoids representation collapse by dispersing samples uniformly, and EUA, on the server, promotes unified representations by constraining client model updates to be consistent. To extensively validate the performance of FedU2, we conduct both cross-device and cross-silo evaluation experiments on two benchmark datasets, CIFAR10 and CIFAR100.
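To make "dispersing samples uniformly" concrete, the following is a minimal sketch of a generic pairwise uniformity measure on the unit hypersphere. It is an illustrative stand-in, not the FUR formulation of FedU2, which the abstract does not specify. The function names, the temperature `t`, and the toy data are all assumptions made for this example. Lower values indicate more evenly spread embeddings, and a value near zero indicates collapse.

```python
import math
import random


def l2_normalize(v):
    """Project a vector onto the unit hypersphere."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def uniformity_loss(embeddings, t=2.0):
    """Log of the mean Gaussian potential over all distinct pairs.

    Hypothetical helper for illustration only (not FedU2's FUR).
    The value is at most 0; it approaches 0 when all embeddings coincide.
    """
    z = [l2_normalize(v) for v in embeddings]
    total, pairs = 0.0, 0
    for i in range(len(z)):
        for j in range(i + 1, len(z)):
            sq_dist = sum((a - b) ** 2 for a, b in zip(z[i], z[j]))
            total += math.exp(-t * sq_dist)
            pairs += 1
    return math.log(total / pairs)


rng = random.Random(0)

# Toy "collapsed" representations: every sample sits near the same point.
collapsed = [
    [1.0 + 1e-3 * rng.gauss(0, 1), 1e-3 * rng.gauss(0, 1), 1e-3 * rng.gauss(0, 1)]
    for _ in range(64)
]

# Toy "dispersed" representations: random directions, spread over the sphere.
dispersed = [[rng.gauss(0, 1) for _ in range(3)] for _ in range(64)]

loss_collapsed = uniformity_loss(collapsed)
loss_dispersed = uniformity_loss(dispersed)
print(f"collapsed: {loss_collapsed:.4f}  dispersed: {loss_dispersed:.4f}")
```

In a training loop, a term of this kind would typically be added to each client's local objective so that minimizing it pushes embeddings apart. How FedU2 weights or adapts its own regularizer is not stated here.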
