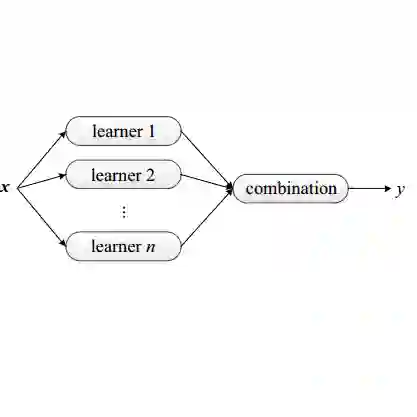

We introduce Statsformer, a principled framework for integrating large language model (LLM)-derived knowledge into supervised statistical learning. Existing approaches are limited in adaptability and scope: they either inject LLM guidance as an unvalidated heuristic, which is sensitive to LLM hallucination, or embed semantic information within a single fixed learner. Statsformer overcomes both limitations through a guardrailed ensemble architecture. We embed LLM-derived feature priors within an ensemble of linear and nonlinear learners, adaptively calibrating their influence via cross-validation. This design yields a flexible system with an oracle-style guarantee that it performs no worse than any convex combination of its in-library base learners, up to statistical error. Empirically, informative priors yield consistent performance improvements, while uninformative or misspecified LLM guidance is automatically downweighted, mitigating the impact of hallucinations across a diverse range of prediction tasks.
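The guardrail idea can be illustrated with a small, self-contained sketch. It is not the Statsformer implementation. It assumes a hypothetical vector `llm_scores` of feature relevances, a ridge learner whose per-feature penalty is `score ** -alpha` (an assumed form, chosen only for illustration), and a coarse grid search over convex weights in place of whatever aggregation procedure the paper uses. Setting `alpha = 0` recovers the prior-free learner, so cross-validation can fall back to it when the prior is unhelpful.

```python
import random


def solve(A, b):
    """Solve A x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[piv] = M[piv], M[c]
        for r in range(n):
            if r != c:
                f = M[r][c] / M[c][c]
                M[r] = [a - f * p for a, p in zip(M[r], M[c])]
    return [M[i][n] / M[i][i] for i in range(n)]


def weighted_ridge(X, y, penalty, lam=5.0):
    """Ridge fit with per-feature penalties: (X'X + lam*diag(penalty)) beta = X'y."""
    p = len(X[0])
    A = [[sum(r[i] * r[j] for r in X) + (lam * penalty[i] if i == j else 0.0)
          for j in range(p)] for i in range(p)]
    b = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(p)]
    return solve(A, b)


def oof_predictions(X, y, penalty, k=5):
    """Out-of-fold predictions from k-fold cross-validation."""
    n = len(y)
    preds = [0.0] * n
    for fold in range(k):
        train = [i for i in range(n) if i % k != fold]
        beta = weighted_ridge([X[i] for i in train], [y[i] for i in train], penalty)
        for i in range(fold, n, k):
            preds[i] = sum(bj * xj for bj, xj in zip(beta, X[i]))
    return preds


def mse(pred, y):
    return sum((a - b) ** 2 for a, b in zip(pred, y)) / len(y)


# Synthetic data: only the first two of six features carry signal.
rng = random.Random(0)
X = [[rng.gauss(0, 1) for _ in range(6)] for _ in range(60)]
y = [2.0 * r[0] - 1.5 * r[1] + rng.gauss(0, 1) for r in X]

# Hypothetical LLM relevance scores in (0, 1]; higher means "more relevant".
llm_scores = [0.9, 0.8, 0.1, 0.1, 0.1, 0.1]

# One base learner per prior strength; alpha = 0 ignores the prior entirely.
alphas = [0.0, 1.0, 2.0]
oof = [oof_predictions(X, y, [s ** -a for s in llm_scores]) for a in alphas]
single_cv = [mse(p, y) for p in oof]

# Search convex weights on a simplex grid (step 0.1) that minimise CV error.
# The grid contains every vertex, so the blend is never worse in CV error
# than the best single learner.
grid = [(i / 10, j / 10, (10 - i - j) / 10)
        for i in range(11) for j in range(11 - i)]


def blend(w):
    return [sum(wk * p[i] for wk, p in zip(w, oof)) for i in range(len(y))]


best_w = min(grid, key=lambda w: mse(blend(w), y))
ensemble_cv = mse(blend(best_w), y)

print("CV MSE per prior strength:", [round(v, 3) for v in single_cv])
print("selected convex weights:", best_w)
print("ensemble CV MSE:", round(ensemble_cv, 3))
```

Because the weight grid includes the vertex that puts all mass on the `alpha = 0` learner, a misleading `llm_scores` vector cannot make the cross-validated error worse than the prior-free baseline in this toy setting. That mirrors, in miniature, the "no worse than any convex combination, up to statistical error" behavior the abstract describes.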
