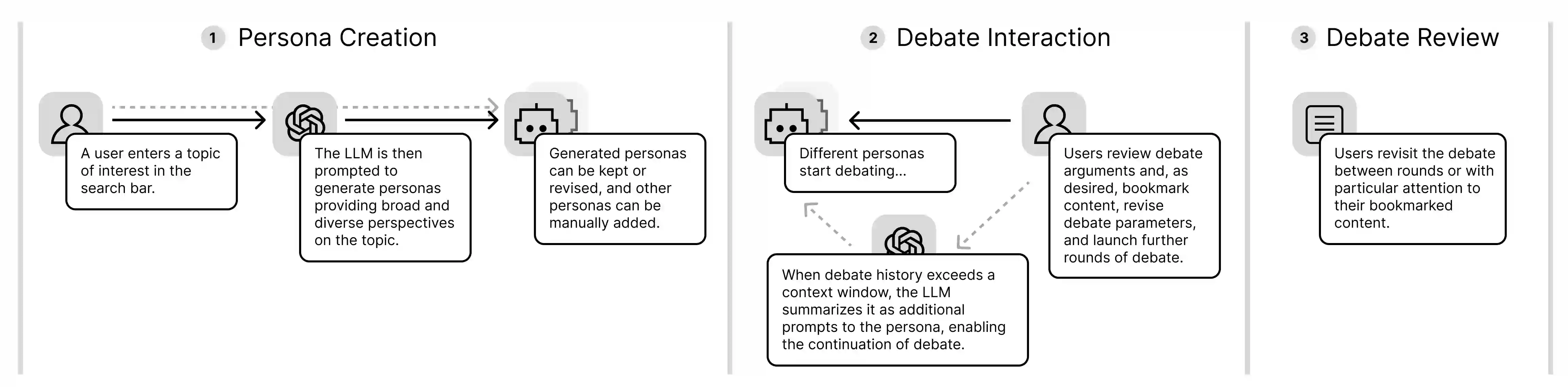

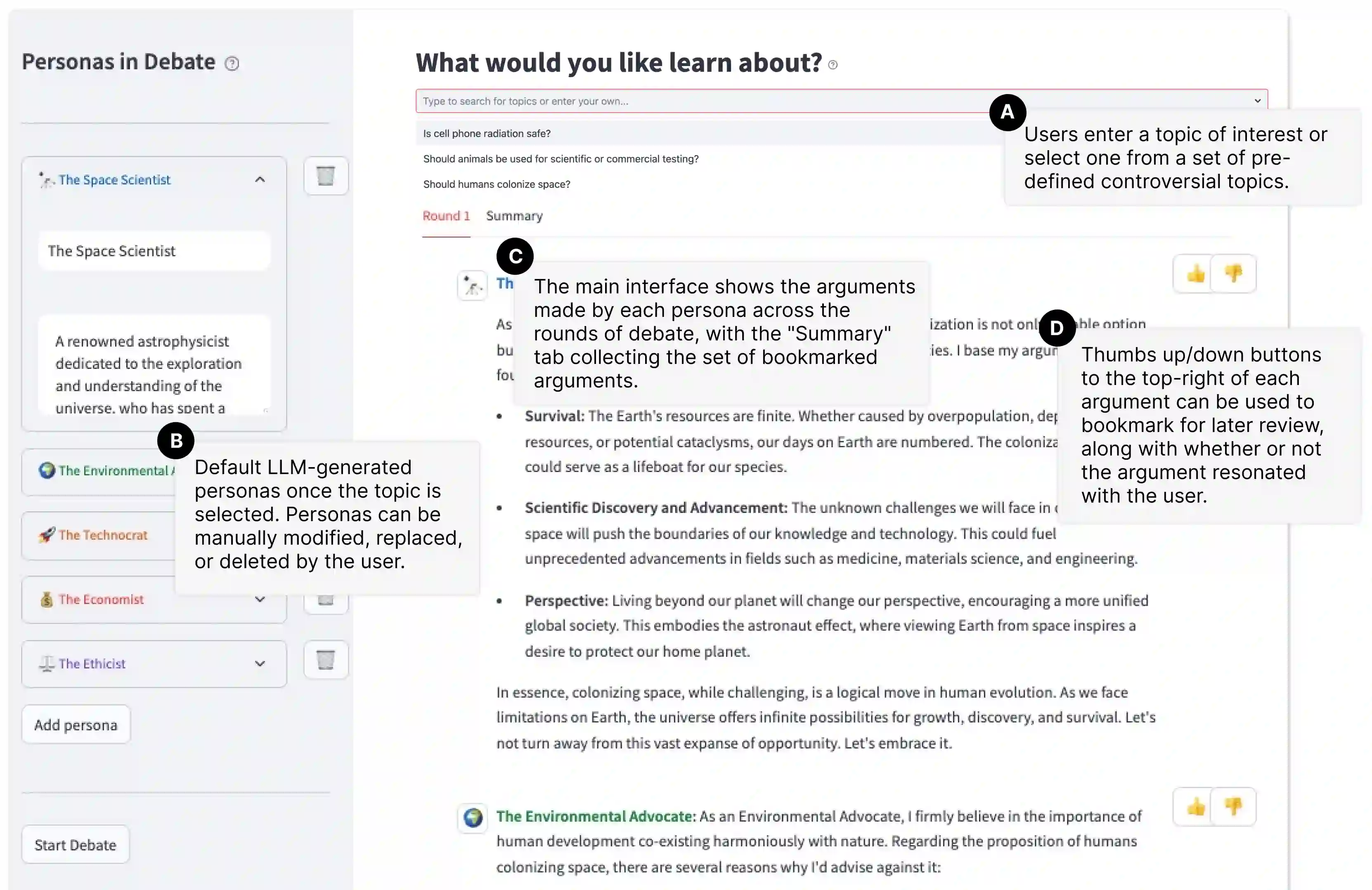

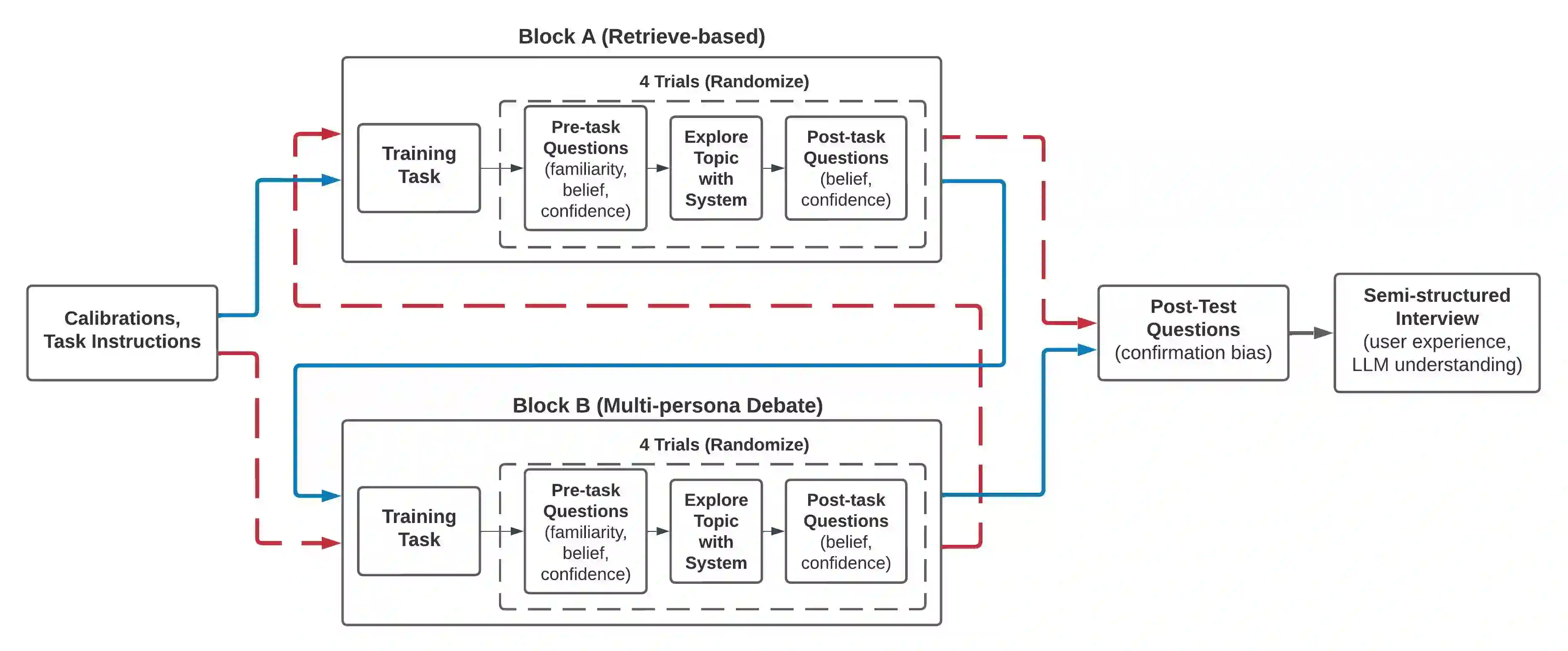

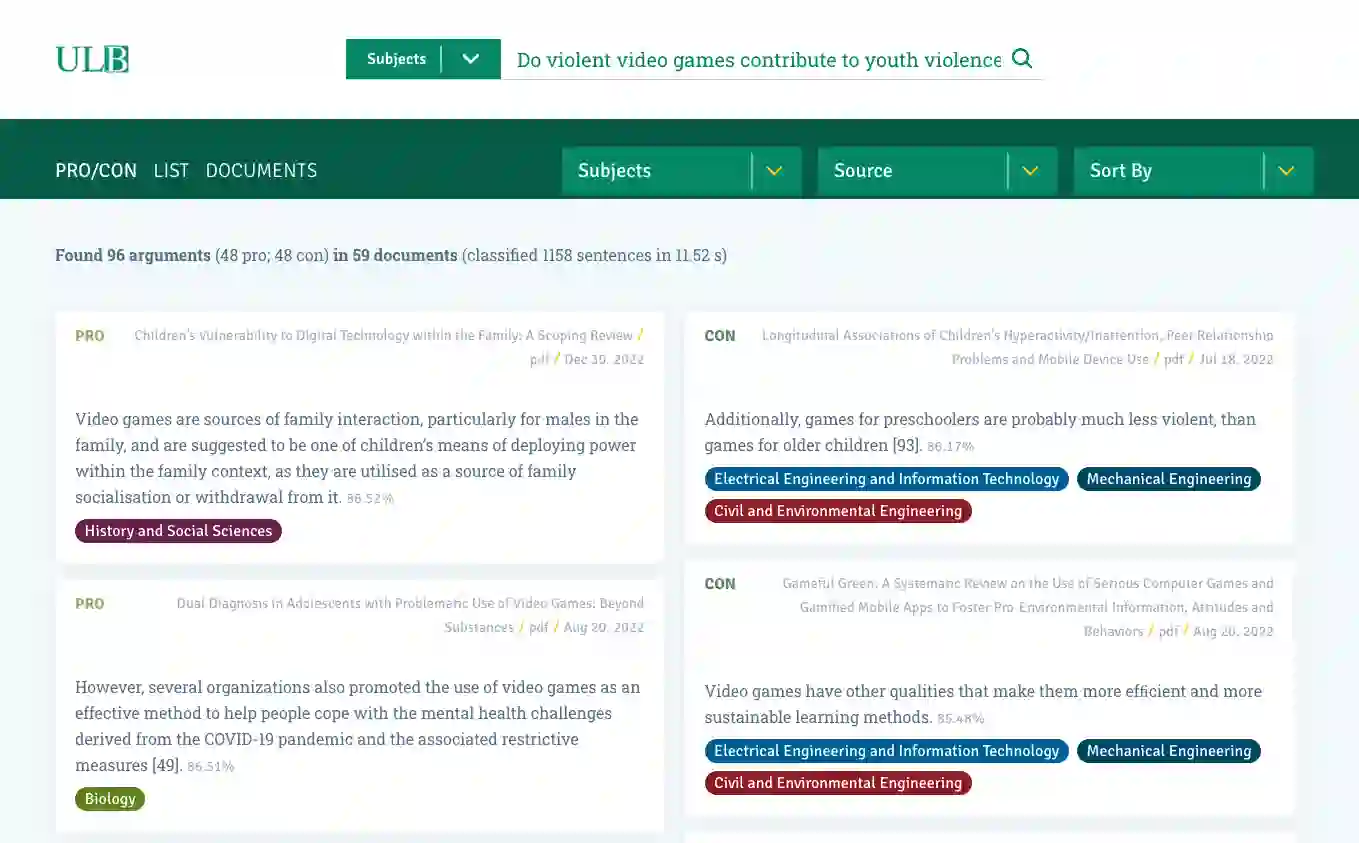

Large language models (LLMs) are enabling designers to create exciting new user experiences for information access. In this work, we present a system that generates LLM personas to debate a topic of interest from different perspectives. How might information seekers use and benefit from such a system? Can centering information access around diverse viewpoints help to mitigate thorny challenges like confirmation bias, in which information seekers over-trust search results that match their existing beliefs? How do potential biases and hallucinations in LLMs play out alongside human users who are also fallible and possibly biased? We expose participants to multiple viewpoints on controversial issues in a mixed-methods, within-subjects study, using eye-tracking metrics to quantitatively assess cognitive engagement alongside qualitative feedback. Compared to a baseline search system, our multi-persona debate system elicits more creative interactions and more diverse information seeking, and it more effectively reduces users' confirmation bias and their conviction in their initial beliefs. Overall, our study contributes to the emerging design space of LLM-based information access systems, specifically investigating the potential of simulated personas to promote greater exposure to information diversity, emulate collective intelligence, and mitigate bias in information seeking.
