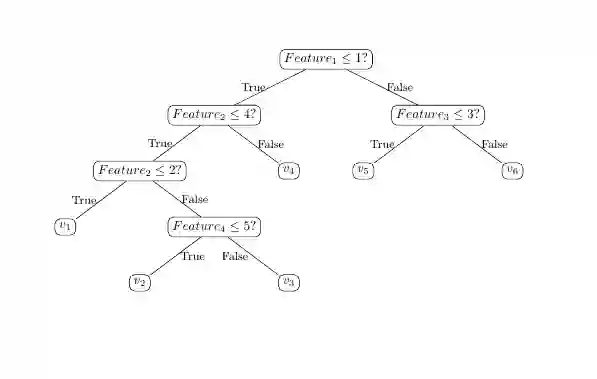

Decision-tree-based methods handle tabular data efficiently. Conventional tree-growing methods, however, are greedy and therefore often yield suboptimal trees, and the inherent structure of a decision tree limits the hardware options for implementing it in parallel. To overcome these deficiencies, we present a compact representation of binary decision trees. We explicitly formulate how the prediction of a binary decision tree depends on its binary tests, and we construct a function that guides an input sample from the root to the appropriate leaf node. Based on this formulation, we introduce a new interpretation of binary decision trees and then approximate the formulation with continuous functions. Finally, we interpret the decision tree as a model combination method and propose a selection-prediction scheme that unifies several learning methods.
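To make the idea of routing a sample through explicit binary tests more concrete, here is a minimal sketch in Python. It is not the formulation from the paper: the tree, the `PATH` sign matrix, the leaf values, the sigmoid relaxation, and all names (`hard_tests`, `select_leaf`, `soft_predict`, `temperature`) are assumptions chosen for illustration. The sketch evaluates all tests at once, selects the leaf whose path agrees with every test on it, and then replaces the hard tests with a continuous approximation.

```python
import math

# Hypothetical depth-2 tree: 3 internal nodes (binary tests), 4 leaves.
# Test i is true when x[FEATURE[i]] <= THRESHOLD[i].
FEATURE = [0, 1, 1]
THRESHOLD = [0.5, 0.3, 0.7]

# PATH[leaf][i] is +1 if test i must be true on the root-to-leaf path,
# -1 if it must be false, and 0 if test i does not lie on that path.
PATH = [
    [+1, +1, 0],  # root true,  node 1 true
    [+1, -1, 0],  # root true,  node 1 false
    [-1, 0, +1],  # root false, node 2 true
    [-1, 0, -1],  # root false, node 2 false
]
LEAF_VALUE = [10.0, 20.0, 30.0, 40.0]


def hard_tests(x):
    """Evaluate every binary test, encoded as +1 (true) or -1 (false)."""
    return [1 if x[f] <= t else -1 for f, t in zip(FEATURE, THRESHOLD)]


def select_leaf(x):
    """Return the leaf whose path agrees with all tests that lie on it."""
    b = hard_tests(x)
    for leaf, path in enumerate(PATH):
        agreement = sum(p * bi for p, bi in zip(path, b))
        depth = sum(abs(p) for p in path)
        if agreement == depth:
            return leaf
    raise ValueError("no leaf matched; PATH does not describe a valid tree")


def predict(x):
    """Hard prediction: the value stored at the selected leaf."""
    return LEAF_VALUE[select_leaf(x)]


def soft_predict(x, temperature=0.05):
    """Continuous relaxation (an assumption): each test becomes a sigmoid."""
    p_true = [
        1.0 / (1.0 + math.exp(-(t - x[f]) / temperature))
        for f, t in zip(FEATURE, THRESHOLD)
    ]
    weights = []
    for path in PATH:
        w = 1.0
        for p, pt in zip(path, p_true):
            if p == 1:
                w *= pt
            elif p == -1:
                w *= 1.0 - pt
        weights.append(w)
    # The leaf weights sum to 1, so this is a weighted mix of leaf values.
    return sum(w * v for w, v in zip(weights, LEAF_VALUE))
```

In this sketch, `predict([0.2, 0.1])` returns `10.0`, because both tests on the path to the first leaf are true. As `temperature` shrinks, `soft_predict` approaches `predict` for inputs that do not sit exactly on a threshold. The weighted sum in `soft_predict` also hints at the model combination reading: a selection step produces the leaf weights, and a prediction step combines the leaf values.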
