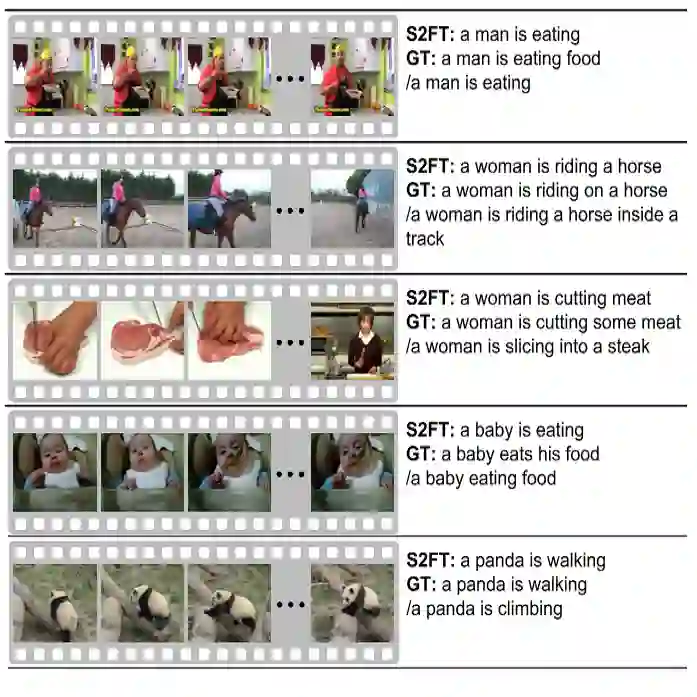

Video captioning aims to describe video content in natural language, which requires understanding and interpreting the scenes, actions, and events that occur simultaneously in view. Current approaches have concentrated mainly on visual cues, often neglecting the rich information available in the audio modality and the inter-dependencies between audio and visual signals. In this work, we introduce a novel video captioning method, trained with a multi-modal contrastive loss, that emphasizes both multi-modal integration and interpretability. Our approach is designed to capture the dependencies between these modalities, resulting in more accurate and more pertinent captions. Furthermore, we highlight the importance of interpretability, employing multiple attention mechanisms that provide insight into the model's decision-making process. Our experimental results demonstrate that the proposed method performs favorably against state-of-the-art models on the commonly used benchmark datasets MSR-VTT and VATEX.
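
The abstract does not spell out the form of the multi-modal contrastive loss. As an illustration only, the sketch below assumes a symmetric InfoNCE-style objective over paired video and audio embeddings, where matching pairs in a batch are pulled together and mismatched pairs are pushed apart. The function names, the temperature value, and the choice of InfoNCE are all assumptions, not details taken from the paper.

```python
import math

def l2_normalize(vec):
    # Scale a vector to unit length (guarding against a zero norm).
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]

def log_softmax(row):
    # Numerically stable log-softmax over a list of logits.
    m = max(row)
    log_z = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - log_z for x in row]

def symmetric_contrastive_loss(video_embs, audio_embs, temperature=0.07):
    # Hypothetical helper: video_embs[i] and audio_embs[i] are assumed to
    # come from the same clip; every other pairing is a negative.
    v = [l2_normalize(e) for e in video_embs]
    a = [l2_normalize(e) for e in audio_embs]
    n = len(v)
    # Cosine similarity matrix scaled by the temperature.
    logits = [
        [sum(x * y for x, y in zip(v[i], a[j])) / temperature for j in range(n)]
        for i in range(n)
    ]
    # Video-to-audio direction: each row should select its own column.
    v2a = -sum(log_softmax(logits[i])[i] for i in range(n)) / n
    # Audio-to-video direction: each column should select its own row.
    cols = [[logits[i][j] for i in range(n)] for j in range(n)]
    a2v = -sum(log_softmax(cols[j])[j] for j in range(n)) / n
    return 0.5 * (v2a + a2v)

# Toy batch of three clips with perfectly aligned embeddings.
video = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
audio_aligned = [row[:] for row in video]
# The same audio embeddings assigned to the wrong clips.
audio_shuffled = [video[1], video[2], video[0]]

loss_aligned = symmetric_contrastive_loss(video, audio_aligned)
loss_shuffled = symmetric_contrastive_loss(video, audio_shuffled)
```

Under this assumed objective, the loss is close to zero when each video embedding is most similar to its own audio embedding and grows when the pairing is broken, which is the sense in which such a loss rewards capturing the dependency between modalities.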
