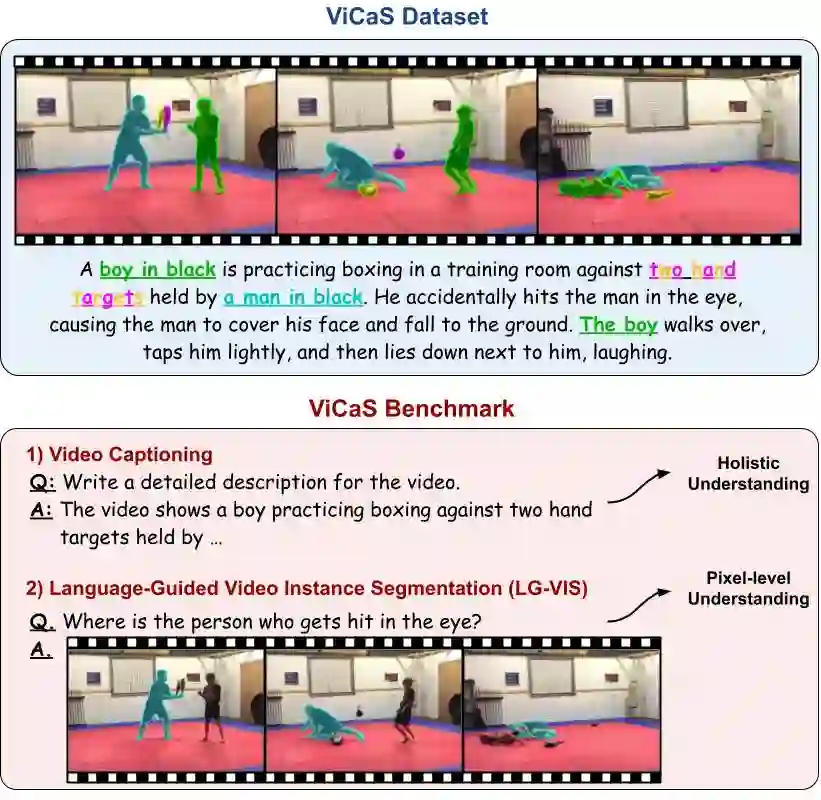

Recent advances in multimodal large language models (MLLMs) have expanded research in video understanding, primarily focusing on high-level tasks such as video captioning and question-answering. Meanwhile, a smaller body of work addresses dense, pixel-precise segmentation tasks, which typically involve category-guided or referral-based object segmentation. Although both directions are essential for developing models with human-level video comprehension, they have largely evolved separately, with distinct benchmarks and architectures. This paper aims to unify these efforts by introducing ViCaS, a new dataset containing thousands of challenging videos, each annotated with detailed, human-written captions and temporally consistent, pixel-accurate masks for multiple objects with phrase grounding. Our benchmark evaluates models on both holistic/high-level understanding and language-guided, pixel-precise segmentation. We also present carefully validated evaluation measures and propose an effective model architecture that can tackle our benchmark. Project page: https://ali2500.github.io/vicas-project/
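
The abstract says each ViCaS video pairs a human-written caption with per-object mask tracks, and links the caption's phrases to those tracks. The Python below is a minimal sketch of how one such annotation could be held in memory. The class and field names (`VideoAnnotation`, `GroundedPhrase`, `ObjectTrack`, `mask_for_phrase`) are hypothetical. The sketch does not reproduce the dataset's actual file format, which is not described here. It models temporal consistency by giving each object one track ID that carries one boolean mask per frame.

```python
from dataclasses import dataclass
from typing import Dict, List

import numpy as np


@dataclass
class ObjectTrack:
    """Hypothetical: one object followed through the whole video."""
    track_id: int
    masks: List[np.ndarray]  # one H x W boolean mask per frame


@dataclass
class GroundedPhrase:
    """Hypothetical: a caption span [start, end) and the tracks it refers to."""
    start: int
    end: int
    track_ids: List[int]


@dataclass
class VideoAnnotation:
    """Hypothetical container pairing a caption with phrase-grounded mask tracks."""
    caption: str
    num_frames: int
    phrases: List[GroundedPhrase]
    tracks: Dict[int, ObjectTrack]

    def __post_init__(self) -> None:
        # Every track must cover every frame, so an object keeps one ID throughout.
        for track in self.tracks.values():
            if len(track.masks) != self.num_frames:
                raise ValueError(
                    f"track {track.track_id} has {len(track.masks)} masks, "
                    f"expected {self.num_frames}"
                )
        # Every phrase must refer to at least one existing track.
        for phrase in self.phrases:
            if not phrase.track_ids:
                raise ValueError("phrase is not grounded to any track")
            missing = [t for t in phrase.track_ids if t not in self.tracks]
            if missing:
                raise ValueError(f"phrase refers to unknown tracks {missing}")

    def phrase_text(self, idx: int) -> str:
        """Return the caption substring covered by phrase `idx`."""
        p = self.phrases[idx]
        return self.caption[p.start:p.end]

    def mask_for_phrase(self, idx: int, frame: int) -> np.ndarray:
        """Union, at one frame, of the masks of all objects a phrase refers to."""
        masks = [self.tracks[t].masks[frame] for t in self.phrases[idx].track_ids]
        return np.logical_or.reduce(masks)
```

Consider the caption "A dog chases a ball." One annotation could ground "A dog" (characters 0 to 5) to track 1 and "a ball" (characters 13 to 19) to track 2. `mask_for_phrase(0, t)` would then return the dog's mask at frame `t`. A phrase grounded to several tracks returns the union of their masks, which is one plausible target for language-guided segmentation. The actual ViCaS evaluation protocol may differ.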