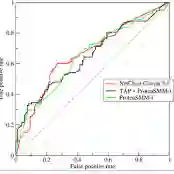

In high-stakes risk prediction, quantifying uncertainty through interval-valued predictions is essential for reliable decision-making. However, standard evaluation tools such as the receiver operating characteristic (ROC) curve and the area under the curve (AUC) are designed for point scores and fail to capture the impact of predictive uncertainty on ranking performance. We propose an uncertainty-aware ROC framework for interval-valued predictions, introducing two new measures: $AUC_L$ and $AUC_U$. The framework yields a three-region decomposition of the ROC plane that partitions pairwise rankings into correct, incorrect, and uncertain orderings. It naturally supports selective prediction by allowing models to abstain from ranking cases with overlapping intervals, thereby optimizing the trade-off between abstention rate and discriminative reliability. We prove that, under valid class-conditional coverage, $AUC_L$ and $AUC_U$ provide formal lower and upper bounds on the theoretically optimal AUC ($AUC^*$), characterizing the limit of achievable discrimination. The framework applies broadly to interval-valued prediction models, regardless of how the intervals are constructed. Experiments on real-world benchmark datasets, using bootstrap-based intervals as one instantiation, validate the framework's correctness and demonstrate its practical utility for uncertainty-aware evaluation and decision-making.
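To make the three-region decomposition concrete, the following is a minimal sketch of how pairwise orderings could be counted from interval predictions. It rests on assumptions that the abstract does not spell out: a positive-negative pair is treated as correctly ordered when the positive's lower bound exceeds the negative's upper bound, as incorrectly ordered when the positive's upper bound lies below the negative's lower bound, and as uncertain whenever the two intervals overlap. Under that reading, $AUC_L$ is taken to be the fraction of correct pairs and $AUC_U$ the fraction of correct plus uncertain pairs. The function name `interval_auc` and its return keys are hypothetical and chosen only for illustration.

```python
import numpy as np


def interval_auc(lower, upper, labels):
    """Count correct / incorrect / uncertain positive-negative pairs.

    Assumed definitions (not taken verbatim from the paper):
      correct   : positive lower bound > negative upper bound
      incorrect : positive upper bound < negative lower bound
      uncertain : the two intervals overlap
    """
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    is_pos = np.asarray(labels).astype(bool)

    # Broadcast positives (rows) against negatives (columns).
    pos_lo = lower[is_pos][:, None]
    pos_hi = upper[is_pos][:, None]
    neg_lo = lower[~is_pos][None, :]
    neg_hi = upper[~is_pos][None, :]

    correct = pos_lo > neg_hi
    incorrect = pos_hi < neg_lo
    uncertain = ~(correct | incorrect)

    n_pairs = correct.size
    n_correct = int(correct.sum())
    n_incorrect = int(incorrect.sum())
    n_uncertain = int(uncertain.sum())
    n_decided = n_correct + n_incorrect

    return {
        "auc_l": n_correct / n_pairs,
        "auc_u": (n_correct + n_uncertain) / n_pairs,
        # Fraction of pairs on which the model abstains from ranking.
        "abstention_rate": n_uncertain / n_pairs,
        # AUC restricted to pairs that were actually ranked.
        "selective_auc": n_correct / n_decided if n_decided else float("nan"),
    }


# Toy data: two positives followed by two negatives.
result = interval_auc(
    lower=[0.7, 0.4, 0.1, 0.3],
    upper=[0.9, 0.6, 0.2, 0.5],
    labels=[1, 1, 0, 0],
)
print(result)
```

In this toy example three of the four positive-negative pairs have disjoint intervals in the right order, and one pair overlaps, so the sketch reports `auc_l = 0.75`, `auc_u = 1.0`, and an abstention rate of 0.25. The gap between the two values is exactly the share of uncertain pairs, which is what the selective-prediction trade-off described above acts on.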
