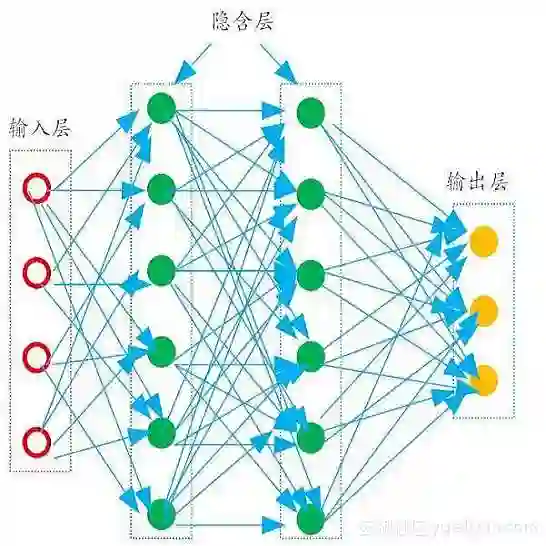

Reconstructing dynamic urban scenes is challenging because of their intrinsic geometric structures and spatiotemporal dynamics. Existing methods that model dynamic urban scenes without priors on potentially moving regions often produce suboptimal results. Meanwhile, approaches that rely on manual 3D annotations achieve better reconstruction quality but are impractical because the labeling is labor-intensive. In this paper, we revisit the potential of 2D semantic maps for classifying Gaussians as dynamic or static and for integrating spatial and temporal dimensions in urban scene representation. We introduce Urban4D, a novel framework that employs a semantic-guided decomposition strategy inspired by advances in deep 2D semantic map generation. Our approach identifies potentially dynamic objects through reliable semantic Gaussians. To explicitly model dynamic objects, we propose an intuitive and effective 4D Gaussian splatting (4DGS) representation that aggregates temporal information through a learnable time embedding for each Gaussian and uses a multilayer perceptron (MLP) to predict each Gaussian's deformation at a desired timestamp. For more accurate static reconstruction, we also design a k-nearest neighbor (KNN)-based consistency regularization for the ground surface, which is hard to reconstruct because of its low texture. Extensive experiments on real-world datasets demonstrate that Urban4D achieves quality comparable to or better than previous state-of-the-art methods while effectively capturing dynamic objects and maintaining high visual fidelity for static elements.
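The abstract names two mechanisms without giving their exact form: a per-Gaussian learnable time embedding fed to an MLP that predicts deformation at a timestamp, and a KNN-based consistency penalty on ground Gaussians. The pure-Python sketch below illustrates one plausible reading of each and is not the authors' implementation. Several details are assumptions: the MLP input is the concatenation of the Gaussian's embedding with a sinusoidal encoding of a normalized timestamp; only the Gaussian center is deformed (a full 4DGS model may also deform rotation and scale); the regularized ground attribute (here a scalar height) and `k=3` are hypothetical; and all function names are made up for illustration. Training and gradients are omitted.

```python
import math
import random

# --- Dynamic branch (hypothetical sketch): per-Gaussian time embedding + shared MLP ---

def time_encoding(t, n_freqs=4):
    """Assumed sinusoidal encoding of a timestamp t normalized to [0, 1]."""
    enc = [t]
    for k in range(n_freqs):
        enc += [math.sin((2 ** k) * math.pi * t), math.cos((2 ** k) * math.pi * t)]
    return enc

def init_mlp(in_dim, hidden, out_dim, seed=0):
    """Random (untrained) weights for a one-hidden-layer ReLU MLP."""
    rng = random.Random(seed)
    w1 = [[rng.gauss(0.0, 0.1) for _ in range(in_dim)] for _ in range(hidden)]
    w2 = [[rng.gauss(0.0, 0.1) for _ in range(hidden)] for _ in range(out_dim)]
    return w1, [0.0] * hidden, w2, [0.0] * out_dim

def mlp_forward(params, x):
    w1, b1, w2, b2 = params
    h = [max(0.0, sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(w1, b1)]
    return [sum(w * hi for w, hi in zip(row, h)) + b for row, b in zip(w2, b2)]

def deform_centers(centers, embeddings, params, t):
    """Center of Gaussian i at time t = canonical center + MLP([z_i, enc(t)])."""
    te = time_encoding(t)
    deformed = []
    for mu, z in zip(centers, embeddings):
        delta = mlp_forward(params, z + te)  # list concatenation of z_i and enc(t)
        deformed.append([m + d for m, d in zip(mu, delta)])
    return deformed

# --- Static branch (hypothetical sketch): KNN consistency on ground Gaussians ---

def knn_consistency_loss(positions, attrs, k=3):
    """Mean squared gap between each Gaussian's attribute and its k-NN average."""
    n = len(positions)
    if n < 2:
        return 0.0
    k = min(k, n - 1)
    total = 0.0
    for i in range(n):
        nearest = sorted(
            (math.dist(positions[i], positions[j]), j) for j in range(n) if j != i
        )[:k]
        nbrs = [j for _, j in nearest]
        for c in range(len(attrs[i])):
            mean_c = sum(attrs[j][c] for j in nbrs) / k
            total += (attrs[i][c] - mean_c) ** 2
    return total / n

# Usage with toy data (untrained weights; no gradients are computed here).
EMB_DIM = 8
rng = random.Random(1)
centers = [[rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(5)]
embeddings = [[rng.gauss(0.0, 0.1) for _ in range(EMB_DIM)] for _ in range(5)]
params = init_mlp(EMB_DIM + len(time_encoding(0.0)), 32, 3)
print(deform_centers(centers, embeddings, params, t=0.5))

ground = [[float(x), float(y), 0.0] for x in range(3) for y in range(3)]
heights = [[0.0] for _ in ground]
heights[4] = [0.2]  # one Gaussian sticks out of an otherwise flat ground patch
print(knn_consistency_loss(ground, heights, k=3))
```

Sharing one MLP across all Gaussians while giving each its own embedding keeps the parameter count small and lets each Gaussian follow its own motion. The KNN penalty pulls each ground Gaussian toward its neighbors' average, which in this reading supplies the smoothness that weak photometric texture cannot.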
