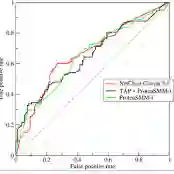

As machine learning (ML) models become mainstream, especially in sensitive application areas, the risk of data leakage is a growing concern. Membership inference attacks (MIAs) have shown that trained models can reveal sensitive training data, jeopardizing confidentiality. Traditional Artificial Neural Networks (ANNs) dominate ML applications, but neuromorphic architectures, specifically Spiking Neural Networks (SNNs), are emerging as promising alternatives because of their low power consumption and event-driven processing, akin to biological neurons. Privacy in ANNs is well studied; however, little work has explored the privacy-preserving properties of SNNs. This paper examines whether SNNs inherently offer better privacy. Using MIAs, we assess the privacy resilience of SNNs versus ANNs across diverse datasets. We analyze the impact of learning algorithms (surrogate gradient and evolutionary), frameworks (snnTorch, TENNLab, LAVA), and parameters on SNN privacy. Our findings show that SNNs consistently outperform ANNs in privacy preservation, with evolutionary algorithms offering additional resilience. For instance, on CIFAR-10, the MIA AUC against SNNs is 0.59, significantly lower than the 0.82 against ANNs, and on CIFAR-100 it is 0.58 for SNNs compared to 0.88 for ANNs (a lower attack AUC means less membership leakage). Additionally, we explore the privacy-utility trade-off with Differentially Private Stochastic Gradient Descent (DPSGD), finding that SNNs sustain less accuracy loss than ANNs under similar privacy constraints.
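To make the AUC figures concrete, the following is a minimal sketch of how the AUC of a score-threshold membership inference attack can be computed. It is not the paper's evaluation pipeline: the function name `attack_auc` and the toy scores are hypothetical, and it assumes the attacker assigns each sample a single score (for example, the model's confidence on the true label), with higher scores indicating "member".

```python
import numpy as np

def attack_auc(member_scores, nonmember_scores):
    """AUC of a threshold attack that predicts 'member' for high scores.

    The AUC equals the probability that a randomly chosen member receives a
    higher score than a randomly chosen non-member, with ties counted as 0.5
    (the normalized Mann-Whitney U statistic).
    """
    m = np.asarray(member_scores, dtype=float)
    n = np.asarray(nonmember_scores, dtype=float)
    # Pairwise score differences: one row per member, one column per non-member.
    diff = m[:, None] - n[None, :]
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return float(wins / (m.size * n.size))

# Hypothetical toy scores, for illustration only (not data from the paper).
members = [0.9, 0.6]
nonmembers = [0.7, 0.2]
print(attack_auc(members, nonmembers))  # 0.75
```

An AUC near 0.5 means the attacker does no better than random guessing, while an AUC near 1.0 means members and non-members are almost perfectly separable.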
