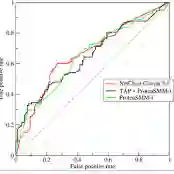

Artificial intelligence is revealing what medicine never intended to encode. Deep vision models trained on chest X-rays can now detect not only disease but also invisible traces of social inequality. In this study, we show that state-of-the-art architectures (DenseNet121, SwinV2-B, MedMamba) can predict a patient's health insurance type, a strong proxy for socioeconomic status, from normal chest X-rays with accuracy well above chance (AUC of about 0.70 on MIMIC-CXR-JPG and 0.68 on CheXpert). A separate machine learning experiment that predicts insurance type from age, race, and sex labels alone indicates that the signal is unlikely to be driven by these demographic features, and the signal remains detectable when the model is trained exclusively on a single racial group. Patch-based occlusion shows that the signal is diffuse rather than localized, distributed mainly across the upper and mid-thoracic regions. This suggests that deep networks may be internalizing subtle traces of clinical environments, equipment differences, or care pathways, in effect learning socioeconomic segregation itself. These findings challenge the assumption that medical images are neutral biological data. By uncovering how models perceive and exploit these hidden social signatures, this work reframes fairness in medical AI: the goal is no longer only to balance datasets or adjust thresholds, but to interrogate and disentangle the social fingerprints embedded in clinical data itself.
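
Patch-based occlusion works by masking one region of the image at a time and measuring how much the model's output changes. The following is a minimal, model-agnostic sketch, not the study's implementation: it assumes a hypothetical `score_fn` that returns the model's probability for one insurance class, and the patch size, stride, and mean-intensity fill are illustrative choices rather than the settings used in the paper.

```python
import numpy as np


def occlusion_map(image, score_fn, patch=16, stride=16, fill=None):
    """Patch-based occlusion sensitivity map (illustrative sketch).

    image:    2-D array (H, W), e.g. a grayscale chest X-ray.
    score_fn: callable mapping an (H, W) float array to a scalar score, e.g. a
              classifier's probability for one insurance class (hypothetical).
    patch, stride, fill: illustrative defaults, not settings from the study.
    Returns an (H, W) map holding the mean drop in score over all patches that
    covered each pixel (0 where no patch fit, e.g. at ragged edges).
    """
    img = np.asarray(image, dtype=float)
    h, w = img.shape
    fill_value = img.mean() if fill is None else fill  # neutral gray baseline
    base = score_fn(img)
    drop_sum = np.zeros((h, w))
    counts = np.zeros((h, w))
    for top in range(0, h - patch + 1, stride):
        for left in range(0, w - patch + 1, stride):
            occluded = img.copy()
            occluded[top:top + patch, left:left + patch] = fill_value
            drop = base - score_fn(occluded)  # positive: region supported the score
            drop_sum[top:top + patch, left:left + patch] += drop
            counts[top:top + patch, left:left + patch] += 1
    # Average over overlapping patches; leave never-covered pixels at 0.
    return np.divide(drop_sum, counts, out=np.zeros_like(drop_sum), where=counts > 0)


# Toy check with a hypothetical scorer that only "looks at" the top 16 rows.
def toy_score(x):
    return float(x[:16, :].mean())


demo = np.zeros((64, 64))
demo[:16, :] = 1.0
heat = occlusion_map(demo, toy_score, patch=16, stride=16)
print(heat[0, 0], heat[32, 32])  # prints 0.1875 0.0
```

In this toy case only patches inside the top band lower the score, so the map is nonzero there and zero elsewhere. Under this approach, a diffuse signal would appear as many patches with small, similar drops rather than a few patches with large ones; using a stride smaller than the patch size gives a smoother map at the cost of more forward passes.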
