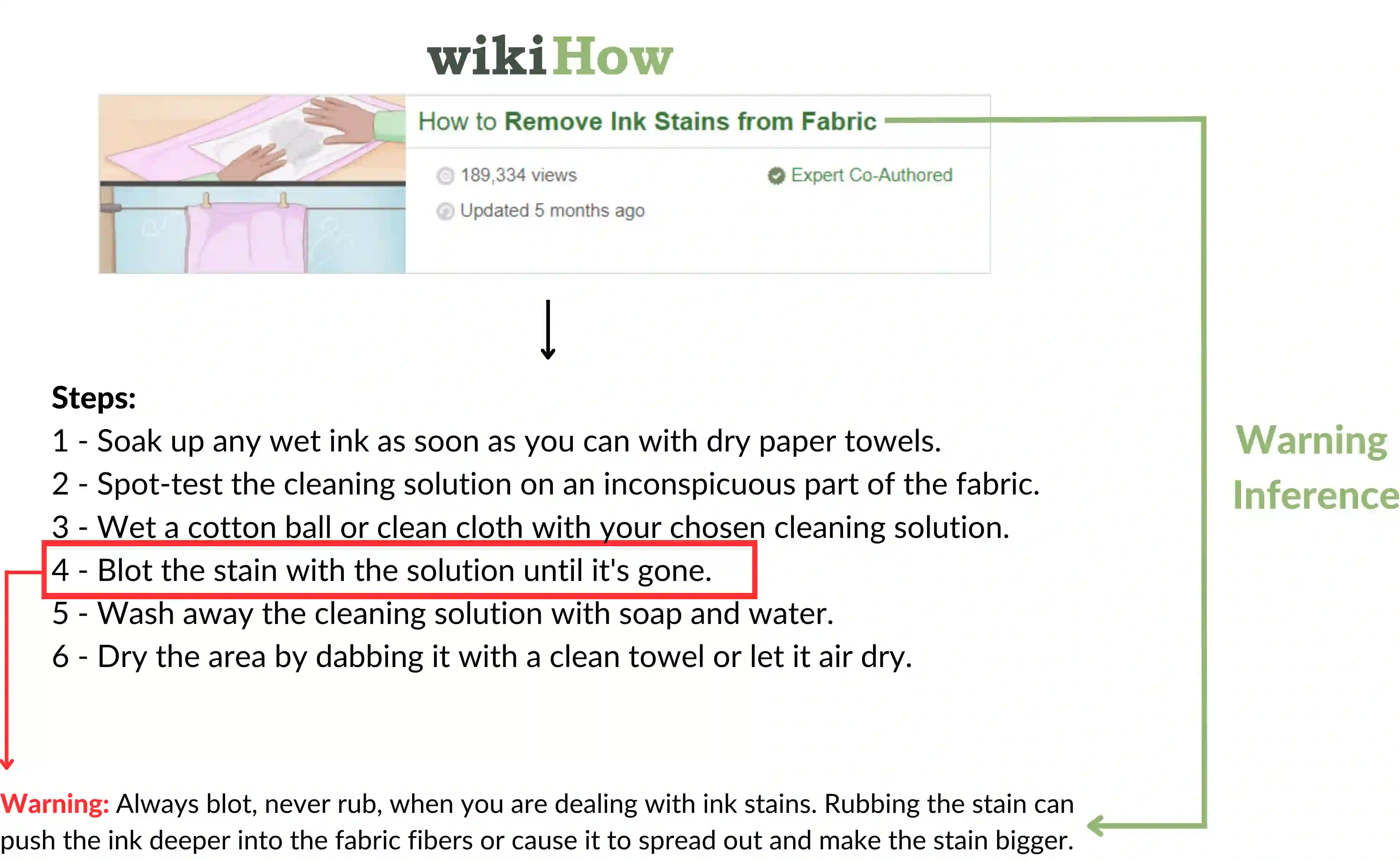

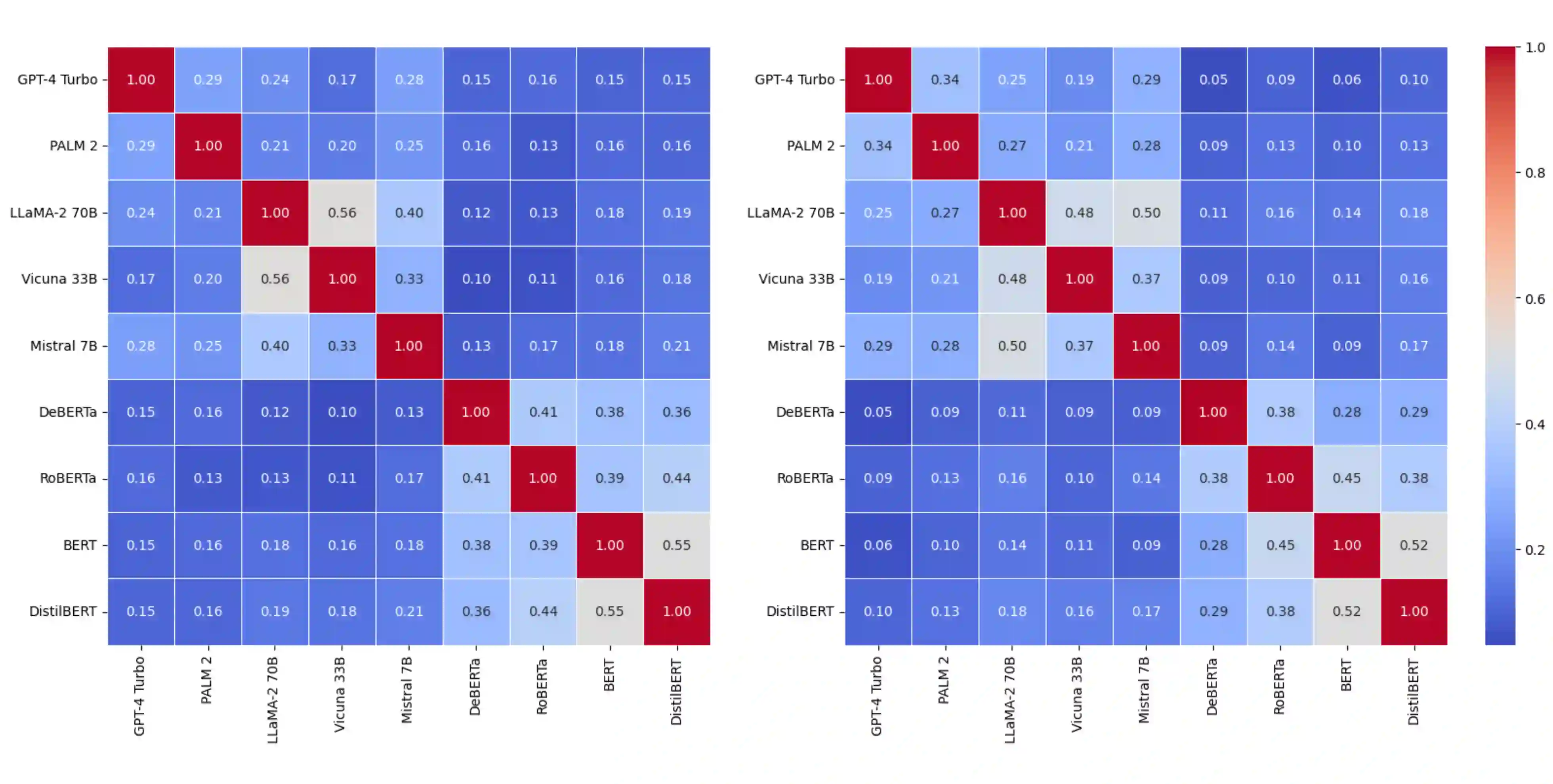

Recently, there has been growing interest within the community regarding whether large language models are capable of planning or executing plans. However, most prior studies use LLMs to generate high-level plans for simplified scenarios lacking linguistic complexity and domain diversity, limiting analysis of their planning abilities. These setups constrain evaluation methods (e.g., predefined action space), architectural choices (e.g., only generative models), and overlook the linguistic nuances essential for realistic analysis. To tackle this, we present PARADISE, an abductive reasoning task using Q\&A format on practical procedural text sourced from wikiHow. It involves warning and tip inference tasks directly associated with goals, excluding intermediary steps, with the aim of testing the ability of the models to infer implicit knowledge of the plan solely from the given goal. Our experiments, utilizing fine-tuned language models and zero-shot prompting, reveal the effectiveness of task-specific small models over large language models in most scenarios. Despite advancements, all models fall short of human performance. Notably, our analysis uncovers intriguing insights, such as variations in model behavior with dropped keywords, struggles of BERT-family and GPT-4 with physical and abstract goals, and the proposed tasks offering valuable prior knowledge for other unseen procedural tasks. The PARADISE dataset and associated resources are publicly available for further research exploration with https://github.com/GGLAB-KU/paradise.

翻译:摘要:近期,社区对大型语言模型是否具备规划或执行计划的能力日益关注。然而,多数先前研究仅使用LLMs对缺乏语言复杂性和领域多样性的简化场景生成高层级计划,限制了对其规划能力的分析。这类设置约束了评估方法(如预定义动作空间)、架构选择(如仅限生成式模型),且忽略了现实分析所必需的语言细微差别。为解决此问题,我们提出PARADISE——一项基于wikiHow实用程序性文本的溯因推理任务,采用问答形式。该任务包含与目标直接相关的警告与技巧推理任务,排除中间步骤,旨在测试模型仅凭给定目标推断计划隐含知识的能力。通过微调语言模型与零样本提示的实验表明:多数场景下,任务专用小模型的性能优于大型语言模型。尽管取得进展,所有模型仍未能达到人类表现。值得注意的是,我们的分析揭示了有趣发现,例如:关键词缺失时模型行为的变化、BERT族模型与GPT-4在物理目标与抽象目标上的表现差异,以及所提任务为其他未见程序性任务提供的宝贵先验知识。PARADISE数据集及相关资源已通过https://github.com/GGLAB-KU/paradise 公开,以促进后续研究探索。