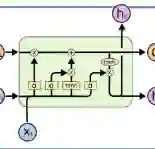

This paper introduces a novel method for real-time exercise classification using a Bidirectional Long Short-Term Memory (BiLSTM) neural network. Existing exercise recognition approaches often rely on synthetic datasets and on raw coordinate inputs that are sensitive to user and camera variations, and they fail to fully exploit the temporal dependencies in exercise movements. These issues limit their generalizability and robustness in real-world conditions, where lighting, camera angles, and user body types vary. To address these challenges, we propose a BiLSTM-based model that leverages invariant features, such as joint angles, alongside raw coordinates. By using both angles and (x, y, z) coordinates, the model adapts to changes in perspective, user positioning, and body differences, which improves generalization. Training on 30-frame sequences enables the BiLSTM to capture the temporal context of exercises and to recognize patterns that evolve over time. We compiled a dataset that combines synthetic data from the InfiniteRep dataset with real-world videos from Kaggle and other sources. The dataset covers four common exercises: squat, push-up, shoulder press, and bicep curl. The model was trained and validated on this combined dataset and achieved an accuracy of over 99% on the test set. To assess generalizability, the model was also evaluated on two separate test sets representative of typical usage conditions. The results section compares the model with the previous approach from the literature and shows that the proposed model performs best. The classifier is integrated into a web application that provides real-time exercise classification and repetition counting without manual exercise selection. The demo and datasets are available in the following GitHub repository: https://github.com/RiccardoRiccio/Fitness-AI-Trainer-With-Automatic-Exercise-Recognition-and-Counting.
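To illustrate how joint angles and raw coordinates might be combined and grouped into 30-frame sequences, here is a minimal sketch. The abstract does not specify which joints are used, how angles are computed, or how windows are strided, so the function names (`joint_angle`, `frame_features`, `sliding_windows`), the shoulder-elbow-wrist triple, and the stride of 1 are all illustrative assumptions rather than the authors' implementation.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]  # one (x, y, z) landmark


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at joint b, between segments b->a and b->c.

    The angle does not change when all three points are translated,
    rotated, or uniformly scaled, which is why it can serve as an
    invariant feature next to the raw coordinates.
    """
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    norm_ba = math.sqrt(sum(v * v for v in ba))
    norm_bc = math.sqrt(sum(v * v for v in bc))
    if norm_ba == 0.0 or norm_bc == 0.0:
        return 0.0  # degenerate case: two landmarks coincide
    cos_theta = sum(x * y for x, y in zip(ba, bc)) / (norm_ba * norm_bc)
    cos_theta = max(-1.0, min(1.0, cos_theta))  # guard against rounding
    return math.degrees(math.acos(cos_theta))


def frame_features(shoulder: Point, elbow: Point, wrist: Point) -> List[float]:
    """Hypothetical per-frame vector: 9 raw coordinates plus 1 elbow angle."""
    return [*shoulder, *elbow, *wrist, joint_angle(shoulder, elbow, wrist)]


def sliding_windows(
    frames: Sequence[List[float]], length: int = 30, step: int = 1
) -> List[List[List[float]]]:
    """Split per-frame feature vectors into fixed-length sequences.

    Returns an empty list when fewer than `length` frames are available.
    """
    return [
        list(frames[start:start + length])
        for start in range(0, len(frames) - length + 1, step)
    ]
```

Each window produced this way has shape (30, number of features) and could be fed to a sequence model such as a BiLSTM. A real pipeline would presumably use many more landmarks and angles than the single arm triple shown here.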
