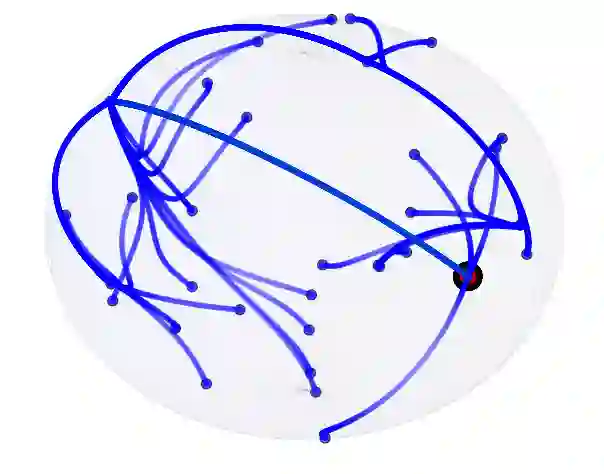

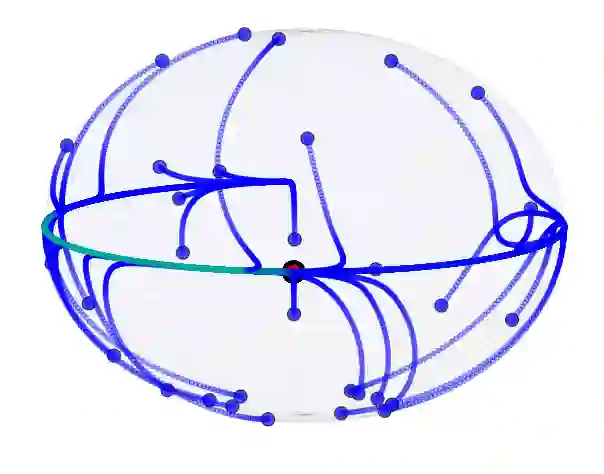

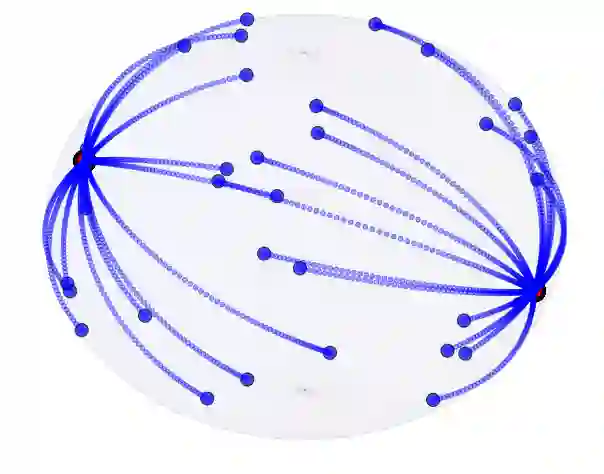

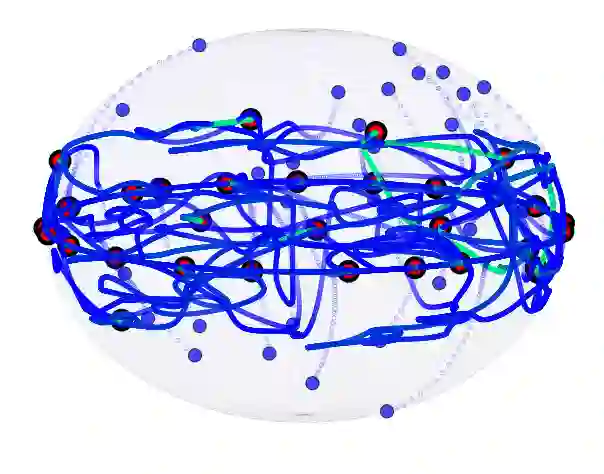

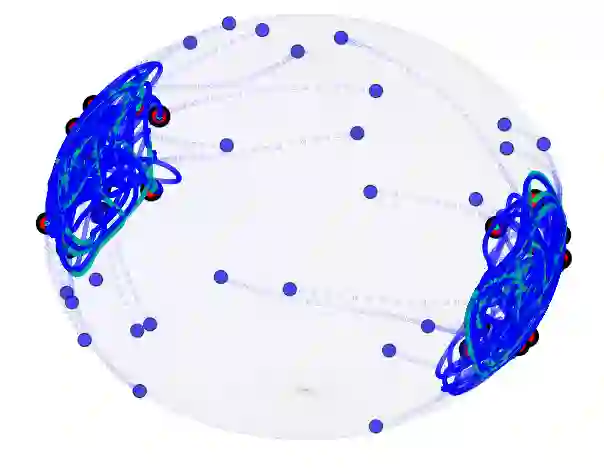

This work modifies the self-attention dynamics proposed by Geshkovski et al. (arXiv:2312.10794) to better reflect the causally masked attention used in practice in transformer architectures for generative AI. The modification yields an interacting particle system that cannot be interpreted as a mean-field gradient flow. Despite this loss of structure, we significantly strengthen the results of Geshkovski et al. (arXiv:2312.10794) in this context: while previous rigorous results focused on cases where all three matrices (key, query, and value) were scaled identities, we prove asymptotic convergence to a single cluster for arbitrary key-query matrices and a value matrix equal to the identity. Additionally, we establish a connection to the classical Rényi parking problem from combinatorial geometry, taking initial theoretical steps towards demonstrating the existence of metastable states.
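To make the setting concrete, the following is a minimal sketch of what a causally masked attention particle system could look like. The abstract does not state the equations, so the specific form used here is an assumption: tokens are points on the unit sphere, particle i attends only to particles j ≤ i with softmax weights proportional to exp(β⟨Qxᵢ, Kxⱼ⟩), and the resulting average of Vxⱼ is projected onto the tangent space at xᵢ. The function names, the inverse temperature `beta`, the step size `dt`, and the explicit Euler discretization are all hypothetical choices made for illustration.

```python
import math
import random


def dot(x, y):
    return sum(a * b for a, b in zip(x, y))


def matvec(A, x):
    return [dot(row, x) for row in A]


def normalize(x):
    norm = math.sqrt(dot(x, x))
    return [a / norm for a in x]


def identity(d):
    return [[1.0 if i == j else 0.0 for j in range(d)] for i in range(d)]


def random_matrix(d, rng):
    # Arbitrary (here: Gaussian) matrix, standing in for a key or query matrix.
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(d)]


def random_sphere_points(n, d, rng):
    # n points drawn uniformly on the unit sphere in R^d.
    return [normalize([rng.gauss(0.0, 1.0) for _ in range(d)]) for _ in range(n)]


def causal_attention_step(X, Q, K, V, beta=1.0, dt=0.05):
    """One explicit Euler step of the ASSUMED causally masked dynamics."""
    QX = [matvec(Q, x) for x in X]
    KX = [matvec(K, x) for x in X]
    VX = [matvec(V, x) for x in X]
    new_X = []
    for i, x in enumerate(X):
        # Causal mask: particle i only sees particles 0..i.
        logits = [beta * dot(QX[i], KX[j]) for j in range(i + 1)]
        shift = max(logits)  # subtract the max for numerical stability
        weights = [math.exp(l - shift) for l in logits]
        total = sum(weights)
        drift = [
            sum(w * VX[j][k] for j, w in enumerate(weights)) / total
            for k in range(len(x))
        ]
        # Project the drift onto the tangent space of the sphere at x.
        radial = dot(drift, x)
        tangent = [v - radial * a for v, a in zip(drift, x)]
        # Euler step followed by renormalization back onto the sphere.
        new_X.append(normalize([a + dt * t for a, t in zip(x, tangent)]))
    return new_X
```

Under these assumptions, with `V = identity(d)` the first particle attends only to itself, so its projected drift vanishes and it stays fixed; iterating `causal_attention_step` lets one observe numerically how the remaining particles move relative to it for different choices of `Q` and `K`.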
