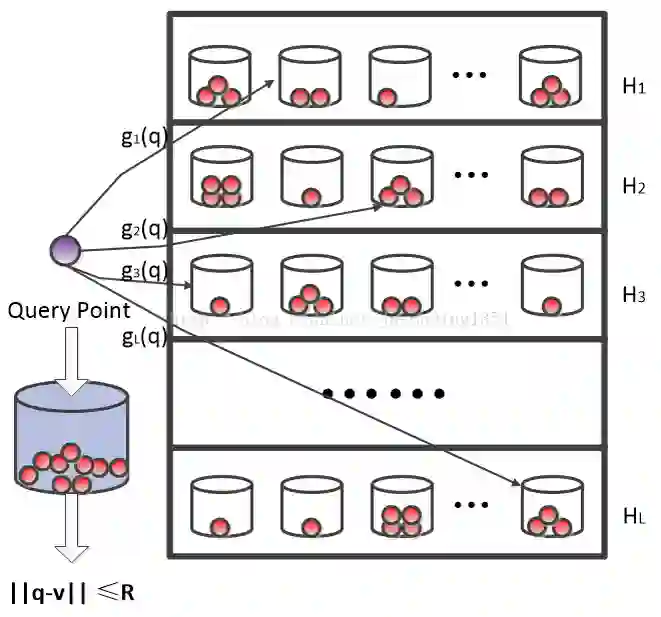

Bipartite graph hashing (BGH) is widely used for Top-K search in Hamming space because of its low storage and inference costs. Recent work applies graph convolutional hashing to BGH and achieves state-of-the-art performance. However, the contributions of several influencing factors to hashing performance have not been explored in depth: the counts of same and different signs between two binary embeddings during Hamming space search (sign property), the contribution of the sub-embeddings at each layer (model property), the contribution of the different node types in the bipartite graph (node property), and the combination of augmentation methods. In this work, we build a lightweight graph convolutional hashing model, LightGCH, mainly by removing the augmentation methods from the state-of-the-art model BGCH. By analyzing the contribution of each layer and node type to performance, together with the Hamming similarity statistics at each layer, we find that in LightGCH the actual neighbors in the bipartite graph tend to have low Hamming similarity at the shallow layer, while all nodes tend to have high Hamming similarity at the deep layers. To address these problems, we propose a novel sign-guided framework, SGBGH, which uses sign-guided negative sampling to increase the Hamming similarity of neighbors and sign-aware contrastive learning to help nodes learn more uniform representations. Experimental results show that SGBGH significantly outperforms BGCH and LightGCH in embedding quality.
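
To make the sign property concrete, the following is a minimal sketch, not the paper's implementation, of how same/different sign counts relate to Hamming similarity and Top-K ranking for codes in {-1, +1}. The function names (`binarize`, `sign_counts`, `hamming_similarity`, `top_k`) are hypothetical, and normalizing the similarity as the fraction of matching signs is an assumption; the paper may define it differently. The sketch relies only on the standard identity that the inner product of two ±1 codes equals the same-sign count minus the different-sign count.

```python
import numpy as np

def binarize(x):
    """Map real-valued embeddings to +1/-1 codes by sign (0 is mapped to +1)."""
    return np.where(np.asarray(x) >= 0, 1, -1)

def sign_counts(a, b):
    """Return (same, diff): how many positions agree or disagree in sign."""
    a, b = np.asarray(a), np.asarray(b)
    same = int(np.sum(a == b))
    return same, a.size - same

def hamming_similarity(a, b):
    """Fraction of positions with the same sign (assumed normalization)."""
    same, _ = sign_counts(a, b)
    return same / np.asarray(a).size

def top_k(query, codes, k):
    """Rank candidate codes against a query code.

    For +1/-1 codes the inner product equals same - diff, so sorting by it
    gives the same order as sorting by Hamming similarity.
    """
    scores = np.asarray(codes) @ np.asarray(query)
    return np.argsort(-scores, kind="stable")[:k]

# Toy example with hand-written 4-bit codes.
query = binarize([0.3, -1.2, 0.8, 0.1])       # -> [ 1, -1,  1,  1]
candidates = np.array([
    [ 1, -1,  1,  1],   # identical to the query
    [-1,  1, -1, -1],   # every sign flipped
    [ 1, -1,  1, -1],   # one sign differs
])
ranking = top_k(query, candidates, k=2)
```

In this toy case the identical code ranks first and the code with one differing sign ranks second, since their inner products with the query are 4 and 2 respectively.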
