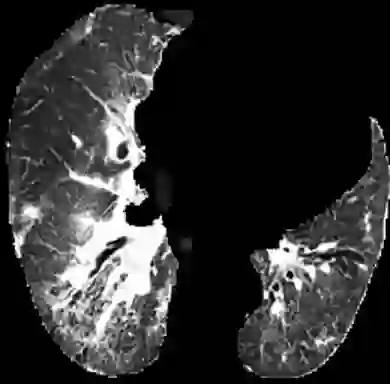

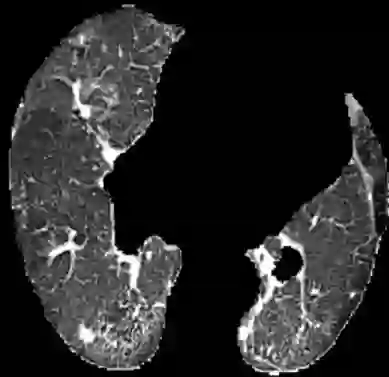

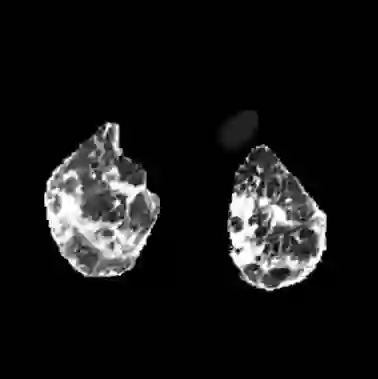

In the field of medical imaging, particularly in tasks related to early disease detection and prognosis, understanding the reasoning behind an AI model's predictions is essential for assessing its reliability. Conventional explanation methods struggle to identify the decisive features in medical image classification, especially when the discriminative features are subtle or not immediately evident. To address this limitation, we propose an agent model that generates counterfactual images: modified versions of an input image that elicit a different decision when fed into a black-box model. By employing this agent model, we can uncover the influential image patterns that drive the black-box model's final predictions, and thereby efficiently identify the features that influence the decisions of a deep black-box model. We validated our approach on demanding medical prognosis tasks, demonstrating its efficacy and its potential, compared with existing interpretation methods, to enhance the reliability of deep learning models in medical image classification. The code will be publicly available at https://github.com/ayanglab/DiffExplainer.
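To make the counterfactual idea concrete, the sketch below is a minimal illustration under stated assumptions, not the authors' agent model. It swaps in a classic optimization-based counterfactual objective: find an input close to the original that pushes a black-box classifier toward a target decision, using only forward queries (finite-difference gradients). The 8-pixel "image", the classifier `toy_black_box`, and the helpers `counterfactual_search` and `difference_map` are all hypothetical stand-ins. The difference between the counterfactual and the original then points to the features that drive the decision.

```python
import math
from typing import Callable, List

Vector = List[float]


def toy_black_box(x: Vector) -> float:
    """Hypothetical stand-in for a black-box classifier; returns P(class = 1).
    Only pixels 2 and 5 carry weight, so they are the 'decisive' features."""
    weights = [0.0, 0.0, 3.0, 0.0, 0.0, -2.0, 0.0, 0.0]
    bias = -0.5
    z = sum(w * v for w, v in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def counterfactual_search(
    model: Callable[[Vector], float],
    x: Vector,
    target: float,
    lam: float = 0.1,
    lr: float = 0.5,
    steps: int = 500,
    eps: float = 1e-4,
) -> Vector:
    """Minimize (model(x') - target)^2 + lam * ||x' - x||^2 over x'.
    The model is only queried, never differentiated, so gradients are
    estimated with forward finite differences."""

    def loss(v: Vector) -> float:
        pred_term = (model(v) - target) ** 2
        dist_term = sum((a - b) ** 2 for a, b in zip(v, x))
        return pred_term + lam * dist_term

    x_cf = list(x)
    for _ in range(steps):
        base = loss(x_cf)
        grad = []
        for i in range(len(x_cf)):
            bumped = list(x_cf)
            bumped[i] += eps
            grad.append((loss(bumped) - base) / eps)
        # Plain gradient-descent step on the estimated gradient.
        x_cf = [v - lr * g for v, g in zip(x_cf, grad)]
    return x_cf


def difference_map(x: Vector, x_cf: Vector) -> Vector:
    """Per-pixel magnitude of change; large values mark influential pixels."""
    return [abs(a - b) for a, b in zip(x_cf, x)]


if __name__ == "__main__":
    x = [0.0] * 8  # original input, classified as class 0
    x_cf = counterfactual_search(toy_black_box, x, target=1.0)
    print("original prob:", round(toy_black_box(x), 3))
    print("counterfactual prob:", round(toy_black_box(x_cf), 3))
    print("difference map:", [round(d, 3) for d in difference_map(x, x_cf)])
```

The distance penalty `lam` keeps the counterfactual near the original, so only the pixels the classifier actually relies on (here, indices 2 and 5) change noticeably. A learned generator, as proposed in the abstract, would replace this per-image optimization with a model that produces realistic counterfactual images directly.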
