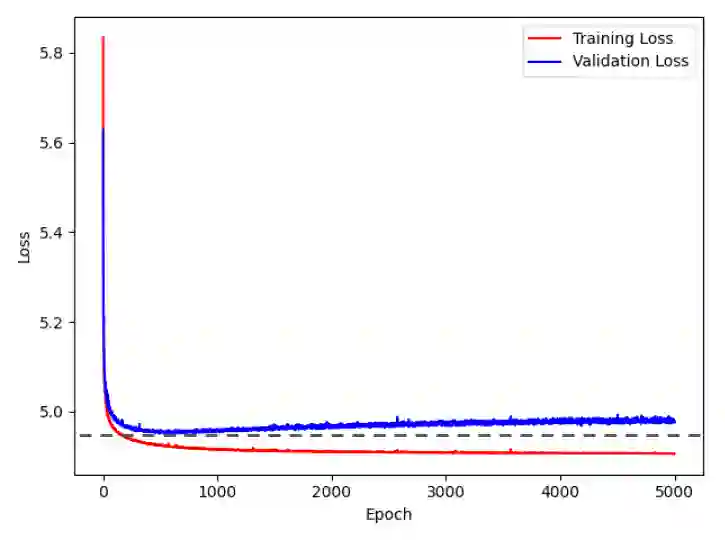

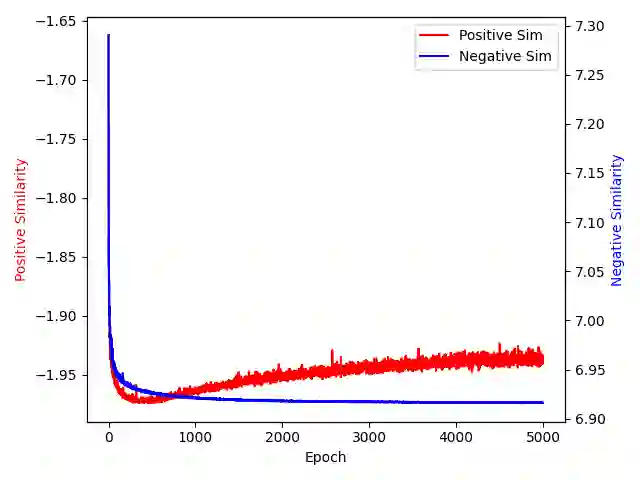

Overfitting describes a machine learning phenomenon in which a model fits the training data too closely, resulting in poor generalization. While this phenomenon is thoroughly documented for many forms of supervised learning, it is not well examined in the context of unsupervised learning. In this work we examine the nature of overfitting in unsupervised contrastive learning. We show that overfitting can indeed occur and describe the mechanism behind it.
