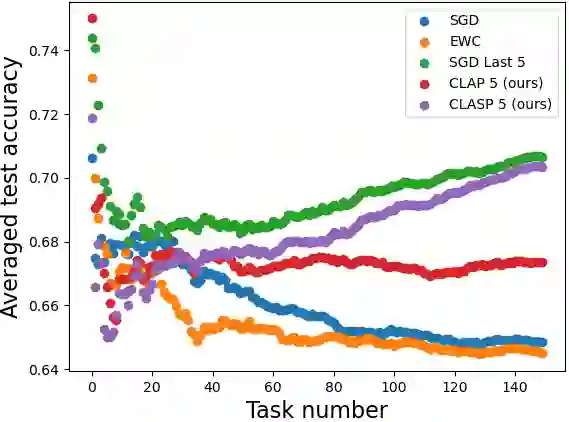

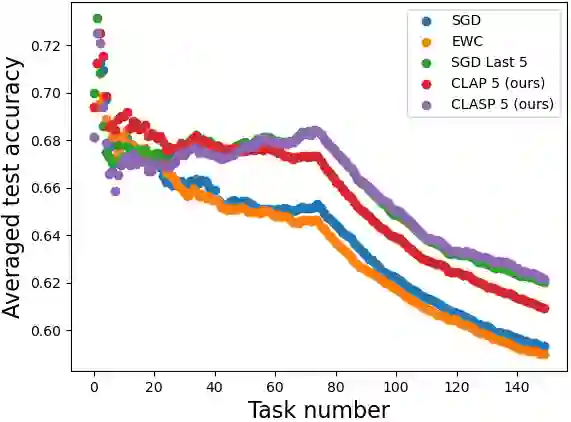

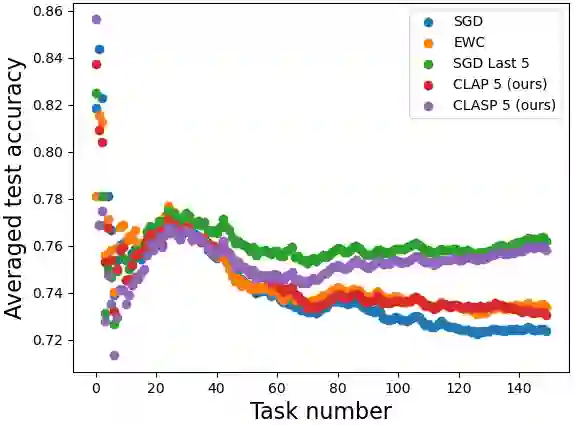

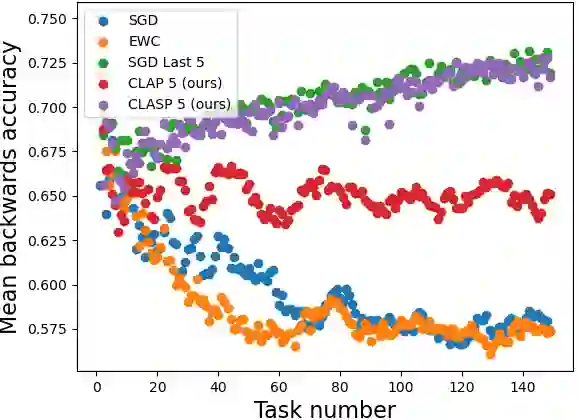

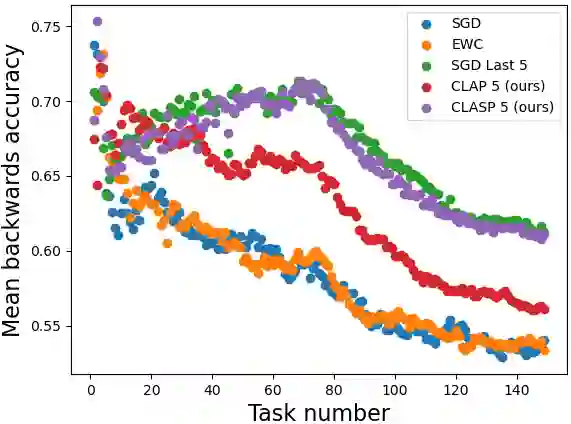

In continual learning, knowledge must be preserved and reused across tasks, maintaining good transfer to future tasks while minimizing forgetting of previously learned ones. While several practical algorithms have been devised for this setting, few theoretical works have aimed to quantify and bound the degree of forgetting in general settings. We provide both data-dependent and oracle upper bounds that apply regardless of the choice of model and algorithm, as well as bounds for Gibbs posteriors. We derive an algorithm inspired by our bounds and demonstrate empirically that our approach yields improved forward and backward transfer.
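The abstract does not say how forward and backward transfer are measured. One widely used convention, from the GEM paper (Lopez-Paz and Ranzato, 2017), reads both off an accuracy matrix `R`, where `R[i, j]` is the test accuracy on task `j` after training sequentially through task `i`, plus a baseline vector `b` of accuracies at random initialization. The sketch below assumes those definitions and adds a common average-forgetting measure. It is illustrative only and is not necessarily how the authors compute their metrics. The function name `transfer_metrics` is hypothetical.

```python
import numpy as np


def transfer_metrics(R, b):
    """Illustrative (assumed, GEM-style) continual-learning metrics.

    R[i, j]: test accuracy on task j after training sequentially on tasks 0..i.
    b[j]:    accuracy on task j of the model at its random initialization.
    Returns (backward transfer, forward transfer, average forgetting).
    """
    R = np.asarray(R, dtype=float)
    b = np.asarray(b, dtype=float)
    T = R.shape[0]

    # Backward transfer: final accuracy on each earlier task minus the
    # accuracy measured right after that task was learned (negative = forgetting).
    bwt = np.mean([R[T - 1, i] - R[i, i] for i in range(T - 1)])

    # Forward transfer: accuracy on task i just before training on it,
    # relative to the random-initialization baseline b[i].
    fwt = np.mean([R[i - 1, i] - b[i] for i in range(1, T)])

    # Average forgetting: best accuracy reached on an earlier task before the
    # final stage, minus the final accuracy on that task.
    forgetting = np.mean([R[: T - 1, j].max() - R[T - 1, j] for j in range(T - 1)])

    return float(bwt), float(fwt), float(forgetting)


if __name__ == "__main__":
    # Toy 3-task accuracy matrix (made-up numbers, for illustration only).
    R = [[0.90, 0.20, 0.10],
         [0.80, 0.85, 0.30],
         [0.70, 0.80, 0.90]]
    b = [0.10, 0.10, 0.10]
    print(transfer_metrics(R, b))
```

In this toy matrix the final model has lost accuracy on the first two tasks (negative backward transfer), while earlier training already helps on upcoming tasks (positive forward transfer). An "improved" method in this sense would raise both numbers.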
