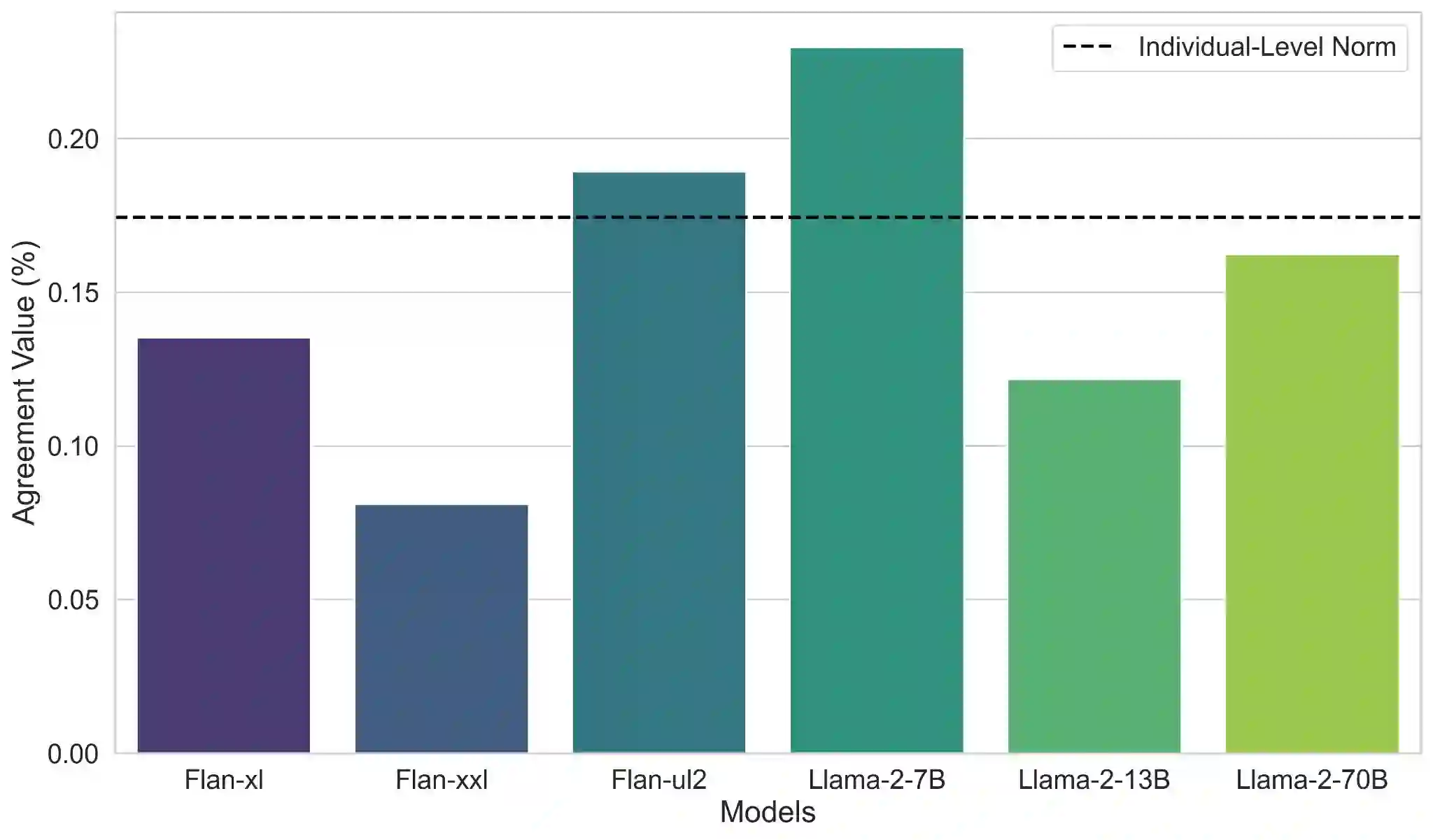

Cross-domain alignment refers to the task of mapping a concept from one domain to another, for example: "If a *doctor* were a *color*, what color would it be?" This seemingly peculiar task is designed to investigate how people represent concrete and abstract concepts through their mappings between categories and their reasoning processes over those mappings. In this paper, we adapt this task from cognitive science to evaluate the conceptualization and reasoning abilities of large language models (LLMs) through a behavioral study. We examine several LLMs by prompting them with a cross-domain mapping task and analyzing their responses at both the population and individual levels. Additionally, we assess the models' ability to reason about their predictions by analyzing and categorizing their explanations for these mappings. The results reveal several similarities between humans' and models' mappings and explanations, suggesting that models represent concepts similarly to humans. This similarity is evident not only in the models' representations but also in their behavior. Furthermore, the models mostly provide valid explanations and deploy reasoning paths that are similar to those of humans.
